Feeling uncertain about what to expect in your upcoming interview? We’ve got you covered! This blog highlights the most important Forensic Data Analysis interview questions and provides actionable advice to help you stand out as the ideal candidate. Let’s pave the way for your success.

Questions Asked in Forensic Data Analysis Interview

Q 1. Explain the process of acquiring data from a hard drive in a forensically sound manner.

Acquiring data from a hard drive in a forensically sound manner requires meticulous steps to ensure data integrity and admissibility in court. Think of it like carefully excavating a delicate archeological site: any misstep could destroy crucial evidence. The process begins by connecting the drive through a write-blocker, a device that prevents any writes to the original drive and so preserves its state. We then create a forensic image (a bit-by-bit copy) of the drive. During imaging, a hash (like SHA-256) is computed over the source data, and the same hash is computed over the finished image; matching values confirm that the image is an exact replica. From then on, we work exclusively with the image, leaving the original drive untouched. The whole process is documented meticulously and forms part of the chain of custody.

For example, imagine investigating a computer suspected of being used in a cybercrime. We would first use a write-blocker to connect the drive to a forensic workstation. We’d then use imaging software (like FTK Imager or EnCase) to create a bit-stream copy. The software would generate a hash value for both the original and the image, proving their identical nature. Any discrepancy would indicate a problem during the process.
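The verification step described above can be sketched in a few lines of Python. This is a minimal illustration rather than a replacement for a validated imaging tool; the function names `sha256_of_stream` and `images_match` are hypothetical, and in-memory buffers stand in for the write-blocked device and the image file.

```python
import hashlib
import io

CHUNK_SIZE = 1024 * 1024  # read 1 MiB at a time so large images never sit fully in memory


def sha256_of_stream(stream, chunk_size=CHUNK_SIZE):
    """Return the SHA-256 hex digest of a binary stream, read in chunks."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


def images_match(source_stream, image_stream):
    """True when both streams hash to the same SHA-256 value."""
    return sha256_of_stream(source_stream) == sha256_of_stream(image_stream)


# In-memory stand-ins (assumption for illustration only). In practice these
# would be the write-blocked source device and the image file, opened in
# binary read mode.
source = io.BytesIO(b"example sector data" * 1000)
image = io.BytesIO(b"example sector data" * 1000)
print("Image verified:", images_match(source, image))
```

Reading in fixed-size chunks keeps memory use flat regardless of drive size, which matters when the source is hundreds of gigabytes.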

Q 2. Describe different types of data recovery techniques.

Data recovery techniques vary depending on the type of data loss and the storage media. Think of it like piecing together a shattered vase – the approach depends on the extent of the damage. We have:

- File Carving: This recovers files based on their file signatures (unique byte sequences) without relying on the file system. It’s useful when the file system is damaged. Imagine finding fragments of a picture; file carving reconstructs them based on the JPEG signature.

- File System Recovery: This method works when the file system is corrupted but the data is still largely intact. It repairs the file system’s structure to restore access to files. Think of it like fixing a broken table of contents in a book to navigate to the correct chapters.

- Data Recovery Software: Specialized software uses algorithms to scan storage media for traces of deleted files or damaged sectors, attempting to reconstruct them. They can often recover files deleted from the Recycle Bin, for example. These tools are like a powerful vacuum cleaner sucking up all recoverable information.

- Low-Level Data Recovery: This is a specialized technique used when the above methods fail. It involves inspecting the raw data on the disk at a very low level (sector by sector), which is highly time-consuming and complex.

The choice of technique depends on the specific circumstances and the level of damage sustained by the storage media.

Q 3. What are the challenges of analyzing encrypted data?

Analyzing encrypted data presents significant challenges. It’s like trying to solve a puzzle without knowing the rules. The primary hurdle is obtaining the decryption key. Without it, the data remains inaccessible, and standard forensic analysis of the content is impossible. When we don’t have the key, the type of encryption used matters: weak or poorly implemented schemes may be attacked given substantial computational resources and time, while strong, correctly implemented ones are effectively out of reach of brute force. Furthermore, full-disk encryption hides files and file system metadata alike until the volume is unlocked, which hinders the process. Finally, even when encryption is defeated, the method used must be documented and defensible, which can require supporting evidence and potentially expensive expert testimony.

For example, if a suspect uses full-disk encryption with a strong password and we lack the password, accessing the data becomes a significant, and often insurmountable, problem. We might need to explore other evidence or, where the jurisdiction allows it, seek a court order to compel the suspect to provide the decryption key.

Q 4. How do you handle data integrity during a forensic investigation?

Maintaining data integrity is paramount in forensic investigations. It’s like preserving a crime scene – any alteration compromises the evidence’s value. We ensure this through several measures:

- Write-Blocking: As mentioned earlier, using write-blockers prevents any modification of the original data.

- Hashing: Calculating and documenting cryptographic hash values (like MD5 or SHA-256) of the original drive and the forensic image helps verify that no changes have occurred.

- Chain of Custody: Maintaining a log of every individual who handles the evidence, and when, makes tampering or accidental alteration easier to detect and rule out.

- Forensic Software: Using reputable, validated forensic software reduces the risk of unintentionally altering the data during analysis.

Any deviation from these practices could cast doubt on the evidence’s authenticity, significantly weakening its legal standing.

Q 5. What are the different types of hashing algorithms and their use in forensic analysis?

Hashing algorithms are mathematical functions that generate a fixed-size string (hash) from any input data. With a strong algorithm, it is computationally infeasible to find two different inputs that produce the same hash, so hashes act like digital fingerprints for data. In forensic analysis, they are crucial for verifying data integrity. Several algorithms exist, each with its strengths and weaknesses:

- MD5 (Message Digest Algorithm 5): An older algorithm, now considered cryptographically broken due to collision vulnerabilities (multiple inputs producing the same hash). While not ideal for security, it’s still used in some forensic situations for legacy reasons.

- SHA-1 (Secure Hash Algorithm 1): Also vulnerable to collisions; practical collision attacks have been demonstrated, although they are more expensive than those against MD5. It’s largely being phased out in favor of more secure options.

- SHA-256 and SHA-512 (Secure Hash Algorithm 256-bit and 512-bit): These are stronger algorithms currently widely used and are considered more secure. The higher the bit count, the more robust the algorithm typically is.

In practice, we’d use a secure hashing algorithm (like SHA-256) to generate a hash value for the original data and the forensic image. Any mismatch indicates data corruption or tampering. This ensures that evidence has not been tampered with, which is critical for legal proceedings.
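To make the differences between these algorithms concrete, the sketch below hashes the same bytes with each of them using Python's standard `hashlib` module and reports the digest size. The helper name `digest_report` and the sample input are illustrative assumptions.

```python
import hashlib


def digest_report(data, algorithms=("md5", "sha1", "sha256", "sha512")):
    """Hash the same bytes with several algorithms; return {name: hex digest}."""
    return {name: hashlib.new(name, data).hexdigest() for name in algorithms}


report = digest_report(b"evidence")
for name, value in report.items():
    # Each hex character encodes 4 bits, so length * 4 is the digest size in bits.
    print(f"{name:7} {len(value) * 4:4d} bits  {value}")
```

Recording more than one digest per item (for example MD5 alongside SHA-256) is a common way to stay compatible with legacy hash sets while still relying on a stronger algorithm for integrity.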

Q 6. Explain the concept of chain of custody and its importance.

The chain of custody meticulously documents the handling of evidence from the moment it’s collected to its presentation in court. It’s like a detailed trail of breadcrumbs, ensuring there are no gaps in accounting for the evidence’s journey. It records who handled the evidence, when they handled it, and where it was stored. This is crucial because it helps demonstrate that the evidence has not been tampered with or replaced. Any break in the chain can cast doubt on the evidence’s integrity and admissibility.

Imagine a scenario where a hard drive is seized as evidence. The chain of custody would record the officer who seized it, the date and time, its location in storage, and every person who accessed it during the investigation, including the dates and times of access. A complete chain of custody is essential to successfully use the evidence in court.
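A custody log like the one in this scenario can be modeled as a list of hand-over records and checked for gaps. The sketch below is only an illustration: the `CustodyEntry` fields, the `find_breaks` helper, and the people and locations are all hypothetical, and a real case would rely on the organization's evidence management system.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class CustodyEntry:
    evidence_id: str
    released_by: str
    received_by: str
    timestamp: datetime
    location: str


def find_breaks(entries):
    """Return (previous, current) pairs where the chain is broken.

    A break here means the person releasing the item is not the person
    who last received it, or the timestamps go backwards.
    """
    ordered = list(entries)
    breaks = []
    for previous, current in zip(ordered, ordered[1:]):
        wrong_holder = current.released_by != previous.received_by
        out_of_order = current.timestamp < previous.timestamp
        if wrong_holder or out_of_order:
            breaks.append((previous, current))
    return breaks


# Hypothetical log: the third entry is released by someone who never received the drive.
log = [
    CustodyEntry("HDD-001", "Officer A", "Evidence Clerk B", datetime(2024, 3, 5, 9, 30), "Evidence locker 2"),
    CustodyEntry("HDD-001", "Evidence Clerk B", "Examiner C", datetime(2024, 3, 6, 8, 0), "Forensic lab"),
    CustodyEntry("HDD-001", "Examiner D", "Evidence Clerk B", datetime(2024, 3, 7, 17, 15), "Evidence locker 2"),
]
breaks = find_breaks(log)
for previous, current in breaks:
    print(f"Gap: {previous.received_by} held the item, but {current.released_by} released it")
```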

Q 7. What are some common file carving techniques?

File carving is a data recovery technique used to extract files from unallocated space or damaged file systems based on their file signatures (unique identifying byte sequences). It’s like finding fragments of a broken puzzle and piecing them together based on their shapes and colors (file headers and footers). Common techniques include:

- Header and Footer Analysis: Identifying known file headers (e.g., JPEG, GIF, DOC) and footers to locate complete files.

- Data Structure Analysis: Using the internal structure of a file format (for example, length fields or embedded indexes) to validate candidate files and to determine where they end when no footer exists.

- Keyword Searching: Searching for specific keywords within the raw data to identify and recover files.

File carving is useful when dealing with deleted files or damaged file systems. Fragmented files are harder, because simple header-to-footer carving assumes a file’s data is stored contiguously. The effectiveness depends on the extent of data corruption and the availability of reliable file signatures. It’s a powerful technique, but often requires expertise and specialized tools.
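Header and footer carving can be illustrated with a short Python sketch for JPEG files, which begin with the bytes `FF D8 FF` and end with `FF D9`. This is a deliberately simplified version: it assumes contiguous data, will be confused by embedded thumbnails or stray `FF D9` bytes, and the function name `carve_jpegs` and the size cap are illustrative choices.

```python
JPEG_HEADER = b"\xff\xd8\xff"  # start-of-image marker plus the first byte of the next marker
JPEG_FOOTER = b"\xff\xd9"      # end-of-image marker


def carve_jpegs(raw, max_size=20 * 1024 * 1024):
    """Return (offset, data) pairs for header-to-footer JPEG candidates in raw bytes."""
    carved = []
    start = raw.find(JPEG_HEADER)
    while start != -1:
        end = raw.find(JPEG_FOOTER, start + len(JPEG_HEADER))
        if end == -1:
            break  # no footer left anywhere after this header
        end += len(JPEG_FOOTER)
        if end - start <= max_size:
            carved.append((start, raw[start:end]))
            search_from = end
        else:
            # Implausibly large candidate: skip this header and keep looking.
            search_from = start + 1
        start = raw.find(JPEG_HEADER, search_from)
    return carved


# Synthetic "unallocated space" containing two tiny fake JPEG bodies.
blob = (b"\x00" * 10 + b"\xff\xd8\xff\xe0AAAA\xff\xd9"
        + b"\x00" * 5 + b"\xff\xd8\xff\xe1BB\xff\xd9")
for offset, data in carve_jpegs(blob):
    print(f"candidate at offset {offset}, {len(data)} bytes")
```

Each carved candidate should still be validated (for example by opening it with an image library) before it is treated as a recovered file.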

Q 8. How do you identify and analyze malware?

Identifying and analyzing malware involves a multi-step process combining static and dynamic analysis techniques. Static analysis examines the malware without executing it, focusing on its code, structure, and metadata. This can reveal indicators of compromise (IOCs) such as suspicious strings, file hashes, and embedded commands. Dynamic analysis, on the other hand, involves running the malware in a controlled environment (like a sandbox) to observe its behavior, network connections, and registry modifications. This helps understand its functionality and potential impact.

For example, a static analysis might reveal a suspicious URL embedded within the malware’s code, indicating a potential command-and-control (C&C) server. Dynamic analysis might then show the malware attempting to connect to that URL, confirming its malicious intent and providing further insights into its operation. Tools like VirusTotal, which aggregates results from multiple antivirus engines, play a crucial role in identifying known malware samples through their unique hashes.
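A first static triage pass of this kind (hash, strings, embedded URLs) can be sketched with the standard library alone. The function name `static_triage`, the minimum string length of six, and the sample bytes are assumptions for illustration; the sample is inert data, not real malware.

```python
import hashlib
import re

# Runs of 6 or more printable ASCII bytes, similar in spirit to the Unix `strings` tool.
PRINTABLE_RUN = re.compile(rb"[\x20-\x7e]{6,}")
URL_PATTERN = re.compile(r"https?://[^\s\"'<>]+")


def static_triage(sample):
    """Collect basic indicators from a byte string without executing it."""
    strings = [m.group().decode("ascii") for m in PRINTABLE_RUN.finditer(sample)]
    urls = [url for text in strings for url in URL_PATTERN.findall(text)]
    return {
        "sha256": hashlib.sha256(sample).hexdigest(),  # usable for lookups against known samples
        "strings": strings,
        "urls": urls,
    }


# Inert stand-in bytes; the domain uses the reserved .test TLD.
sample = (b"\x00\x01MZ\x90\x00" + b"http://c2.example.test/gate.php"
          + b"\x00\x02" + b"cmd.exe /c whoami" + b"\x00")
indicators = static_triage(sample)
print(indicators["urls"])
print(indicators["strings"])
```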

The analysis also often incorporates reverse engineering techniques, where the malware’s code is decompiled or disassembled to understand its logic and algorithms. This is particularly crucial for understanding advanced persistent threats (APTs) and custom-built malware.

Q 9. Describe your experience with various forensic tools (e.g., EnCase, FTK, Autopsy).

My experience encompasses a wide range of forensic tools, including EnCase, FTK, and Autopsy. Each tool has its strengths and weaknesses, making them suitable for different investigative needs. EnCase, for instance, is renowned for its robust imaging capabilities and its ability to handle large datasets efficiently. I’ve used EnCase extensively for creating forensic images of hard drives, analyzing file systems, and recovering deleted files. Its timeline functionality is particularly useful in establishing the sequence of events.

FTK (Forensic Toolkit) provides a user-friendly interface with strong data recovery and keyword searching capabilities. I’ve found it particularly helpful in quickly identifying potentially sensitive data within large datasets. Autopsy, an open-source alternative built on The Sleuth Kit, offers a powerful platform for analyzing disk images and file systems. Its ability to integrate with various plugins expands its functionality greatly.

In practice, I often select the tool that best suits the specific needs of the case. For example, I might use EnCase for a comprehensive examination of a hard drive, and then pair a memory analysis tool such as Volatility with network traffic logs to reconstruct the timeline of a cyberattack.

Q 10. Explain your understanding of network forensics and its methodologies.

Network forensics involves the systematic investigation of network events to identify security breaches, track attackers, and gather evidence for legal proceedings. Its methodologies closely mirror those of digital forensics, emphasizing the principles of preservation, identification, extraction, documentation, and interpretation of digital evidence. However, network forensics deals specifically with data transmitted across a network.

Key methodologies include packet capture (using tools like Wireshark), log analysis (examining server, firewall, and router logs), and intrusion detection system (IDS) analysis. The focus is on reconstructing the events that occurred on the network, identifying communication patterns, and determining the source and destination of malicious activities. Network flow analysis, which aggregates network traffic data, is crucial for identifying high-volume traffic and potential anomalies.

Consider a scenario where a company suspects data exfiltration. Network forensics would involve examining network traffic logs for suspicious outbound connections, analyzing the data transferred, and identifying the target system. Packet captures would provide detailed information about the communication protocols, ports used, and data content.

Q 11. How do you analyze network traffic logs to identify malicious activity?

Analyzing network traffic logs for malicious activity often involves pattern recognition and anomaly detection. I begin by identifying the baseline network activity—what’s considered normal for the network. Deviations from this baseline are potential indicators of malicious activity. Tools like Wireshark and tcpdump can be used to capture and filter network traffic, focusing on specific protocols, ports, or IP addresses.

Specific indicators include: unusual port usage (e.g., outbound connections on non-standard ports), high volumes of outbound data transferred to unfamiliar locations, connections to known malicious IP addresses or domains (such as known C&C servers), and unusual or failed login attempts originating from unfamiliar locations. I use regular expressions and scripting to automate the search for suspicious patterns within massive log files. Log analysis tools with correlation capabilities can aid in identifying connections between seemingly disparate events.

For example, a sudden increase in outbound traffic on unusual ports, coupled with failed login attempts from a specific IP address, might suggest a compromise involving data exfiltration. Cross-referencing these findings with other logs (e.g., system logs) strengthens the evidence.
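The baseline-and-deviation idea can be sketched as a simple statistical threshold. This is one possible approach under stated assumptions: the daily outbound volumes, host addresses, and the three-standard-deviation cutoff are all made up for illustration, and real traffic usually needs per-host and time-of-day baselines.

```python
from statistics import mean, stdev


def flag_outbound_anomalies(baseline, observed, threshold=3.0):
    """Return hosts whose outbound volume exceeds baseline mean + threshold * stdev."""
    limit = mean(baseline) + threshold * stdev(baseline)
    return {host: sent for host, sent in observed.items() if sent > limit}


# Hypothetical figures: outbound megabytes per day during a normal week...
baseline_mb = [120, 135, 110, 128, 140, 125, 131]
# ...and per-host outbound megabytes on the day under investigation.
observed_mb = {"10.0.0.5": 133, "10.0.0.9": 950}

flagged = flag_outbound_anomalies(baseline_mb, observed_mb)
print(flagged)
```

A flagged host is only a lead; it still needs to be corroborated against system logs and other sources before drawing conclusions.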

Q 12. What are the key differences between static and dynamic malware analysis?

Static and dynamic malware analysis offer complementary approaches to understanding malware. Static analysis examines the malware without executing it. This involves inspecting the code, file headers, strings, and metadata for indicators of malicious behavior. This is often done using disassemblers and decompilers. It’s less resource-intensive and safer than dynamic analysis, but it can miss behaviors that only appear during execution.

Dynamic analysis, conversely, involves running the malware in a controlled environment (like a sandbox) to observe its behavior in real-time. This allows analysts to see how the malware interacts with the system, networks, and other applications. It reveals functionalities hidden in static analysis but requires more resources and carries a higher risk.

For instance, static analysis of a file might reveal the presence of suspicious API calls. However, dynamic analysis is needed to determine the context of these calls and their actual impact. An analogy would be inspecting a car’s blueprint (static) versus test-driving it (dynamic) to fully assess its performance and functionalities.

Q 13. How do you handle volatile data during an investigation?

Volatile data, such as RAM contents and network connections, is ephemeral and lost when a system is powered down. Handling it effectively is paramount in forensic investigations because it often contains crucial information about recent activities, running processes, and network communications. To capture volatile data, specialized techniques and tools are employed.

The most common approach is to create a memory dump using specialized forensic tools. These tools create a bit-by-bit copy of the RAM’s contents, allowing for detailed analysis. After obtaining the memory dump, I use dedicated tools to analyze its contents, extracting running processes, open files, network connections, and user activities. This involves correlating data from memory with data from hard drives to create a comprehensive picture.

The speed and efficiency with which volatile data is acquired are critical, given its transient nature. Live acquisition (acquiring data from a running system) should be performed as early as possible in the investigation to minimize the risk of data loss or alteration.

Q 14. Explain your understanding of the legal and ethical considerations in forensic data analysis.

Legal and ethical considerations are paramount in forensic data analysis. Investigations must adhere strictly to applicable laws and regulations, including obtaining proper authorization before accessing and analyzing data. This often involves obtaining search warrants or subpoenas. The chain of custody must be meticulously maintained, ensuring the integrity and authenticity of all evidence. Each step of the investigation must be rigorously documented.

Ethical concerns encompass issues like privacy, confidentiality, and data protection. Analysts must only access and analyze data relevant to the investigation. Personal information should be treated with utmost care and only disclosed when legally required or necessary. Transparency and objectivity are crucial; analysis should be unbiased and reported accurately, without misrepresenting data or findings. Any potential conflicts of interest must be disclosed.

For example, before examining a suspect’s computer, we need to ensure we have the legal authority to do so. During the analysis, any personally identifiable information (PII) discovered must be handled in accordance with privacy regulations. The final report must be detailed, unbiased, and clearly articulate the methodology and findings.

Q 15. Describe a situation where you had to overcome a technical challenge during a forensic investigation.

In a recent investigation involving a suspected insider threat, we encountered files encrypted with a custom algorithm. Standard decryption tools were ineffective. The challenge wasn’t just breaking the encryption, but doing so without compromising data integrity, which is paramount in a forensic context. We overcame this by combining several techniques. First, we analyzed the encryption code itself, looking for weaknesses or patterns. This involved reverse-engineering parts of the custom algorithm. We then developed a custom script, using Python and libraries like PyCryptodome, to simulate the encryption process and try different key combinations. This involved a significant amount of trial and error, guided by information gleaned from the system logs and metadata associated with the encrypted files. Finally, we cross-referenced partial decryptions with known data sources to confirm our results. Successfully decrypting these files on working copies, without breaking the chain of custody, was crucial to proving the insider’s involvement.

Q 16. How do you prioritize tasks during a large-scale forensic investigation?

Prioritization in large-scale investigations is critical. I use a risk-based approach, combining urgency and impact. I start by creating a comprehensive timeline based on the initial evidence and the overall case goals. This timeline helps to identify the most time-sensitive tasks, such as preserving volatile data (like RAM) which might be lost if we don’t act quickly. Then, I categorize evidence based on its potential relevance to the case. High-impact evidence, such as direct evidence of a crime, gets immediate attention. I document every step using a case management system to ensure transparency and auditability. Using a project management methodology like Agile allows for flexibility and prioritization adjustments as the investigation progresses. This iterative approach is crucial in responding to newly discovered leads.

Q 17. Explain your experience with different data formats and their analysis.

My experience spans a wide range of data formats. I’m proficient in analyzing file systems (NTFS, FAT32, ext4), databases (relational and NoSQL), various document types (DOCX, PDF, RTF), image formats (JPEG, PNG, GIF), and multimedia files (MP3, AVI, MOV). I also have experience with log files (system logs, web server logs, application logs) and network data (pcap files). The approach to analyzing each format differs. For example, analyzing a database might involve SQL queries to extract relevant information, while analyzing an image could involve using tools to extract metadata or hidden information. I regularly use tools such as FTK Imager, Autopsy, and EnCase to assist with format-specific analyses. Understanding the nuances of each format is key to extracting meaningful insights, maintaining data integrity, and ensuring chain of custody.

Q 18. How do you identify and analyze deleted files?

Deleted files aren’t truly gone; they often leave remnants on the storage media. Identifying them requires specialized forensic tools that can recover data from unallocated space, slack space, and the file system’s metadata. Tools like Recuva, PhotoRec, and FTK Imager can help reconstruct these files. The process typically involves creating a forensic image of the drive to avoid altering the original evidence. Then, using file carving techniques, we scan the image for file signatures and reconstruct the files based on those signatures. We also examine the file system’s Master File Table (MFT) or equivalent structures to identify file entries that have been marked as deleted but whose data might still reside on the disk. Contextual analysis is vital; we examine metadata such as timestamps to determine when the files were deleted and potentially to associate them with other events in the investigation.

Q 19. What are your experiences with memory forensics?

Memory forensics is crucial for investigating live systems. I’m experienced with acquiring and analyzing RAM images using tools like Volatility and FTK Imager. Memory analysis reveals running processes, network connections, opened files, and user activity at a specific point in time. This provides a snapshot of the system’s state, helping to identify malicious processes, malware artifacts, and evidence of recent actions. Volatility’s plugins are invaluable for tasks like analyzing network connections, recovering passwords from memory, and identifying recently accessed files. For example, we might use Volatility to examine a suspect’s RAM for evidence of recent web browsing activity, communication with malicious servers, or the presence of hidden malware. The challenge lies in the volatility of the data – memory contents are lost upon system shutdown, requiring rapid acquisition and analysis.

Q 20. Describe your understanding of cloud-based forensics.

Cloud-based forensics presents unique challenges due to the distributed nature of cloud storage and the involvement of third-party providers. My understanding involves utilizing cloud providers’ APIs and forensic tools specifically designed for cloud environments. This includes working with various cloud services such as AWS S3, Azure Blob Storage, and Google Cloud Storage. The investigative process involves obtaining legal authorization for data access, coordinating with cloud providers, and potentially using specialized cloud forensic tools to extract, analyze, and preserve evidence. The challenges include dealing with encryption, data sprawl, and the complexity of different cloud architectures. Data retention policies within cloud services need careful consideration, as data may be deleted or altered by the provider according to their own procedures.

Q 21. Explain the concept of data sanitization and secure disposal of data.

Data sanitization and secure disposal are crucial for maintaining confidentiality once data or media are no longer needed. Sanitization involves securely erasing data to prevent its recovery using standard data recovery techniques. On magnetic hard drives this typically means overwriting the data with fixed or random patterns using tools like DBAN (Darik’s Boot and Nuke) or wiping utilities provided by manufacturers. On SSDs, wear leveling can leave copies that overwriting does not reach, so the drive’s built-in secure erase function is generally preferred. For sensitive data, cryptographic erasure might be employed: the data is stored encrypted and the key is destroyed, leaving only unreadable ciphertext. Secure disposal involves physically destroying storage media, like hard drives, to prevent data retrieval. This can involve shredding, degaussing (for magnetic media), or incineration. The choice of method depends on the sensitivity of the data and regulatory requirements. Proper documentation of the sanitization and disposal processes is essential to maintain the chain of custody and meet legal and compliance obligations.

Q 22. How familiar are you with various operating systems and their file systems?

My familiarity with operating systems and their file systems is extensive. I possess in-depth knowledge of Windows (NTFS, FAT32, exFAT), macOS (APFS, HFS+), and Linux (ext2, ext3, ext4, Btrfs) file systems. This includes understanding their underlying structures, metadata, journaling mechanisms, and potential vulnerabilities. For instance, I’m adept at recovering deleted files from NTFS using tools like Recuva or FTK Imager, understanding the intricacies of the $MFT (Master File Table) and its significance in data recovery. I also understand the differences in how data is stored and accessed across these systems, crucial for interpreting evidence found on various devices. My experience extends to less common file systems as well, allowing me to handle diverse forensic scenarios.

Understanding the nuances of each file system is paramount. For example, the way timestamps are handled differs between NTFS and ext4, which can impact the timeline reconstruction during an investigation. My experience allows me to accurately interpret these differences and draw reliable conclusions.
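One concrete difference is the timestamp encoding. NTFS stores times as Windows FILETIME values (100-nanosecond intervals since 1601-01-01 UTC), whereas ext4 timestamps are based on the Unix epoch (seconds since 1970-01-01 UTC, with a nanosecond part where the inode has room for it). The sketch below normalizes both to UTC `datetime` objects so they can sit on one timeline; the function names are illustrative.

```python
from datetime import datetime, timedelta, timezone

FILETIME_EPOCH = datetime(1601, 1, 1, tzinfo=timezone.utc)


def filetime_to_datetime(filetime):
    """Convert a Windows FILETIME (100 ns ticks since 1601-01-01 UTC) to a UTC datetime."""
    # Python datetimes resolve to microseconds, so the last decimal digit is dropped.
    return FILETIME_EPOCH + timedelta(microseconds=filetime // 10)


def unix_to_datetime(seconds, nanoseconds=0):
    """Convert Unix-epoch seconds plus a nanosecond part to a UTC datetime."""
    base = datetime.fromtimestamp(seconds, tz=timezone.utc)
    return base + timedelta(microseconds=nanoseconds // 1000)


# 116444736000000000 is the FILETIME value of the Unix epoch itself.
print(filetime_to_datetime(116444736000000000))
print(unix_to_datetime(0))
```

Converting everything to UTC before merging sources avoids apparent ordering errors caused by local time zones.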

Q 23. Describe your experience working with databases in a forensic context.

My experience with databases in a forensic context is significant. I’ve worked extensively with relational database systems, including SQL Server, MySQL, PostgreSQL, and Oracle, as well as NoSQL stores. I understand how to extract data, analyze database logs, and correlate information across multiple databases to identify patterns and suspicious activity. A recent case involved analyzing a company’s SQL Server database to identify unauthorized access attempts. By examining the transaction logs and security audit logs, I was able to pinpoint the exact time and user responsible for the breach, leading to successful prosecution.

Beyond simple data extraction, I’m proficient in using SQL queries to identify anomalies, such as unusual data modifications, account creations, or deletions, which might indicate malicious activity. I can also analyze database schemas to understand how data is organized and identify potential vulnerabilities.
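An anomaly query of this kind might look like the following sketch, which uses Python's built-in `sqlite3` module and an in-memory database so it runs anywhere. The `audit_log` table, its columns, the sample rows, and the 08:00 to 17:59 business-hours window are all hypothetical; a real examination would run against a working copy of the database, never the original evidence.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE audit_log (event_time TEXT, username TEXT, action TEXT)")
conn.executemany(
    "INSERT INTO audit_log VALUES (?, ?, ?)",
    [
        ("2024-03-04 10:15:00", "alice", "UPDATE"),
        ("2024-03-04 14:40:00", "bob", "CREATE_ACCOUNT"),
        ("2024-03-05 02:47:00", "svc_backup", "CREATE_ACCOUNT"),
        ("2024-03-05 03:05:00", "svc_backup", "DELETE"),
    ],
)

# Account creations and deletions whose hour falls outside 08 to 17.
OFF_HOURS_QUERY = """
    SELECT event_time, username, action
    FROM audit_log
    WHERE action IN ('CREATE_ACCOUNT', 'DELETE')
      AND CAST(strftime('%H', event_time) AS INTEGER) NOT BETWEEN 8 AND 17
    ORDER BY event_time
"""
suspicious = conn.execute(OFF_HOURS_QUERY).fetchall()
for row in suspicious:
    print(row)
```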

Furthermore, I understand the challenges of working with encrypted databases and have experience employing various techniques to overcome those challenges, within legal and ethical boundaries, of course.

Q 24. What are some common methods used to detect data breaches?

Detecting data breaches involves a multi-faceted approach. Common methods include:

- Monitoring system logs: Analyzing security logs, application logs, and network logs for suspicious activities, such as unauthorized access attempts, unusual data transfers, or failed logins. For example, a sudden spike in failed login attempts from an unusual geographic location could be a strong indicator.

- Intrusion Detection Systems (IDS): Utilizing IDS to detect malicious network traffic and potential intrusions. IDS can alert on various suspicious activities, including port scans, malware infections, and denial-of-service attacks.

- Security Information and Event Management (SIEM): Employing SIEM tools to collect and analyze security data from multiple sources, providing a centralized view of security events. SIEM helps correlate events across various systems, providing a more complete picture of potential breaches.

- Vulnerability scanning: Regularly scanning systems for known vulnerabilities and promptly patching them. This is primarily a preventive control, but scan results also show which weaknesses an attacker could have exploited, which helps focus a breach investigation.

- Data Loss Prevention (DLP): Implementing DLP tools to prevent sensitive data from leaving the organization’s control. DLP solutions can monitor data transfers and block unauthorized access to confidential information.

- Regular security audits: Conducting regular security audits to assess the organization’s security posture and identify potential weaknesses. Audits should assess both technical and procedural security controls.

Often, a combination of these methods is used to provide comprehensive breach detection capabilities. A single indicator may not be sufficient, so correlation and analysis of multiple data points are crucial.

Q 25. Explain your understanding of different types of log files and their analysis.

Log files are invaluable in forensic investigations. They provide a chronological record of system events, user activities, and application processes. Different types of log files offer unique insights:

- System logs: These logs record critical system events, such as boot processes, driver installations, and system errors. Examples include Windows Event Logs and Linux syslog.

- Application logs: These logs track application-specific events and errors. For example, a web server log might record each request made to the server, including the IP address of the client and the requested resource.

- Network logs: These logs record network traffic, including source and destination IP addresses, ports, and protocols. Firewall logs, proxy logs, and router logs fall under this category.

- Security logs: These logs record security-related events, such as login attempts, access controls, and security audits. These are critical for detecting unauthorized access or malicious activity.

Log file analysis often involves searching for specific events, patterns, or anomalies. For example, a sudden increase in failed login attempts from a specific IP address might indicate a brute-force attack. Understanding the structure and format of different log files, as well as using log analysis tools, is essential for extracting meaningful information.
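The failed-login example can be automated with a regular expression and a counter. The sketch below assumes log lines in the style of OpenSSH authentication messages; the sample lines, host name, and IP addresses (taken from documentation ranges) are invented, and the pattern would need adjusting for other log formats.

```python
import re
from collections import Counter

# Matches lines such as:
#   "... sshd[1023]: Failed password for invalid user admin from 203.0.113.7 port 52144 ssh2"
FAILED_LOGIN = re.compile(
    r"Failed password for (?:invalid user )?(\S+) from (\d{1,3}(?:\.\d{1,3}){3})"
)


def failed_logins_by_ip(lines):
    """Count failed password attempts per source IP address."""
    counts = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(2)] += 1
    return counts


sample_lines = [
    "Mar  5 02:47:01 host sshd[1023]: Failed password for invalid user admin from 203.0.113.7 port 52144 ssh2",
    "Mar  5 02:47:03 host sshd[1023]: Failed password for invalid user admin from 203.0.113.7 port 52144 ssh2",
    "Mar  5 02:47:06 host sshd[1025]: Failed password for root from 203.0.113.7 port 52150 ssh2",
    "Mar  5 08:12:44 host sshd[2210]: Failed password for root from 198.51.100.23 port 40022 ssh2",
    "Mar  5 08:13:10 host sshd[2214]: Accepted password for alice from 192.0.2.15 port 51000 ssh2",
]
counts = failed_logins_by_ip(sample_lines)
for ip, total in counts.most_common():
    print(ip, total)
```

Sorting by count surfaces the noisiest sources first, which is usually where a brute-force attempt shows up.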

I’m proficient in using various log analysis tools and techniques, including regular expressions and scripting languages like Python to automate analysis and identify key patterns within large datasets.

Q 26. How do you handle conflicting evidence during an investigation?

Handling conflicting evidence is a crucial aspect of forensic data analysis. It requires a meticulous and methodical approach. First, I meticulously document all evidence, including its source, chain of custody, and any potential biases or limitations. Then, I rigorously analyze each piece of evidence independently, looking for corroborating or contradictory information. Techniques such as timeline analysis, cross-referencing with other data sources, and using digital forensics tools to verify data integrity help me to resolve discrepancies.

If conflicts persist, I carefully consider the potential reasons for the discrepancies. This might involve examining the reliability of the sources, assessing the potential for tampering or manipulation, or investigating inconsistencies in data collection methods. In some instances, additional investigation may be required to resolve the conflicts.

Ultimately, the goal is to present a comprehensive and unbiased analysis that considers all evidence, even conflicting information. A clear explanation of the conflicts and their potential implications is essential for drawing accurate conclusions and providing robust reports.

Q 27. What are your strengths and weaknesses as a Forensic Data Analyst?

My strengths lie in my meticulous attention to detail, my proficiency in various forensic tools and techniques, and my ability to logically analyze complex datasets. I’m adept at identifying patterns, correlations, and anomalies within large volumes of data. I pride myself on my ability to explain complex technical concepts clearly and concisely to both technical and non-technical audiences.

My main weakness is, perhaps, a tendency towards perfectionism. I sometimes spend more time than necessary meticulously reviewing my work, but I’m actively working on improving time management while maintaining the rigorous standards necessary in this field.

Key Topics to Learn for Forensic Data Analysis Interview

- Data Acquisition and Preservation: Understanding legal and ethical considerations, proper chain of custody procedures, and techniques for acquiring data from various sources (e.g., hard drives, mobile devices, cloud storage).

- Data Analysis Techniques: Mastering methods for identifying patterns, anomalies, and correlations within datasets. Practical application: Using Python with libraries such as pandas and NumPy for data manipulation and analysis.

- File System Forensics: Understanding file system structures, deleted file recovery, and data carving techniques. Practical application: Investigating deleted files on a suspect’s computer to recover incriminating evidence.

- Network Forensics: Analyzing network traffic logs, identifying intrusion attempts, and reconstructing network activities. Practical application: Tracing the source of a cyberattack by examining network packets.

- Memory Forensics: Analyzing RAM contents to identify running processes, malware, and other volatile data. Practical application: Capturing and analyzing memory dumps to identify active malware infections.

- Mobile Device Forensics: Extracting data from mobile devices, including call logs, text messages, and application data. Practical application: Recovering evidence from a suspect’s smartphone.

- Data Visualization and Reporting: Effectively communicating findings through clear and concise reports and visualizations. Practical application: Presenting forensic findings to law enforcement or legal professionals.

- Legal and Ethical Considerations: Understanding the legal framework surrounding digital forensics, including search warrants, admissibility of evidence, and data privacy regulations.

- Software and Tools: Familiarity with common forensic software and tools (e.g., EnCase, FTK, Autopsy). Demonstrating practical experience with at least one major tool is highly beneficial.

- Problem-Solving and Critical Thinking: Developing a structured approach to analyzing complex datasets and drawing logical conclusions. Practice solving hypothetical forensic scenarios to hone your skills.
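As one way to practice the data carving topic listed above, the sketch below scans a raw byte buffer for JPEG start and end markers and extracts whatever lies between them. It is a deliberately naive illustration: the `carve_jpegs` function and the synthetic buffer are assumptions for this example, and real carving tools also have to cope with fragmentation, embedded thumbnails, and false-positive markers.

```python
JPEG_SOI = b"\xff\xd8\xff"  # start-of-image signature
JPEG_EOI = b"\xff\xd9"      # end-of-image marker

def carve_jpegs(data):
    """Return (offset, bytes) pairs for each SOI...EOI span found in data."""
    carved = []
    pos = 0
    while True:
        start = data.find(JPEG_SOI, pos)
        if start == -1:
            break
        end = data.find(JPEG_EOI, start + len(JPEG_SOI))
        if end == -1:
            break  # header without a footer; a real tool might keep a partial file
        end += len(JPEG_EOI)
        carved.append((start, data[start:end]))
        pos = end
    return carved

# Synthetic buffer standing in for unallocated disk space (not a real image).
fake_jpeg = JPEG_SOI + b"\xe0" + b"payload" + JPEG_EOI
raw = b"\x00" * 16 + fake_jpeg + b"junk" + fake_jpeg + b"\x00" * 8

for offset, blob in carve_jpegs(raw):
    print(f"candidate JPEG at offset {offset}, {len(blob)} bytes")
```

In a real case this would be run against a verified forensic image, never the original media, and each carved candidate would be validated before being treated as evidence.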

Next Steps

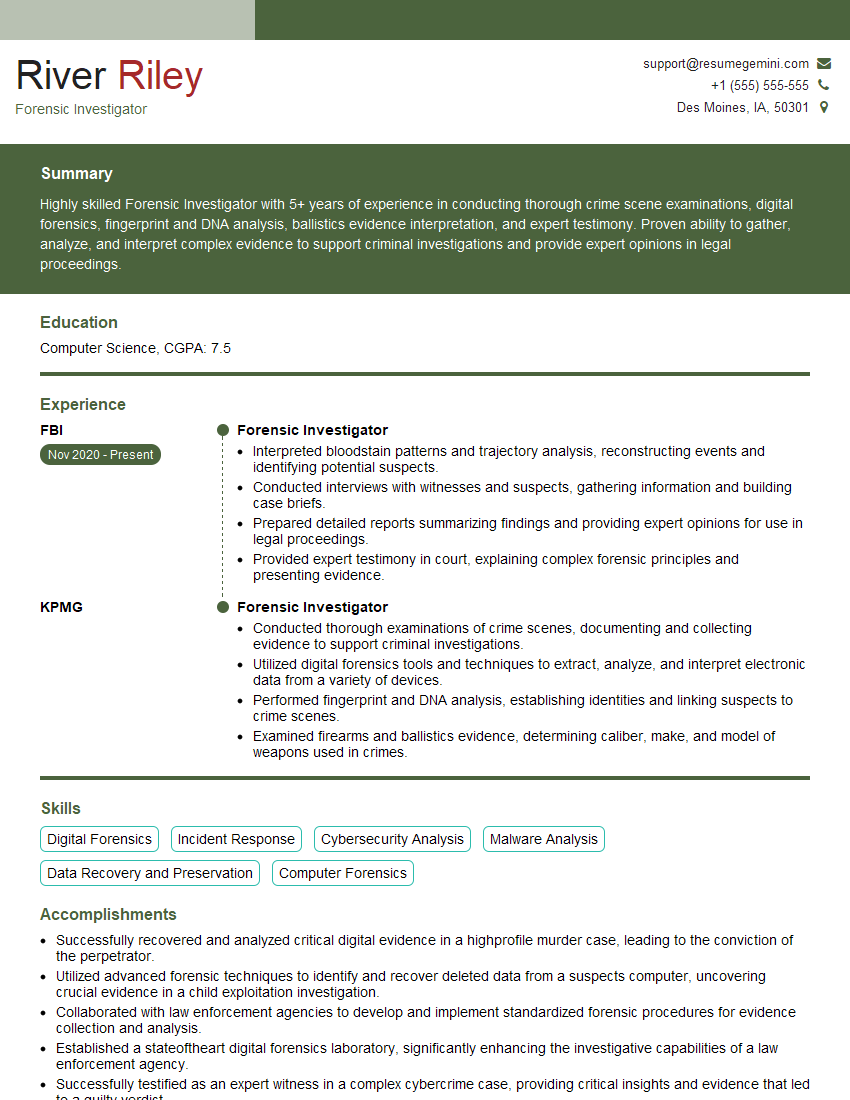

Mastering Forensic Data Analysis opens doors to exciting and impactful careers, offering the opportunity to contribute significantly to investigations and justice. To maximize your job prospects, it’s crucial to create a compelling and ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource to help you build a professional resume that stands out. They provide examples of resumes tailored to Forensic Data Analysis to guide you in showcasing your expertise. Invest the time to craft a strong resume—it’s your first impression with potential employers.
