Are you ready to stand out in your next interview? Understanding and preparing for Big Data and Hadoop interview questions is a game-changer. In this blog, we’ve compiled key questions and expert advice to help you showcase your skills with confidence and precision. Let’s get started on your journey to acing the interview.

Questions Asked in Big Data and Hadoop Interview

Q 1. Explain the Hadoop Distributed File System (HDFS) architecture.

The Hadoop Distributed File System (HDFS) is a highly scalable, distributed storage system designed to store very large datasets across a cluster of commodity hardware. Imagine it as a massive, shared library spread across many computers, each holding a portion of the books. Its architecture is master-slave based, with a single NameNode managing the file system metadata and numerous DataNodes storing the actual data blocks.

Key Architectural Features:

- NameNode: The master server. It maintains the file system namespace, manages metadata (like file location, permissions, and hierarchy), and directs DataNodes on where to store and retrieve data blocks. Think of it as the librarian, knowing where every book is located.

- DataNodes: Slave servers. They store the actual data blocks of the files, divided into chunks. Each DataNode reports its status and block information to the NameNode. These are the shelves holding individual books in our library analogy.

- Data Block Replication: HDFS replicates data blocks across multiple DataNodes to ensure fault tolerance and high availability. If one DataNode fails, the data is still accessible from the replicas. This is like having multiple copies of a crucial book in different locations of the library.

- Hierarchical Namespace: HDFS supports a hierarchical file system, similar to a traditional file system, allowing easy organization of data into directories and subdirectories. This is the structure within the library, organizing books into categories and subcategories.

This distributed architecture allows HDFS to handle petabytes of data efficiently, providing high throughput and scalability. The replication strategy ensures data redundancy and resilience to hardware failures, crucial for big data applications.

Q 2. What are the different components of Hadoop?

Hadoop is not just a single component; it’s an ecosystem of tools working together. The core components are:

- Hadoop Common: A set of utilities and libraries used by other Hadoop modules. It provides fundamental functionalities like logging, configuration management, and data serialization.

- Hadoop Distributed File System (HDFS): The storage system we discussed earlier, responsible for storing and managing large datasets across a cluster.

- Yet Another Resource Negotiator (YARN): The resource manager that allocates computing resources (CPU, memory) to applications running on the Hadoop cluster. It’s the conductor of the orchestra, allocating resources based on needs.

- MapReduce: A programming model and framework for processing large datasets in parallel across a cluster. It’s the worker that does the heavy lifting of processing the data.

- Ecosystem tools (e.g., Hive, Pig, Spark): Higher-level tools that run on top of the core modules, typically on YARN. Strictly speaking these are separate projects rather than core Hadoop modules, but they simplify data manipulation for users and offer diverse functionalities.

These components work together to provide a robust and scalable platform for big data processing. The combination of distributed storage (HDFS), resource management (YARN), and parallel processing (MapReduce) makes Hadoop powerful and suitable for many data-intensive tasks.

Q 3. Describe the MapReduce programming paradigm.

MapReduce is a programming paradigm for processing massive datasets in parallel across a cluster of machines. Imagine you need to count the occurrences of each word in a very large book. MapReduce divides this task into smaller, manageable parts:

1. Map Phase: The input data (the book) is divided into smaller chunks, and each chunk is processed independently by a mapper. Each mapper takes a piece of data, applies a user-defined mapping function, and produces key-value pairs. In our example, each mapper would read a chunk of the book, identify the words, and output key-value pairs like ("word1", 1), ("word2", 1), etc.

2. Shuffle and Sort Phase: The framework collects the intermediate key-value pairs from all mappers and groups them by key (the word). This phase ensures that all occurrences of the same word are grouped together. Think of it as sorting all the word-count pairs alphabetically.

3. Reduce Phase: The grouped key-value pairs are then processed by reducers. Each reducer receives a key and a list of values associated with that key. A user-defined reduce function combines the values. In our example, each reducer would sum up the counts for each word. The output from the reducers is the final result: the total count of each word in the book.

Example (pseudocode):

Map Function:

for each word in input: emit(word, 1);

Reduce Function:

for each word, list of counts: sum = sum(counts); emit(word, sum);

MapReduce’s power lies in its ability to parallelize processing, making it highly efficient for big data.
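The three phases can also be traced in a small single-process Python sketch. This is only an illustration of the paradigm, not Hadoop's API: the function names (`map_phase`, `shuffle_and_sort`, `reduce_phase`) and the sample chunks are assumptions made for the example, and a real job would run mappers and reducers on different machines.

```python
from collections import defaultdict


def map_phase(chunk):
    """Mapper: emit a (word, 1) pair for every word in one input chunk."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle_and_sort(mapped_pairs):
    """Group intermediate values by key and return the groups in key order."""
    groups = defaultdict(list)
    for key, value in mapped_pairs:
        groups[key].append(value)
    return sorted(groups.items())


def reduce_phase(key, values):
    """Reducer: sum the counts collected for one word."""
    return key, sum(values)


def word_count(chunks):
    # Each chunk would be handled by a separate mapper in a real cluster.
    mapped = [pair for chunk in chunks for pair in map_phase(chunk)]
    return dict(reduce_phase(key, values) for key, values in shuffle_and_sort(mapped))


# Hypothetical input: three chunks of the "book".
chunks = ["the quick brown fox", "the lazy dog", "the fox"]
counts = word_count(chunks)
print(counts)
```

Because each mapper only sees its own chunk and each reducer only sees one key's values, both phases can be spread across machines without coordination beyond the shuffle.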

Q 4. What are the advantages and disadvantages of using Hadoop?

Hadoop offers several advantages but also has limitations:

Advantages:

- Scalability: Handles massive datasets that exceed the capacity of single machines. It easily scales horizontally by adding more nodes.

- Cost-effectiveness: Leverages commodity hardware, reducing infrastructure costs compared to proprietary solutions.

- Fault tolerance: Data replication and automatic recovery mechanisms ensure high availability and data protection.

- Open-source: Large community support, readily available resources, and flexibility for customization.

- Flexibility: Supports various data processing frameworks and tools beyond MapReduce.

Disadvantages:

- Complexity: Setting up and managing a Hadoop cluster can be complex, requiring expertise in distributed systems.

- Latency: Processing small datasets might be slower compared to traditional databases due to the overhead of distributing data.

- Limited real-time processing capabilities: While improving, Hadoop is not ideal for applications requiring very low latency, real-time processing.

- Data consistency: Maintaining strong data consistency across a distributed cluster can be challenging.

The choice of Hadoop depends on the specific needs of a project, weighing the benefits of scalability and cost-effectiveness against the complexities of setup and management.

Q 5. Explain the role of NameNode and DataNodes in HDFS.

The NameNode and DataNodes are the two core components of HDFS, working in a master-slave architecture. They have distinct, yet interdependent roles:

NameNode:

- Metadata Management: Stores the metadata of the entire file system, including file and directory names, permissions, block locations, and file sizes. It’s essentially a directory service.

- Namespace Management: Maintains the hierarchical file system namespace. Think of it as the central index of a massive library.

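- Client Requests: Handles client requests for file access (reading and writing) by telling the client which DataNodes hold, or should receive, each block. The data itself flows directly between clients and DataNodes, not through the NameNode.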

- Block Management: Manages the location of data blocks on DataNodes, ensuring data availability and directing client reads and writes.

DataNodes:

- Data Storage: Stores the actual data blocks of files. These are the physical storage units.

- Block Replication: Stores replicated copies of data blocks as instructed by the NameNode, ensuring redundancy and fault tolerance.

- NameNode Communication: Regularly communicates with the NameNode, reporting the status of stored blocks (available, unavailable, corrupted).

- Data Transfer: Transfers data blocks to and from clients as directed by the NameNode.

In a default setup the NameNode is a single point of failure; Hadoop 2 and later support a high-availability configuration with an active and a standby NameNode to mitigate this risk. The interplay between the NameNode and DataNodes ensures the efficient and reliable storage and retrieval of massive datasets in HDFS.
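To make the division of labour concrete, here is a toy in-memory model in Python. It is a sketch under loud assumptions: the classes, the round-robin placement, and the tiny 8-byte block size are all invented for illustration and do not mirror HDFS internals. What it does show is that the NameNode object holds only metadata, while block contents live on the DataNode objects and are read from them directly.

```python
class DataNode:
    """Stores block contents keyed by block ID."""

    def __init__(self, name):
        self.name = name
        self.blocks = {}


class NameNode:
    """Holds only metadata: which blocks make up a file and where they live."""

    def __init__(self):
        self.file_to_blocks = {}   # path -> ordered list of block IDs
        self.block_locations = {}  # block ID -> list of DataNode names


def write_file(namenode, datanodes, path, data, block_size, replication):
    names = sorted(datanodes)
    block_ids = []
    for offset in range(0, len(data), block_size):
        index = offset // block_size
        block_id = f"{path}#blk{index}"
        # Round-robin placement (an assumption); real HDFS is rack-aware.
        targets = [names[(index + r) % len(names)] for r in range(replication)]
        for target in targets:
            datanodes[target].blocks[block_id] = data[offset:offset + block_size]
        namenode.block_locations[block_id] = targets
        block_ids.append(block_id)
    namenode.file_to_blocks[path] = block_ids


def read_file(namenode, datanodes, path, unavailable=()):
    parts = []
    for block_id in namenode.file_to_blocks[path]:
        # Ask the NameNode where the block is, then read from a live DataNode.
        live = [n for n in namenode.block_locations[block_id] if n not in unavailable]
        parts.append(datanodes[live[0]].blocks[block_id])
    return b"".join(parts)


datanodes = {name: DataNode(name) for name in ("dn1", "dn2", "dn3", "dn4")}
namenode = NameNode()
payload = b"hello hdfs, this is a toy file"
write_file(namenode, datanodes, "/logs/a.txt", payload, block_size=8, replication=3)

# The file is still readable with one DataNode down, thanks to the replicas.
restored = read_file(namenode, datanodes, "/logs/a.txt", unavailable={"dn1"})
print(restored == payload)
```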

Q 6. How does HDFS handle data redundancy and fault tolerance?

HDFS achieves data redundancy and fault tolerance through data block replication. When a file is written to HDFS, the file is broken down into multiple data blocks. Each block is then replicated across multiple DataNodes. The number of replicas is configurable (typically 3), and the locations of these replicas are strategically chosen to prevent data loss even in case of multiple node failures.

Mechanism:

- Replication Factor: The number of replicas for each block is set per file. It defaults to the cluster-wide dfs.replication setting (3 unless changed) and can be adjusted after the file has been written. A higher replication factor provides greater fault tolerance but consumes more storage space.

- Replica Placement: The NameNode intelligently places replicas across different racks in the cluster (a rack is a group of servers in the data center). This strategy avoids data loss if an entire rack fails.

- DataNode Heartbeats: DataNodes send periodic heartbeats to the NameNode, reporting their status and block information. If the NameNode doesn’t receive a heartbeat from a DataNode for a certain time, it marks that DataNode as dead.

- Replica Recovery: If a DataNode fails, the NameNode detects the lost blocks and schedules the replication of the missing blocks from the remaining replicas onto new DataNodes. This process is automatic and transparent to the user.

This replication mechanism ensures high availability and fault tolerance, allowing HDFS to withstand hardware failures without data loss. Imagine this as having multiple copies of your important documents stored in different safe places. If one location fails, you still have access to the copies.
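The cost and the recovery step can both be sketched in a few lines of Python. The 128 MB block size is the usual default in Hadoop 2 and later; everything else here (the function names, the block and node IDs, and the "pick the first spare node" rule) is a simplifying assumption rather than HDFS's real placement logic.

```python
import math


def storage_footprint(file_size_mb, block_size_mb=128, replication=3):
    """Return (block count, total replicas, raw MB consumed) for one file."""
    blocks = math.ceil(file_size_mb / block_size_mb)
    # The last block only occupies what it holds, so raw usage is size x replication.
    return blocks, blocks * replication, file_size_mb * replication


def re_replicate(block_locations, live_nodes, dead_node, replication=3):
    """Drop a dead node from every block and copy under-replicated blocks elsewhere."""
    repaired = {}
    for block_id, nodes in block_locations.items():
        survivors = [n for n in nodes if n != dead_node]
        candidates = [n for n in sorted(live_nodes) if n not in survivors]
        missing = max(replication - len(survivors), 0)
        repaired[block_id] = survivors + candidates[:missing]
    return repaired


blocks, replicas, raw_mb = storage_footprint(1024)  # a 1 GB file

# Hypothetical block map before dn1 stops sending heartbeats.
locations = {"blk_1": ["dn1", "dn2", "dn3"], "blk_2": ["dn2", "dn3", "dn4"]}
repaired = re_replicate(locations, live_nodes={"dn2", "dn3", "dn4", "dn5"}, dead_node="dn1")
print(blocks, replicas, raw_mb, repaired)
```

With these inputs, a 1 GB file becomes 8 blocks and 24 replicas occupying 3 GB of raw disk, which is the storage price paid for tolerating failures.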

Q 7. What are the different data formats used in Hadoop?

Hadoop supports various data formats to accommodate different data types and processing needs. Some common formats include:

- Text File (.txt): The simplest format, storing data as plain text. Each line represents a record.

- Comma Separated Values (CSV): A widely used format, with data values separated by commas, suitable for structured data.

- Sequence File: A Hadoop-specific binary format optimized for storage efficiency and fast processing. It’s a key-value pair format, useful for intermediate data in MapReduce jobs.

- Avro: A row-oriented data serialization system that provides schema evolution, making it robust and versatile.

- Parquet: A columnar storage format ideal for analytical queries, offering efficient data access and compression.

- ORC (Optimized Row Columnar): Similar to Parquet, offering efficient compression and query performance for analytical workloads.

The choice of data format depends on factors like data structure, processing requirements, storage space, and query performance. For example, Parquet is preferred for analytical processing due to its columnar storage, while Sequence File is efficient for intermediate data in MapReduce due to its key-value structure. The selection of the right format is critical for optimizing both storage and processing efficiency.

Q 8. Explain the concept of data partitioning in Hadoop.

Data partitioning in Hadoop is the process of dividing a large dataset into smaller, more manageable chunks. Think of it like dividing a massive pizza into slices for easier consumption. This improves processing efficiency by allowing parallel processing of these smaller partitions across multiple nodes in a Hadoop cluster. Each partition is independently processed, significantly reducing the overall processing time.

There are several ways to partition data:

- By Key: Partitions are created based on the values of a specific key field in your data. For instance, you might partition a customer dataset by customerID, ensuring all data for a given customer resides on the same node. This is highly effective for queries focusing on individual customers.

- By Date: This is common in time-series data, where data is partitioned by date (e.g., daily, monthly). This allows efficient processing of data for specific time periods.

- By Range: Partitions can be created based on a numerical range. For example, you might partition a sensor dataset based on temperature ranges (0-20, 21-40, 41-60).

Effective partitioning significantly reduces I/O operations and improves query performance. Choosing the right partitioning strategy is crucial for optimal Hadoop performance, depending on the nature of the data and the type of queries being executed.
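The three strategies boil down to three small functions that map a record to a partition. The following Python sketch is illustrative only: the function names and sample values are assumptions, and real Hadoop jobs express the same idea through a partitioner class or a table's partition columns.

```python
import bisect
import zlib


def hash_partition(key, num_partitions):
    """By key: a stable hash so the same key always maps to the same partition."""
    return zlib.crc32(str(key).encode("utf-8")) % num_partitions


def date_partition(iso_date):
    """By date: derive a monthly partition label from a YYYY-MM-DD string."""
    return iso_date[:7]


def range_partition(value, upper_bounds):
    """By range: index of the first bucket whose inclusive upper bound >= value."""
    return bisect.bisect_left(upper_bounds, value)


customer_partition = hash_partition("customer-42", 8)
month = date_partition("2024-03-15")
# Temperature buckets 0-20, 21-40, 41-60 from the example above.
buckets = [range_partition(t, [20, 40, 60]) for t in (15, 20, 21, 60)]
print(customer_partition, month, buckets)
```

`zlib.crc32` is used instead of Python's built-in `hash` because string hashes are randomized per process, and a partitioner must give the same answer on every node.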

Q 9. What is Hive and how does it simplify querying data in Hadoop?

Hive is a data warehouse system built on top of Hadoop. It provides a SQL-like interface (HiveQL) to query data stored in Hadoop’s distributed file system (HDFS). Imagine it as a user-friendly layer simplifying interaction with Hadoop’s complex underlying architecture. Instead of writing MapReduce jobs in Java, you can use familiar SQL commands to perform complex data analysis.

Hive simplifies querying by:

- Abstraction: It hides the complexities of MapReduce from the user. You don’t need to write MapReduce code; you write SQL-like queries.

- Schema Definition: Hive allows you to define a schema for your data, making it easier to manage and query. This brings structure to the unstructured data in HDFS.

- Optimized Query Execution: Hive compiles and optimizes your queries into jobs for an execution engine: classically MapReduce, and in later versions also Tez or Spark. You get reasonable performance without hand-tuning job code.

For example, instead of writing a complex MapReduce job to count the number of users in a specific city, you can write a simple HiveQL query like: SELECT COUNT(*) FROM users WHERE city = 'New York';

Q 10. How does Pig differ from Hive?

Both Pig and Hive are high-level data processing frameworks for Hadoop, but they differ significantly in their approach and use cases.

- Pig: Uses a scripting language (Pig Latin) which is more flexible and expressive than HiveQL. It is ideal for complex data transformations and algorithms where HiveQL might lack the expressiveness. It’s best suited for scenarios requiring iterative processing or custom algorithms.

- Hive: Uses SQL-like HiveQL, making it more accessible to users familiar with SQL. It’s optimized for data warehousing and analytical queries. It excels at large-scale data analysis and reporting tasks.

Essentially, if you need the flexibility of a scripting language and complex data manipulation, Pig is the better choice. If you need SQL-like ease of use and are focused on analytical queries, Hive is the better option. One might even use both in a single workflow, leveraging Pig for data preparation and transformation and Hive for analytical querying.

Q 11. What is Spark and how does it improve upon MapReduce?

Spark is a unified analytics engine for large-scale data processing. It is often significantly faster than MapReduce, especially for iterative and interactive workloads, because it can keep intermediate data in memory. MapReduce writes intermediate results to disk between stages, whereas Spark keeps them in memory where possible, which sharply reduces I/O. Imagine the difference between writing calculations on a whiteboard (Spark) versus writing and rewriting them on paper (MapReduce). The former is much faster.

Spark improves upon MapReduce by:

- In-Memory Processing: It stores intermediate data in memory, leading to significantly faster processing.

- Fault Tolerance: It’s resilient to node failures, automatically recomputing lost data from the lineage of operations that produced it.

- Rich API: It offers APIs in various languages (Scala, Java, Python, R), enabling flexibility and ease of use.

- Unified Platform: It supports various data processing tasks, including batch processing, streaming, machine learning, and graph processing, all within a single framework.

Real-world example: Processing massive sensor data streams in near real time for anomaly detection. Spark’s in-memory processing and streaming capabilities allow low-latency responses, whereas batch-oriented MapReduce would be far too slow for such a scenario.

Q 12. Explain the different types of joins in Spark.

Spark offers various join types, similar to traditional SQL databases, enabling you to combine data from different datasets based on common keys.

- Inner Join: Returns only the rows where the join condition is met in both datasets. This is the most commonly used join type.

- Left (Outer) Join: Returns all rows from the left dataset and matching rows from the right dataset. If there is no match in the right dataset, it will include null values for the right dataset’s columns.

- Right (Outer) Join: Returns all rows from the right dataset and matching rows from the left dataset. If there’s no match in the left dataset, it will include null values for the left dataset’s columns.

- Full (Outer) Join: Returns all rows from both datasets, regardless of whether there’s a match in the other dataset. If there’s no match, it will include null values for the missing columns.

The choice of join type depends on the specific data analysis requirement. For example, an inner join is appropriate when you only need data where both datasets have matching records; a left join is useful when you want all records from one dataset, even if there is no matching record in the other.
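The semantics of the four join types are easy to check on a tiny example. The sketch below is plain Python rather than Spark code, and the `join` helper and sample data are assumptions made for illustration. In Spark itself the same choice is made through the `how` argument of a DataFrame join (for example `"inner"`, `"left"`, `"right"`, `"full"`).

```python
def join(left, right, how="inner"):
    """Join two lists of (key, value) pairs; None marks a missing side."""
    left_keys = {key for key, _ in left}
    rows = []
    for key, left_value in left:
        matches = [right_value for right_key, right_value in right if right_key == key]
        if matches:
            rows.extend((key, left_value, right_value) for right_value in matches)
        elif how in ("left", "full"):
            # Keep the unmatched left row, padding the right side with None.
            rows.append((key, left_value, None))
    if how in ("right", "full"):
        # Keep unmatched right rows, padding the left side with None.
        rows.extend((key, None, value) for key, value in right if key not in left_keys)
    return rows


users = [(1, "Ana"), (2, "Ben"), (3, "Cy")]
orders = [(1, "book"), (3, "pen"), (4, "ink")]

inner = join(users, orders, "inner")
left = join(users, orders, "left")
right = join(users, orders, "right")
full = join(users, orders, "full")
print(inner, left, right, full, sep="\n")
```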

Q 13. What are RDDs in Spark and how are they used?

RDDs (Resilient Distributed Datasets) are the fundamental data structure in Spark. They represent a collection of elements partitioned across a cluster of machines. Think of them as distributed arrays, where each partition (a slice of the elements) is held on a different node of the cluster. This partitioning allows parallel processing. Each RDD is immutable, meaning once created, it cannot be modified; instead, new RDDs are created from transformations.

RDDs are used for:

- Parallel Processing: The distributed nature of RDDs enables parallel operations on large datasets, drastically improving processing speed.

- Fault Tolerance: RDDs are fault-tolerant. Each RDD records the lineage of transformations that produced it, so if a node fails, Spark can recompute the lost partitions from the source data rather than relying on replicated copies.

- Lazy Evaluation: Spark applies transformations lazily, meaning the computations are only performed when an action (like collect() or count()) is called on the RDD. This improves efficiency.

Example: If you have a large text file, you can create an RDD of lines from that file and then apply various transformations (like word counting or filtering) in parallel on each partition of the RDD.
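Immutability and lazy evaluation can be demonstrated without a cluster. The following is a toy stand-in written in plain Python, not the Spark API: the `LazyDataset` class and its methods are hypothetical, chosen to mirror the names `map`, `filter`, `collect`, and `count`. Transformations only record a step, and nothing runs until an action is called.

```python
class LazyDataset:
    """Toy stand-in for an RDD: immutable, transformations are recorded, not run."""

    def __init__(self, source, steps=()):
        self._source = source
        self._steps = tuple(steps)  # the recorded steps act as the lineage

    def map(self, fn):
        return LazyDataset(self._source, self._steps + (("map", fn),))

    def filter(self, fn):
        return LazyDataset(self._source, self._steps + (("filter", fn),))

    def _run(self):
        for item in self._source:
            keep = True
            for kind, fn in self._steps:
                if kind == "map":
                    item = fn(item)
                elif not fn(item):  # a filter step rejected the item
                    keep = False
                    break
            if keep:
                yield item

    def collect(self):
        return list(self._run())

    def count(self):
        return sum(1 for _ in self._run())


calls = []


def length(line):
    calls.append(line)  # record every time the function really executes
    return len(line)


lines = LazyDataset(["spark", "hadoop", "hive", "pig"])
long_lengths = lines.map(length).filter(lambda n: n > 4)
calls_before_action = len(calls)  # still zero: nothing has run yet
result = long_lengths.collect()   # the action triggers the computation
print(calls_before_action, result)
```

Because every dataset keeps the full list of steps that produced it, a lost result can always be rebuilt by replaying those steps, which is the idea behind lineage-based recovery.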

Q 14. Describe the different storage levels in Spark.

Spark offers multiple storage levels to manage the data in memory, balancing memory usage with processing speed. Choosing the right storage level is critical for optimizing performance.

- MEMORY_ONLY: Stores the RDD in memory only. It is the fastest option when the data fits; partitions that do not fit are not cached and are recomputed each time they are needed, which can erase the benefit. Suitable for smaller datasets that fit comfortably within available memory.

- MEMORY_AND_DISK: Stores the RDD in memory, and if memory is full, spills to disk. Provides a balance between speed and memory usage, handling datasets larger than available RAM.

- MEMORY_ONLY_SER: Similar to MEMORY_ONLY but serializes the data before storing it, reducing memory footprint but increasing processing overhead due to deserialization.

- MEMORY_AND_DISK_SER: Similar to MEMORY_AND_DISK but serializes data, offering a trade-off between speed and memory usage. This is suitable for datasets with varied data sizes where serialization could reduce memory footprint.

- DISK_ONLY: Stores the RDD only on disk. Slowest but most memory-efficient, suitable for datasets significantly exceeding the available RAM.

The choice of storage level depends on the size of the dataset, the available memory, and the performance requirements. Experimentation is often needed to determine the optimal storage level for a given workload.

Q 15. What is YARN and what is its role in Hadoop?

YARN, or Yet Another Resource Negotiator, is the resource management system in Hadoop 2.0 and later versions. Think of it as the operating system for your Hadoop cluster. Before YARN, Hadoop’s MapReduce was responsible for both processing jobs and managing resources. This limited scalability and flexibility. YARN separates these two functions, allowing for greater efficiency and the execution of different types of applications beyond just MapReduce, such as Spark, Hive, and Pig.

YARN works by having a ResourceManager, which allocates cluster resources (CPU, memory, etc.) to different applications. Each application has an ApplicationMaster, which is responsible for managing the execution of that specific application’s tasks on the cluster’s NodeManagers. NodeManagers are essentially the workers, running tasks on individual nodes. This architecture allows for multiple applications to run concurrently on the same cluster, maximizing resource utilization. For example, you could have a Spark job running alongside a MapReduce job simultaneously, each efficiently using available resources without interfering with the other.
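The allocation idea can be sketched as a toy in Python. This is not how YARN's schedulers actually decide (real clusters use pluggable policies such as the Capacity or Fair scheduler); the first-fit rule, the `allocate` function, and the node and application names are all assumptions for illustration. It only shows containers from different applications sharing the same NodeManagers until memory runs out.

```python
def allocate(node_free_mb, requests):
    """First-fit: grant each (app, memory_mb) request on the first node with room."""
    free = dict(node_free_mb)
    grants, pending = [], []
    for app, memory_mb in requests:
        node = next((n for n in sorted(free) if free[n] >= memory_mb), None)
        if node is None:
            pending.append((app, memory_mb))  # must wait for resources to free up
        else:
            free[node] -= memory_mb
            grants.append((app, node, memory_mb))
    return grants, pending, free


# Hypothetical cluster: two NodeManagers and two applications competing for memory.
nodes = {"nm1": 8192, "nm2": 4096}
requests = [("spark-job", 4096), ("mr-job", 4096), ("spark-job", 4096), ("mr-job", 2048)]
grants, pending, free = allocate(nodes, requests)
print(grants, pending, free)
```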

Q 16. Explain the concept of data serialization in Hadoop.

Data serialization in Hadoop is the process of converting structured or semi-structured data into a byte stream for efficient storage and transmission across the network. Imagine sending a package: you need to package it carefully to protect its contents during transit. Similarly, serialization prepares data for reliable movement and storage within the distributed Hadoop environment.

Popular serialization formats in Hadoop include Avro, Parquet, and ORC. Avro is a row-oriented format that’s schema-based, meaning it stores data along with its schema, allowing for self-describing data. Parquet and ORC are columnar formats optimized for analytical queries, offering significantly better performance for queries that access only a subset of columns compared to row-based formats like Avro. The choice of format depends on the specific use case; for example, Parquet or ORC are generally preferred for analytical processing, whereas Avro may be more suitable for data that needs to be easily read and written by diverse systems.

Choosing the right serialization format impacts read and write performance, storage efficiency, and the overall efficiency of your Hadoop applications. Poorly chosen formats can lead to inefficient storage and slow query response times.
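The row-versus-column trade-off is visible even at toy scale. In the Python sketch below, JSON lines stand in for a row-oriented format and a packed array of doubles stands in for one column of a columnar format. These are stand-ins chosen for illustration, not the actual Avro, Parquet, or ORC encodings, and the sample records are made up.

```python
import json
import struct

records = [
    {"user_id": 1, "city": "New York", "amount": 20.5},
    {"user_id": 2, "city": "Boston", "amount": 7.25},
    {"user_id": 3, "city": "New York", "amount": 13.0},
]

# Row-oriented: each record is serialized whole, one after another.
row_bytes = b"\n".join(json.dumps(r, sort_keys=True).encode("utf-8") for r in records)

# Column-oriented: the values of each field are stored together.
columns = {name: [r[name] for r in records] for name in records[0]}
amount_bytes = struct.pack(f"<{len(records)}d", *columns["amount"])

# A query touching only `amount` decodes one column, not every record.
amounts = struct.unpack(f"<{len(records)}d", amount_bytes)
total = sum(amounts)

# Deserializing the row-oriented bytes recovers the original records.
decoded = [json.loads(line) for line in row_bytes.split(b"\n")]
print(total, len(row_bytes), len(amount_bytes))
```

Summing `amount` reads 24 bytes from the column layout, whereas the row layout forces every full record to be parsed first, which is why columnar formats favour analytical queries.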

Q 17. How do you handle data skew in MapReduce?

Data skew in MapReduce occurs when one or a few reducers receive significantly more data than others, leading to a major imbalance and slowing down the entire job. Think of a restaurant where all the customers crowd around a few tables while others are empty. It’s inefficient and leads to delays.

Several strategies can mitigate data skew:

- Input Data Partitioning: Instead of relying on Hadoop’s default hash partitioning, you can implement custom partitioning logic to distribute data more evenly across reducers. This might involve salting, where you append a random suffix to a hot key so its records spread over several reducers, then strip the suffix and merge the partial results in a second pass.

- Combiners: Combiners perform a local aggregation of the data before sending it to the reducers, reducing the overall volume of data processed by each reducer. This prevents a single reducer from being overwhelmed.

- Multiple Reducers: Increasing the number of reducers can distribute the load more evenly, but excessively increasing the number can lead to overhead.

- Data Sampling and Repartitioning: Analyze data distribution beforehand and employ techniques to pre-partition data based on frequency or size of keys before it enters the MapReduce job.

The best approach depends on the specifics of your data and application. For instance, if you identify specific keys causing the skew, you might use custom partitioning to distribute their associated data across multiple reducers.
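Salting is the least obvious of these techniques, so here is a minimal Python sketch of it. It assumes a simple counting job; the function names, the `#` separator, and the number of salts are illustrative choices, not a Hadoop API. The first stage counts per salted key, and the second stage strips the salt and merges the partial counts.

```python
import random
from collections import Counter, defaultdict


def salt_key(key, num_salts, rng):
    """Append a random salt so one hot key spreads over several reducers."""
    return f"{key}#{rng.randrange(num_salts)}"


def two_stage_count(keys, num_salts=4, seed=0):
    rng = random.Random(seed)
    # Stage 1: partial counts per salted key (each could go to a different reducer).
    partial = Counter(salt_key(k, num_salts, rng) for k in keys)
    # Stage 2: strip the salt and merge partial counts into final totals.
    totals = defaultdict(int)
    for salted, count in partial.items():
        totals[salted.rsplit("#", 1)[0]] += count
    return dict(totals), partial


# Hypothetical skewed input: one key dominates the dataset.
keys = ["hot"] * 1000 + ["cold"] * 10
totals, partial = two_stage_count(keys)
print(totals)
print(max(partial.values()))  # the largest single share is well below 1000
```

The price of salting is the extra aggregation pass, so it is usually worth applying only to the keys known to be hot.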

Q 18. What are the different types of data you’ve worked with in Big Data?

In my Big Data experience, I’ve worked with a wide variety of data types, including:

- Structured Data: This is data organized in a predefined format, such as relational databases (MySQL, PostgreSQL), CSV files, or JSON with a consistent schema. These are easy to query and analyze.

- Semi-structured Data: This data lacks a rigid schema but has some structure, such as JSON or XML. The structure might vary slightly from record to record, which adds complexity but also flexibility.

- Unstructured Data: This is data without a defined structure, like text files, images, audio, and video. Analyzing this data often requires specialized techniques like natural language processing (NLP) or computer vision.

- Log Data: I’ve extensively worked with server logs, application logs, and clickstream data – valuable for monitoring, debugging, and business intelligence.

- Sensor Data: Real-time data streams from IoT devices are a growing area where I have experience dealing with both volume and velocity.

The ability to handle diverse data types is crucial in Big Data, requiring expertise in various technologies and techniques to extract meaningful insights.

Q 19. Explain your experience with ETL processes.

ETL (Extract, Transform, Load) is the backbone of any data warehousing or data lake project. I’ve extensively utilized ETL processes in several projects, employing both traditional and cloud-based ETL tools. In one project, we extracted data from various sources—including relational databases, flat files, and APIs—transformed it by cleaning, enriching, and converting it into a standardized format, and then loaded it into a Hadoop-based data warehouse.

My experience includes:

- Data Extraction: Using tools like Sqoop for database extraction, Flume for streaming data ingestion, and custom scripts for handling various data formats.

- Data Transformation: Employing Apache Spark for complex transformations, including data cleaning, deduplication, and data enrichment using external data sources. I’ve also leveraged scripting languages like Python to handle custom transformations.

- Data Loading: Loading the transformed data into various targets, such as Hive tables, HBase, and cloud storage (AWS S3, Azure Blob Storage). I have experience optimizing load performance by parallelizing the load process and optimizing data formats for efficient storage.

I understand the importance of error handling, logging, and monitoring throughout the ETL process to ensure data quality and reliability.

Q 20. Describe your experience with data warehousing and data lakes.

Data warehousing and data lakes represent different approaches to storing and managing big data. Data warehousing focuses on structured data, often modeled around a star schema for efficient querying. Data lakes, on the other hand, store raw data in its native format, offering greater flexibility but requiring more effort for data discovery and access.

I have experience building and managing both. In one project, we built a data warehouse on top of Hive, using Impala for fast querying and visualization. The warehouse provided a structured view of our business data, perfect for operational reporting and business intelligence. In another project, we implemented a data lake on AWS S3, storing various data formats – structured, semi-structured, and unstructured – for exploratory data analysis and machine learning. Access to the data lake was handled via Spark, allowing us to conduct complex analytics on the raw data. This demonstrated the importance of having both structured and unstructured storage based on project needs.

Choosing between a data warehouse and a data lake depends on the specific requirements and use cases. Often, a hybrid approach combining the strengths of both proves most beneficial.

Q 21. How do you ensure data quality in a Big Data environment?

Ensuring data quality in a Big Data environment is paramount. Inaccurate or incomplete data leads to flawed analyses and poor business decisions. My approach involves a multi-faceted strategy:

- Data Profiling: At the initial stage, I conduct comprehensive data profiling to understand data characteristics, identify inconsistencies, and assess data quality metrics (completeness, accuracy, consistency, validity, timeliness). Tools like Apache Griffin are helpful for this.

- Data Cleansing: Implementing data cleansing procedures to handle missing values, outliers, and inconsistent data formats. This often involves custom scripting and data transformation techniques within the ETL process.

- Data Validation: Defining clear data validation rules and applying them during the ETL process to ensure data quality before loading it into the target systems. This may involve using constraint checks within databases or custom validation scripts.

- Data Monitoring: Establishing continuous data quality monitoring using dashboards and alerts. This ensures timely identification and resolution of data quality issues. Tools such as Apache Atlas can be helpful in building a data governance framework.

- Data Governance Framework: Implementing a data governance framework that defines roles, responsibilities, and processes for data quality management. This ensures a systematic approach to data quality across the organization.

Proactive data quality management is essential. Reacting to data issues after they’ve impacted business decisions is far less effective than building preventative measures into the data pipeline from the start.
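As a small illustration of validation rules applied inside a pipeline, the following Python sketch checks each record against named rules and computes a completeness metric. The rule names, fields, and sample records are hypothetical; a production pipeline would typically run equivalent checks in Spark or a dedicated data quality tool.

```python
def validate(record, rules):
    """Return the names of all rules the record violates."""
    return [name for name, check in rules.items() if not check(record)]


# Hypothetical validation rules for a user dataset.
rules = {
    "user_id_present": lambda r: r.get("user_id") is not None,
    "age_in_range": lambda r: isinstance(r.get("age"), int) and 0 <= r["age"] <= 120,
    "email_has_at": lambda r: "@" in (r.get("email") or ""),
}

records = [
    {"user_id": 1, "age": 34, "email": "a@example.com"},
    {"user_id": None, "age": 34, "email": "b@example.com"},
    {"user_id": 3, "age": 250, "email": "not-an-email"},
]

# Map each record index to the rules it breaks; an empty list means clean.
report = {i: validate(r, rules) for i, r in enumerate(records)}
clean = [r for i, r in enumerate(records) if not report[i]]

# Completeness: share of records with a non-null user_id.
completeness = sum(r["user_id"] is not None for r in records) / len(records)
print(report, completeness)
```

Keeping the rules as named entries makes it straightforward to log which rule failed for each rejected record, which feeds directly into the monitoring step.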

Q 22. What are some common performance bottlenecks in Hadoop clusters?

Hadoop cluster performance bottlenecks can stem from various sources, often intertwined. Imagine a highway system – if one section is congested, the whole system slows down. Similarly, issues in one area of a Hadoop cluster can significantly impact overall performance.

Network Bottlenecks: Slow network speeds between nodes, insufficient bandwidth, or high network latency are major culprits. Data transfer between NameNodes, DataNodes, and clients is crucial; slow transfers severely impact job execution. Think of this as the highway’s speed limit – a low limit slows everything down.

Disk I/O Bottlenecks: Slow hard drives on DataNodes are a common problem. If nodes can’t read or write data fast enough, MapReduce jobs will be delayed. This is like having a traffic jam at a highway toll booth – vehicles (data) pile up.

Resource Bottlenecks: Insufficient CPU, memory (RAM), or insufficient number of nodes can lead to long processing times. Imagine this as having too few lanes on the highway for the volume of traffic.

NameNode Bottlenecks: The NameNode’s metadata management is critical. If it struggles to handle requests or becomes overloaded, the entire cluster slows. This is like the central control center of the highway system becoming overwhelmed.

Data Skew: Uneven data distribution across DataNodes results in some nodes working much harder than others, leading to imbalance and delays. Think of this as a highway having only one major route, causing congestion.

Configuration Issues: Incorrectly configured Hadoop parameters, like HDFS block size or MapReduce job settings, can drastically hinder performance. This is like having poorly designed highway systems without proper routing.

Identifying and resolving these bottlenecks often involves careful monitoring, performance tuning, and potentially upgrading hardware or optimizing data distribution.

Q 23. How do you monitor and troubleshoot Hadoop clusters?

Monitoring and troubleshooting Hadoop clusters requires a multi-faceted approach, combining tools and best practices. Think of it as being a mechanic for a complex machine – you need the right tools and knowledge to diagnose the problem.

Resource Monitoring Tools: Tools like Ganglia, Nagios, and Ambari provide real-time monitoring of CPU, memory, disk I/O, and network usage on each node. They provide a dashboard view of cluster health and performance.

Hadoop Ecosystem Tools: YARN (Yet Another Resource Negotiator) provides metrics on resource utilization and job progress. HDFS provides commands to check disk space, replication factors, and other file system metrics.

Log Analysis: Examining the logs from NameNode, DataNodes, and job trackers is critical for debugging. Errors, warnings, and slow operations often leave clues in the logs. Tools like Logstash and Elasticsearch can help centralize and analyze these logs.

Profiling Tools: Tools like JProfiler can help identify performance bottlenecks within the MapReduce jobs themselves, indicating slow functions or inefficient data processing.

Troubleshooting typically involves analyzing the above metrics, identifying unusual patterns (e.g., high CPU on specific nodes, slow I/O), and then digging into the logs to identify the root cause. For example, seeing high CPU usage and slow I/O might point to a problem with either the hard drive or a specific task in your MapReduce job. Addressing these issues then involves optimization, reconfiguration, or hardware upgrades.
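The "identify unusual patterns" step can be sketched in a few lines. This is an illustrative example only: the node names and I/O-wait percentages are invented, `flag_outlier_nodes` is a hypothetical helper, and the "more than twice the cluster median" rule is an assumed threshold that a real cluster would need to tune.

```python
from statistics import median


def flag_outlier_nodes(metrics: dict, factor: float = 2.0) -> list:
    """Return nodes whose metric exceeds `factor` times the cluster median."""
    typical = median(metrics.values())
    return sorted(node for node, value in metrics.items() if value > factor * typical)


# Hypothetical per-node I/O wait percentages, e.g. exported from a monitoring tool.
io_wait_percent = {
    "dn01": 4.0,
    "dn02": 5.5,
    "dn03": 31.0,
    "dn04": 4.8,
    "dn05": 6.1,
}

print(flag_outlier_nodes(io_wait_percent))  # -> ['dn03']
```

Comparing each node against the median rather than the mean keeps a single badly behaving node from shifting the baseline it is being measured against. The flagged nodes are then the first place to read logs.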

Q 24. What is your experience with various NoSQL databases?

My experience encompasses several NoSQL databases, each suited for different data models and use cases. Think of them as specialized tools in a toolbox – each has its strengths and weaknesses.

MongoDB: I have extensive experience with MongoDB, a document-oriented database. Its flexible schema and scalability make it ideal for handling semi-structured data and rapid prototyping. I’ve used it in projects requiring high-velocity data insertion and flexible querying.

Cassandra: I’ve worked with Cassandra, a wide-column store database known for its high availability and scalability. Its distributed nature makes it perfect for handling massive datasets with high write throughput, often in situations where eventual consistency is acceptable. I used this in a project with extremely high volume transactional data.

HBase: As a column-oriented database built on top of HDFS, HBase offers strong integration with the Hadoop ecosystem. Its capabilities in handling massive datasets and random access to rows make it suited for read/write-heavy applications and real-time analytics. I integrated HBase with several Hadoop projects requiring fast lookups.

Redis: I’ve used Redis for in-memory data caching. Its speed and performance significantly boost applications that require frequent data access. I’ve implemented it to improve query performance in several large scale projects.

The choice of NoSQL database depends heavily on the specific requirements of the project – the data structure, query patterns, scalability needs, and consistency requirements are all crucial factors.
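The caching use case mentioned for Redis usually follows the cache-aside pattern: check the cache first, fall back to the slower store on a miss, then populate the cache. The sketch below is a hedged illustration that uses an in-memory dictionary with expiry as a stand-in for Redis, so it runs without a server. `TTLCache`, `cached_lookup`, and `slow_database_lookup` are hypothetical names, not part of any Redis client.

```python
import time


class TTLCache:
    """Tiny in-memory stand-in for a key-value cache with per-entry expiry."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._store[key]  # entry is stale, treat it as a miss
            return None
        return value

    def set(self, key, value):
        self._store[key] = (value, self._clock() + self._ttl)


def cached_lookup(cache: TTLCache, key, load_from_database):
    """Cache-aside: return the cached value, or load it and cache it on a miss."""
    value = cache.get(key)
    if value is None:
        value = load_from_database(key)
        cache.set(key, value)
    return value


def slow_database_lookup(user_id):
    """Hypothetical expensive query; here it just builds a record."""
    return {"id": user_id, "name": f"user-{user_id}"}


cache = TTLCache(ttl_seconds=60)
first = cached_lookup(cache, 42, slow_database_lookup)   # miss: hits the database
second = cached_lookup(cache, 42, slow_database_lookup)  # hit: served from the cache
print(first == second)
```

In a real deployment the `get` and `set` calls would go to Redis through a client library, and the expiry time would be chosen based on how stale the cached data is allowed to be.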

Q 25. Describe your experience with cloud platforms like AWS, Azure, or GCP for Big Data solutions.

I have significant experience leveraging cloud platforms like AWS, Azure, and GCP for Big Data solutions. These platforms offer managed services that significantly simplify the deployment and management of Hadoop clusters. Think of them as pre-built garages for your car (Hadoop) instead of having to build your own from scratch.

AWS: I’ve extensively used AWS services like EMR (Elastic MapReduce), S3 (Simple Storage Service), and Redshift for managing Hadoop clusters, storing data, and performing data warehousing. EMR’s ease of cluster creation and management greatly improved our workflow.

Azure: I have experience using Azure HDInsight, Azure Data Lake Storage, and Azure Synapse Analytics for similar Big Data tasks. Azure’s integration with other Azure services provided a seamless data pipeline.

GCP: My experience with GCP includes using Dataproc, Cloud Storage, and BigQuery. GCP’s focus on serverless computing options made some projects more efficient in terms of cost.

Each cloud provider offers advantages in terms of pricing, features, and integration with other services. The best choice depends on the specific project requirements, existing infrastructure, and budget constraints. I am proficient in utilizing the strengths of each platform to optimize performance and cost-efficiency for specific projects.

Q 26. Explain your experience with data security and privacy in a Big Data environment.

Data security and privacy are paramount in any Big Data environment. Think of it as protecting a valuable treasure – multiple layers of security are necessary.

Access Control: Implementing robust access control mechanisms, using tools like Kerberos and Ranger, is essential. This ensures that only authorized users can access sensitive data.

Data Encryption: Encrypting data at rest (on disk) and in transit (during network transfers) is crucial to protect against unauthorized access. We use tools like HDFS encryption and SSL/TLS.

Data Masking and Anonymization: Techniques like data masking and anonymization are employed to protect sensitive information while still allowing for analysis. For example, we might replace real names with pseudonyms.

Compliance: Adherence to regulations like GDPR, CCPA, and HIPAA is vital depending on the nature of the data being processed. This often involves implementing data governance policies and procedures.

Auditing: Regular auditing of access logs, system configurations, and data flows is necessary to detect and respond to security breaches.

In my experience, a proactive approach to security, incorporating these measures from the project’s inception, is far more effective than trying to retrofit security afterward.
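As a small illustration of the masking and pseudonymization point, the sketch below replaces names with stable pseudonyms using a keyed hash (HMAC-SHA256) from the Python standard library, and partially masks email addresses. It is one possible approach under stated assumptions, not the only one: `pseudonymize` and `mask_email` are hypothetical helpers, the `user_` prefix is arbitrary, and the secret key shown is a placeholder that would have to come from a secrets manager in practice.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes, length: int = 16) -> str:
    """Map a value to a stable pseudonym; the same input and key give the same output."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "user_" + digest[:length]


def mask_email(email: str) -> str:
    """Keep the first character and the domain, hide the rest of the local part."""
    local, _, domain = email.partition("@")
    if not local or not domain:
        return "***"
    return local[0] + "***@" + domain


# Placeholder key for illustration only; never hard-code a real key.
SECRET_KEY = b"replace-with-a-key-from-a-secrets-manager"

record = {"name": "Alice Example", "email": "alice@example.com", "purchases": 7}
safe_record = {
    "name": pseudonymize(record["name"], SECRET_KEY),
    "email": mask_email(record["email"]),
    "purchases": record["purchases"],
}
print(safe_record["email"])  # -> a***@example.com
```

Because the pseudonym is deterministic for a given key, records belonging to the same person can still be joined and counted during analysis, while anyone without the key cannot recompute the mapping from a list of candidate names.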

Q 27. What are your preferred tools and technologies for Big Data development?

My preferred tools and technologies are chosen based on the specific project requirements and the overall architecture. However, several tools are consistently valuable in my workflow.

Programming Languages: Java, Python, and Scala are my go-to languages for Big Data development, offering different strengths for different tasks. Java is often used for core Hadoop development, Python for scripting and data analysis, and Scala for Spark applications.

Frameworks: Apache Spark and Hadoop MapReduce are crucial for large-scale data processing. Spark’s speed and ease of use are often preferred for iterative algorithms and real-time analytics, while Hadoop MapReduce remains powerful for batch processing tasks.

Databases: As mentioned earlier, my experience spans multiple NoSQL databases (MongoDB, Cassandra, HBase, Redis) alongside SQL databases like PostgreSQL and MySQL for specific tasks. I select the database based on the data’s structure and required performance.

Cloud Platforms: I’m comfortable with AWS, Azure, and GCP, selecting the most cost-effective and suitable platform for each project.

Data Visualization Tools: Tools like Tableau and Power BI are essential for presenting insights derived from Big Data analysis in a clear and concise manner.

The right combination of tools is key to efficient and effective Big Data development.

Q 28. Describe a challenging Big Data project you worked on and how you overcame the challenges.

One challenging project involved processing and analyzing terabytes of real-time sensor data from a large manufacturing facility. The challenge was threefold: (1) the high velocity of incoming data, (2) the need for real-time anomaly detection, and (3) the requirement for low latency responses.

We overcame these challenges using a multi-pronged approach:

Apache Kafka: We used Kafka as a high-throughput streaming platform to handle the constant influx of sensor data. Its durable, replicated log buffered bursts of incoming data and let consumers resume from their last position after a failure, which guarded against data loss.

Apache Spark Streaming: Spark Streaming was used to process the data streams in near real time. Its micro-batch model enabled us to perform calculations quickly.

Machine Learning Models: We developed machine learning models (using Python and libraries like scikit-learn) to identify anomalies in the sensor data. These models were trained on historical data and deployed within the Spark Streaming pipeline.

Data Visualization Dashboard: A real-time dashboard (using tools like Grafana) was developed to display critical metrics and detected anomalies, allowing for immediate intervention.

This project highlighted the importance of choosing the right technology stack for a given problem and underscored the power of combining streaming technologies, machine learning, and real-time data visualization. Its success demonstrated our ability to work under pressure and innovate to meet stringent performance requirements.
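To make the anomaly detection step concrete, here is a deliberately simplified sketch. It is not the model described above: instead of Spark Streaming and scikit-learn it uses plain Python and a rolling z-score, and `RollingZScoreDetector`, the window size, and the threshold of 3 standard deviations are all illustrative assumptions. It processes readings one micro-batch at a time and flags values far from the recent baseline.

```python
from collections import deque
from statistics import mean, pstdev


class RollingZScoreDetector:
    """Flag readings that deviate strongly from a rolling window of recent values."""

    def __init__(self, window_size: int = 50, threshold: float = 3.0, min_history: int = 10):
        self.history = deque(maxlen=window_size)
        self.threshold = threshold
        self.min_history = min_history

    def process_batch(self, readings) -> list:
        """Return the anomalous readings found in one micro-batch."""
        anomalies = []
        for value in readings:
            if len(self.history) >= self.min_history:
                baseline = mean(self.history)
                spread = pstdev(self.history)
                if spread > 0 and abs(value - baseline) / spread > self.threshold:
                    anomalies.append(value)
                    continue  # keep outliers out of the baseline window
            self.history.append(value)
        return anomalies


detector = RollingZScoreDetector()

# Hypothetical temperature readings arriving in two micro-batches.
warm_up_batch = [20.0, 20.5, 19.8, 20.2, 20.1, 19.9, 20.3, 20.0, 19.7, 20.4]
next_batch = [20.1, 35.0, 19.9]

print(detector.process_batch(warm_up_batch))  # -> []
print(detector.process_batch(next_batch))     # -> [35.0]
```

The first batch only builds the baseline, so nothing is flagged until `min_history` readings have been seen. A production pipeline would keep one detector state per sensor and would typically replace the z-score rule with a trained model.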

Key Topics to Learn for Big Data and Hadoop Interview

Landing your dream Big Data and Hadoop role requires a solid understanding of core concepts and their practical applications. Focus your preparation on these key areas:

- Hadoop Ecosystem Components: Deep dive into HDFS (Hadoop Distributed File System), YARN (Yet Another Resource Negotiator), MapReduce, and their functionalities. Understand the strengths and limitations of each component.

- Data Processing Frameworks: Explore frameworks like Spark, Hive, and Pig. Learn their differences, use cases, and how they interact within the Hadoop ecosystem. Practice writing basic scripts or queries in at least one of these frameworks.

- Data Modeling and Warehousing: Grasp the principles of data modeling for Big Data, including schema-on-read and schema-on-write approaches. Understand the role of data warehousing in the Big Data lifecycle and different types of data warehouses.

- NoSQL Databases: Familiarize yourself with various NoSQL database types (e.g., Cassandra, MongoDB) and their suitability for different Big Data applications. Understand their scalability and performance characteristics.

- Big Data Analytics Techniques: Explore common analytical techniques like machine learning algorithms, statistical modeling, and data mining within the context of Big Data. Be prepared to discuss how these techniques are applied to real-world problems.

- Data Governance and Security: Understand the importance of data governance and security in Big Data environments. Explore topics like data lineage, access control, and data privacy.

- Cloud-Based Big Data Solutions: Familiarize yourself with cloud-based Hadoop offerings from major providers (AWS EMR, Azure HDInsight, Google Cloud Dataproc). Understand their advantages and how they differ from on-premise deployments.

Next Steps

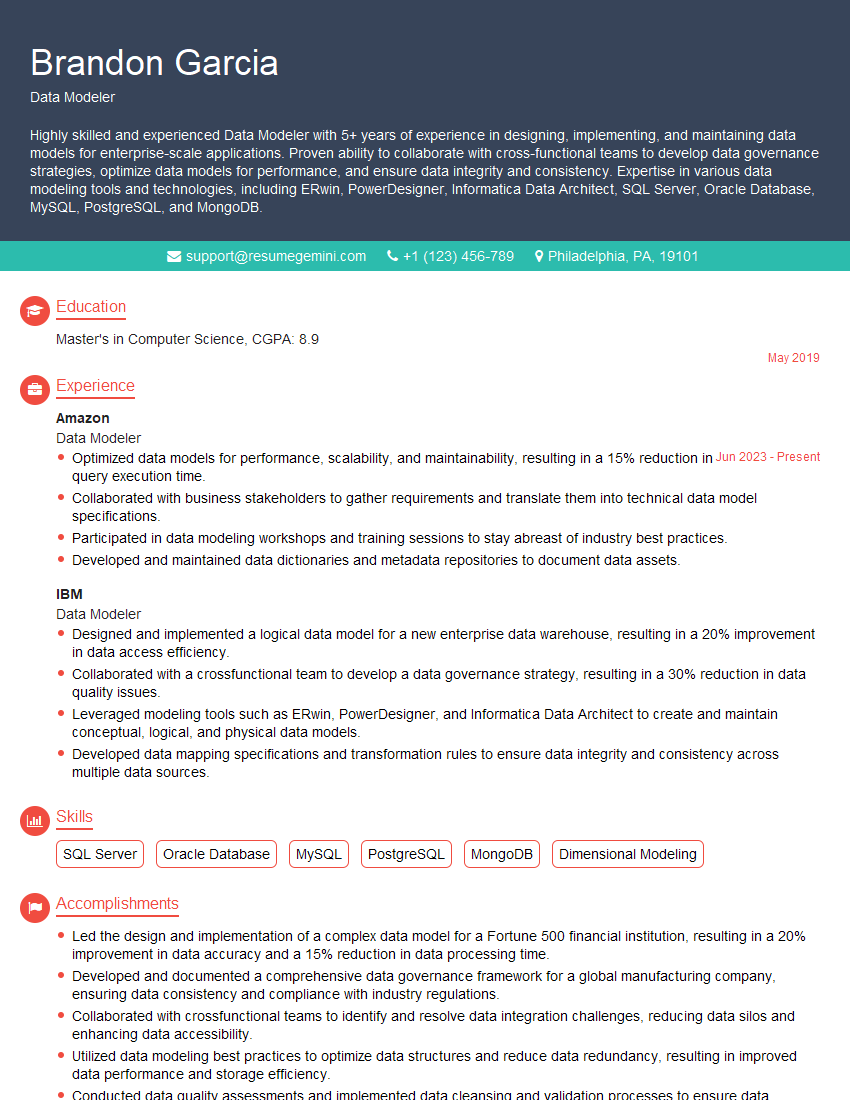

Mastering Big Data and Hadoop opens doors to exciting and high-demand careers. To maximize your job prospects, focus on crafting a compelling and ATS-friendly resume that showcases your skills and experience effectively. ResumeGemini is a trusted resource to help you build a professional and impactful resume. They provide examples of resumes tailored specifically to Big Data and Hadoop roles, giving you a head start in presenting yourself as the ideal candidate. Take the next step and invest in creating a resume that truly reflects your capabilities – your future self will thank you!
