Every successful interview starts with knowing what to expect. In this blog, we’ll take you through the top Cloud-Based Data Processing interview questions, breaking them down with expert tips to help you deliver impactful answers. Step into your next interview fully prepared and ready to succeed.

Questions Asked in Cloud-Based Data Processing Interview

Q 1. Explain the difference between batch processing and real-time processing in cloud environments.

Batch processing and real-time processing represent two fundamentally different approaches to data handling in cloud environments. Think of batch processing as a factory assembly line: you collect a large batch of raw materials (data), process them all at once, and then get the finished product (analyzed data) later. Real-time processing, on the other hand, is more like a live news broadcast – data is processed and made available instantly, with minimal latency.

- Batch Processing: Ideal for large datasets where immediate results aren’t crucial. It’s cost-effective because it utilizes resources efficiently during off-peak hours. Examples include nightly data warehousing updates, generating monthly reports, or processing large log files for security analysis. The data is processed in large batches, often at scheduled intervals.

- Real-time Processing: Essential for applications requiring immediate insights, such as fraud detection, stock trading, or personalized recommendations. Data is processed as it arrives, with low latency. This approach demands higher computational power and often more expensive infrastructure but provides immediate feedback.

Choosing between the two depends entirely on the application’s requirements. For example, analyzing website traffic for a daily summary would be a batch processing task, while detecting fraudulent transactions in an online payment system requires real-time processing.
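The contrast can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the event data and the names `batch_daily_summary` and `StreamingCounter` are hypothetical, and a real system would typically rely on a scheduler for the batch job and a stream processor for the real-time path.

```python
from collections import Counter

# Hypothetical page-view events: (user_id, page)
EVENTS = [("u1", "/home"), ("u2", "/home"), ("u1", "/cart"), ("u3", "/home")]


def batch_daily_summary(events):
    """Batch style: wait until all events are collected, then process them in one pass."""
    return Counter(page for _, page in events)


class StreamingCounter:
    """Streaming style: update the result incrementally as each event arrives."""

    def __init__(self):
        self.counts = Counter()

    def on_event(self, event):
        _, page = event
        self.counts[page] += 1
        return self.counts[page]  # an up-to-date answer is available immediately


batch_result = batch_daily_summary(EVENTS)

stream = StreamingCounter()
for e in EVENTS:
    stream.on_event(e)
```

Both paths end with the same counts. The difference is when the answer becomes available: once at the end of the batch, or after every single event.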

Q 2. Describe your experience with various cloud data storage services (e.g., AWS S3, Azure Blob Storage, GCP Cloud Storage).

I have extensive experience working with various cloud storage services, including AWS S3, Azure Blob Storage, and GCP Cloud Storage. My experience spans from designing storage architectures for large-scale data lakes to optimizing data retrieval for analytical applications.

- AWS S3 (Amazon Simple Storage Service): I’ve used S3 extensively for storing raw data, processed data, and application assets. I’ve leveraged its features like versioning for data protection, lifecycle policies for cost optimization, and integration with other AWS services like EMR and Glue for seamless data processing.

- Azure Blob Storage: My experience with Azure Blob Storage includes setting up highly available and scalable storage solutions for large media files and archival data. For analytics workloads, I’ve used Azure Data Lake Storage Gen2, which is built on Blob Storage and adds a hierarchical namespace for directory-style organization.

- GCP Cloud Storage: I’ve worked with GCP Cloud Storage for storing various data types, including images, videos, and structured data. I’ve utilized its strong integration with other GCP services like BigQuery and Dataflow for efficient data processing. I’ve also leveraged its lifecycle management features to control storage costs.

In each case, my approach focused on selecting the optimal storage class based on access frequency and cost considerations. I’ve consistently prioritized security by implementing appropriate access controls and encryption strategies.

Q 3. How would you design a scalable data pipeline for processing large datasets in the cloud?

Designing a scalable data pipeline for large datasets in the cloud requires a well-defined architecture that addresses various aspects of data ingestion, processing, and storage. I typically follow a modular and distributed approach, leveraging cloud-native services.

1. Ingestion: This stage involves collecting data from various sources (databases, APIs, IoT devices, etc.). I’d employ services like Apache Kafka or Amazon Kinesis for high-throughput, fault-tolerant data ingestion. These systems can handle massive data streams and provide a robust foundation for the pipeline.

2. Processing: This step involves transforming and enriching the ingested data. I might use serverless functions (AWS Lambda, Azure Functions, Google Cloud Functions) or managed services like Apache Spark on cloud-based clusters (EMR, Databricks, Dataproc) for parallel processing of large datasets. This allows for scalability and efficient resource utilization.

3. Storage: Data storage is crucial. I’d select appropriate storage based on data type and access patterns: cloud object storage (S3, Azure Blob Storage, GCP Cloud Storage) for large-scale, cost-effective storage; data warehouses (Snowflake, Redshift, BigQuery) for analytical queries; and NoSQL databases (MongoDB, Cassandra, DynamoDB) for specific use cases.

4. Monitoring and Logging: Comprehensive monitoring and logging are essential to track pipeline health, identify bottlenecks, and ensure data quality. Cloud monitoring services (Amazon CloudWatch, Azure Monitor, Google Cloud Monitoring, formerly Stackdriver) expose the metrics, logs, and alerts needed for this.

Example Architecture: A common pattern is to use Kafka for ingestion, Spark for processing (running on a managed cluster), and then loading the processed data into a data warehouse for reporting and analytics. The entire process can be orchestrated using tools like Apache Airflow or Prefect for managing the workflow and dependencies.
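The modular structure described above can be sketched with plain Python generators. This is only an illustration under stated assumptions: the function names, the record fields, and the list standing in for a warehouse are all hypothetical, and in practice each stage would be backed by a service such as Kafka, Spark, or a data warehouse.

```python
import json
from typing import Iterable, Iterator


def ingest(raw_lines: Iterable[str]) -> Iterator[dict]:
    """Ingestion: parse incoming JSON lines, skipping malformed records."""
    for line in raw_lines:
        try:
            yield json.loads(line)
        except json.JSONDecodeError:
            continue  # a real pipeline would route this to a dead-letter queue


def process(records: Iterable[dict]) -> Iterator[dict]:
    """Processing: enrich each record with a derived field."""
    for rec in records:
        yield {**rec, "amount_cents": round(rec["amount"] * 100)}


def store(records: Iterable[dict], sink: list) -> int:
    """Storage: append to a sink (a list standing in for object storage or a warehouse)."""
    count = 0
    for rec in records:
        sink.append(rec)
        count += 1
    return count


raw = ['{"id": 1, "amount": 9.99}', "not json", '{"id": 2, "amount": 5.0}']
warehouse: list = []
loaded = store(process(ingest(raw)), warehouse)
```

Because each stage only consumes an iterator and produces one, a stage can be swapped out or scaled independently, which is the property the managed services provide at much larger scale.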

Q 4. What are the key considerations for data security and privacy in cloud-based data processing?

Data security and privacy are paramount in cloud-based data processing. Addressing these concerns requires a multifaceted approach encompassing several key considerations:

- Data Encryption: Encrypting data at rest and in transit is crucial. Cloud providers offer various encryption services, such as server-side encryption (SSE) and client-side encryption. Selecting the appropriate encryption method depends on the sensitivity of the data and security requirements.

- Access Control: Implementing fine-grained access control mechanisms is vital. Role-Based Access Control (RBAC) allows for granular permission management, ensuring only authorized users can access specific data and functionalities.

- Data Loss Prevention (DLP): DLP tools help prevent sensitive data from leaving the cloud environment. They scan data for confidential information (for example, personal identifiers or card numbers) and can alert administrators or block the transfer when a policy is violated.

- Compliance and Regulations: Adhering to relevant regulations like GDPR, HIPAA, or CCPA is mandatory. This involves implementing appropriate security controls and data governance policies to meet legal obligations.

- Auditing and Monitoring: Regularly auditing security controls and monitoring system logs is crucial to detect and respond to potential threats and data breaches promptly.

A well-defined security strategy, coupled with regular security assessments and penetration testing, ensures the confidentiality, integrity, and availability of data in the cloud.
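As a small illustration of role-based access control, here is a hedged Python sketch. The roles, users, and permission strings are hypothetical, and in a real deployment these checks would be delegated to the cloud provider's IAM service rather than implemented in application code.

```python
# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "analyst": {"sales:read"},
    "engineer": {"sales:read", "sales:write"},
    "admin": {"sales:read", "sales:write", "pii:read"},
}

USER_ROLES = {"dana": ["analyst"], "lee": ["engineer", "admin"]}


def is_allowed(user, permission):
    """Grant access only if one of the user's roles carries the permission (deny by default)."""
    roles = USER_ROLES.get(user, [])
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)


def read_dataset(user, dataset):
    """Check permission before returning data, and record the decision for auditing."""
    permission = f"{dataset}:read"
    allowed = is_allowed(user, permission)
    audit_entry = {"user": user, "permission": permission, "allowed": allowed}
    return allowed, audit_entry


ok, entry = read_dataset("dana", "sales")
denied, _ = read_dataset("dana", "pii")
unknown, _ = read_dataset("mallory", "sales")
```

Two design choices are worth mentioning in an interview: access is denied by default for unknown users and roles, and every decision produces an audit record.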

Q 5. Compare and contrast different data warehousing solutions in the cloud (e.g., Snowflake, Redshift, BigQuery).

Snowflake, Redshift, and BigQuery are leading cloud data warehousing solutions, each with its strengths and weaknesses:

- Snowflake: A fully managed cloud data warehouse known for its scalability, performance, and ease of use. Its architecture separates storage from compute, so it can handle massive datasets and many concurrent queries by scaling compute independently. Its usage-based pricing model is attractive, and it runs on AWS, Azure, and Google Cloud.

- Redshift: Amazon’s data warehouse service, tightly integrated with the AWS ecosystem. It offers good performance and scalability but can be less flexible than Snowflake in pricing and deployment options (it runs only on AWS). Its integration with other AWS services is a major advantage.

- BigQuery: Google Cloud’s serverless data warehouse known for its strong analytical capabilities and integration with other GCP services. It’s highly scalable and excels at handling large datasets with its columnar storage and optimized query engine. Its on-demand pricing, billed by the amount of data each query processes, can be favorable for large-scale analytical workloads when queries scan only the columns and partitions they need.

The choice depends on the specific needs of the project. Snowflake’s flexibility and ease of use make it a popular choice for various applications. Redshift’s strong integration with AWS is beneficial for users already heavily invested in the AWS ecosystem. BigQuery’s serverless nature and analytical prowess are advantageous for large-scale analytical workloads.

Q 6. Explain your experience with ETL (Extract, Transform, Load) processes in a cloud environment.

My ETL experience in cloud environments is extensive. I’ve designed and implemented numerous ETL pipelines using various tools and technologies, always focusing on efficiency, scalability, and maintainability.

I’ve utilized both managed ETL services (like AWS Glue, Azure Data Factory, Google Cloud Data Fusion) and open-source tools (Apache Spark, Apache Kafka) depending on project requirements and budget constraints. Managed services often provide ease of use and management, while open-source tools offer more flexibility and customization options.

A typical ETL process I might design involves:

- Extract: Using connectors and APIs to extract data from various sources, ensuring data integrity and handling potential errors gracefully.

- Transform: Employing data transformation techniques (cleaning, filtering, aggregation, etc.) using tools like Spark or serverless functions to prepare the data for loading.

- Load: Loading the transformed data into the target data warehouse or data lake, ensuring data consistency and handling potential conflicts.

I always prioritize robust error handling, logging, and monitoring throughout the ETL process to ensure data quality and pipeline stability. My goal is to create efficient and reliable ETL pipelines that can adapt to changing data volumes and requirements.
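The three stages can be sketched with Python's standard library. This is a minimal illustration, assuming a hypothetical CSV extract and an in-memory SQLite database standing in for the target warehouse; the table and column names are invented for the example.

```python
import csv
import io
import sqlite3

# Hypothetical source extract; in practice this would come from an API,
# a database, or a file in object storage.
SOURCE_CSV = """order_id,customer,amount
1,alice,10.50
2,bob,
3,alice,4.25
"""


def extract(text):
    """Extract: read rows from the CSV source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop rows with a missing amount and cast types."""
    clean = []
    for row in rows:
        if not row["amount"]:
            continue  # a real pipeline would log or quarantine rejected rows
        clean.append((int(row["order_id"]), row["customer"], float(row["amount"])))
    return clean


def load(conn, rows):
    """Load: upsert into the target table so that reruns do not create duplicates."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform(extract(SOURCE_CSV)))
load(conn, transform(extract(SOURCE_CSV)))  # rerun is safe because of the upsert
row_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
total = conn.execute("SELECT SUM(amount) FROM orders").fetchone()[0]
```

Running the load twice leaves two rows, not four. Making the load step safe to rerun is one of the simplest ways to keep a pipeline stable when jobs are retried.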

Q 7. How do you handle data quality issues in a cloud-based data processing system?

Handling data quality issues is crucial for the success of any cloud-based data processing system. My approach involves a multi-stage strategy:

- Data Profiling: Before any processing begins, I perform thorough data profiling to understand the data’s characteristics (data types, distributions, completeness, etc.). This step helps identify potential quality issues early on.

- Data Cleaning: I employ various data cleaning techniques to address issues like missing values, inconsistencies, and outliers. This might involve data imputation, standardization, or deduplication using techniques appropriate for the specific data type and issue.

- Data Validation: Implementing data validation rules and constraints at various stages of the pipeline ensures data integrity. This involves checks for data types, ranges, and consistency across different datasets.

- Data Monitoring: Continuous data monitoring post-processing is essential to identify and address any emerging quality issues. This involves setting up alerts and dashboards to track key data quality metrics.

- Data Governance: Establishing clear data governance policies and procedures is vital to maintaining data quality over time. This involves defining data quality standards, roles and responsibilities, and processes for managing data quality issues.

Tools like data quality platforms and custom scripts can automate these processes, making them more efficient and effective. A proactive approach to data quality, rather than reactive, minimizes errors and leads to more accurate and reliable data-driven decisions.
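A custom validation script of the kind mentioned above might look like the following sketch. The rules, field names, and the `quality_report` helper are hypothetical; dedicated data quality platforms offer far richer checks, but the structure (named rules, rejected rows with reasons, a pass rate to monitor) is similar.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    name: str
    check: Callable[[dict], bool]


# Hypothetical rules for a hypothetical "orders" record.
RULES = [
    Rule("order_id is a positive integer",
         lambda r: isinstance(r.get("order_id"), int) and r["order_id"] > 0),
    Rule("amount is within range",
         lambda r: isinstance(r.get("amount"), (int, float)) and 0 <= r["amount"] <= 10_000),
    Rule("currency is a 3-letter code",
         lambda r: isinstance(r.get("currency"), str) and len(r["currency"]) == 3),
]


def validate(record):
    """Return the names of all rules the record violates."""
    return [rule.name for rule in RULES if not rule.check(record)]


def quality_report(records):
    """Split records into valid and rejected, and compute a pass rate to monitor."""
    valid, rejected = [], []
    for rec in records:
        failures = validate(rec)
        if failures:
            rejected.append((rec, failures))
        else:
            valid.append(rec)
    pass_rate = len(valid) / len(records) if records else 1.0
    return valid, rejected, pass_rate


records = [
    {"order_id": 1, "amount": 25.0, "currency": "USD"},
    {"order_id": -5, "amount": 25.0, "currency": "USD"},
    {"order_id": 2, "amount": None, "currency": "US"},
]
valid, rejected, pass_rate = quality_report(records)
```

The pass rate is the kind of metric that can be fed into a dashboard or alert, while the rejected rows and their failure reasons support root-cause analysis.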

Q 8. Describe your experience with various data processing frameworks (e.g., Apache Spark, Hadoop, Apache Flink).

My experience spans several prominent data processing frameworks. Apache Spark, for instance, is my go-to for large-scale data processing due to its in-memory computation capabilities, making it significantly faster than traditional Hadoop MapReduce for iterative algorithms. I’ve used Spark extensively in projects involving real-time analytics and machine learning model training, leveraging its SQL API for querying and its MLlib library for model building. I’ve optimized Spark jobs by tuning the number of partitions, using broadcast variables to ship small lookup tables to every executor instead of shuffling them, and caching datasets that are reused across stages.

Hadoop, while not as fast as Spark for iterative tasks, remains crucial for batch processing of extremely large datasets that don’t fit into memory. My experience includes designing and implementing Hadoop workflows using MapReduce for ETL (Extract, Transform, Load) processes, where the volume and velocity of data necessitated a distributed approach. I’ve also worked with HDFS (Hadoop Distributed File System) for robust data storage and management.

Apache Flink, on the other hand, is a powerful framework for stream processing. I’ve employed Flink in projects requiring real-time data analysis from various sources, building applications for fraud detection and anomaly detection. Flink’s ability to handle both batch and streaming workloads in a unified platform is particularly valuable, simplifying application development and maintenance. I’ve leveraged Flink’s state management features to build highly reliable and fault-tolerant streaming applications.

Q 9. How do you optimize query performance in a cloud-based data warehouse?

Optimizing query performance in a cloud-based data warehouse involves a multifaceted approach. It’s like fine-tuning a well-oiled machine: every component matters. First, data modeling plays a critical role. Properly designed schemas with appropriate partitioning, clustering or sort keys (and indexes, where the warehouse supports them) can drastically reduce query execution time. For example, using columnar storage formats like Parquet or ORC often significantly outperforms row-based storage for analytical queries, because a query reads only the columns it needs.

Secondly, query optimization strategies are essential. This includes understanding query execution plans, using appropriate join strategies (e.g., hash joins, merge joins), and employing techniques like predicate pushdown to filter data at the source. Many cloud data warehouses offer query optimizers, but it’s crucial to understand how they work and sometimes provide hints to guide them. For instance, specifying data types correctly can influence how the optimizer selects the best execution plan.

Thirdly, resource allocation is key. Insufficient CPU, memory, or storage can bottleneck query performance. Cloud providers offer tools to monitor and adjust resource allocation dynamically based on workload. Auto-scaling is a powerful tool here, automatically adjusting resources to handle fluctuating demand.

Finally, data warehousing best practices, such as materialized views and caching frequently accessed data, can significantly improve query response times. Think of materialized views as pre-computed answers to common queries, instantly available when needed.

Q 10. Explain your experience with data modeling techniques for cloud-based data warehouses.

My experience with data modeling for cloud-based data warehouses centers around choosing the right model for the specific needs of the application. The choice often lies between a star schema, snowflake schema, or data vault modeling. A star schema, simple and efficient, is ideal for straightforward reporting. A snowflake schema, which normalizes the star schema’s dimension tables, reduces redundancy and improves data integrity but can be slightly less efficient to query because it requires more joins.

Data vault modeling, on the other hand, is ideal for situations requiring high data lineage and historical tracking. It is particularly useful in situations requiring regulatory compliance or complex data integration scenarios. The selection of a data model often involves a trade-off between query performance and data integrity. I’ve encountered scenarios where a slightly less performant model was necessary to maintain better data quality and compliance requirements.

Beyond schema design, partitioning and clustering are crucial for optimizing query performance. Partitioning lets the engine skip partitions that a query’s filter rules out (partition pruning) and process the remaining ones in parallel, while clustering stores related rows together so that fewer blocks need to be read. For instance, partitioning a table by date allows for efficient querying of data within a specific time range. Careful consideration of these factors dramatically improves performance and scalability.
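The benefit of date partitioning can be shown with a toy model. The `DatePartitionedTable` class below is hypothetical and keeps everything in memory; it only illustrates why a date filter lets the engine touch a small fraction of the data.

```python
from collections import defaultdict
from datetime import date


class DatePartitionedTable:
    """Toy table that stores rows in one bucket per calendar day."""

    def __init__(self):
        self.partitions = defaultdict(list)

    def insert(self, row):
        self.partitions[row["event_date"]].append(row)

    def query(self, start, end):
        """Return rows in [start, end] and the number of partitions actually read."""
        scanned = 0
        result = []
        for day, rows in self.partitions.items():
            if start <= day <= end:  # partitions outside the range are skipped entirely
                scanned += 1
                result.extend(rows)
        return result, scanned


table = DatePartitionedTable()
for d in range(1, 31):
    table.insert({"event_date": date(2024, 1, d), "value": d})

rows, partitions_scanned = table.query(date(2024, 1, 10), date(2024, 1, 12))
```

Here a three-day query reads 3 of the 30 partitions. In a real warehouse the same filter would cut the bytes scanned, and often the cost, by a similar proportion.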

Q 11. How do you monitor and manage the performance of a cloud-based data processing system?

Monitoring and managing the performance of a cloud-based data processing system is crucial for ensuring reliability and efficiency. Cloud providers offer a range of monitoring tools, often integrated into their data warehouse and processing services. These tools provide insights into various aspects of system performance, including CPU utilization, memory usage, network latency, and query execution times.

I typically establish a comprehensive monitoring strategy leveraging these tools, configuring alerts for critical thresholds (e.g., high CPU usage, slow query response times). This proactive approach allows for timely intervention, preventing performance degradation and minimizing service disruptions. Furthermore, I regularly analyze performance logs and metrics to identify potential bottlenecks and optimize system configurations.

Beyond basic monitoring, I frequently employ profiling tools to analyze the performance of individual queries or jobs. This detailed analysis can pinpoint performance issues, such as inefficient queries or resource contention. Addressing these issues, often through query optimization or resource adjustments, significantly improves overall system performance. The process is iterative: monitoring, analysis, optimization, and repeating the cycle to continuously improve system efficiency and stability.

Q 12. What are some common challenges encountered in cloud-based data processing, and how have you overcome them?

Cloud-based data processing presents unique challenges. One common issue is cost management. Uncontrolled resource usage can quickly escalate expenses. To overcome this, I focus on optimizing resource allocation, using auto-scaling to adjust resources dynamically, and employing cost optimization tools offered by cloud providers. For example, right-sizing instances and leveraging spot instances can significantly reduce costs.

Another challenge is data security and privacy. Ensuring data confidentiality, integrity, and availability in the cloud requires implementing robust security measures. This includes data encryption both in transit and at rest, access control management using IAM (Identity and Access Management) roles, and regular security audits. Compliance with relevant regulations, such as GDPR, is also crucial.

Finally, dealing with data heterogeneity and schema evolution is a common hurdle. Data often originates from diverse sources with varying formats and structures. To overcome this, I employ data integration tools and techniques to cleanse, transform, and load data into a consistent format. Schema evolution necessitates designing flexible schemas and implementing mechanisms to handle changes gracefully without disrupting existing applications. A robust ETL process is key to addressing these challenges effectively.

Q 13. Explain your experience with serverless computing for data processing tasks.

Serverless computing offers a compelling approach to data processing, particularly for event-driven or batch jobs where scalability and cost-efficiency are paramount. I have extensive experience using serverless platforms like AWS Lambda and Azure Functions for data processing tasks. For example, I’ve built Lambda functions triggered by new data arriving in an S3 bucket to perform data transformations and load the data into a data warehouse. This approach eliminates the need to manage servers, reducing operational overhead and allowing for automatic scaling based on demand.

The key advantages of serverless for data processing include cost savings (pay-per-use model), scalability (automatic scaling based on demand), and simplified management (no server administration). However, it’s crucial to be mindful of cold starts (initial delays in function execution), execution time limits (AWS Lambda, for example, caps a single invocation at 15 minutes), and potential vendor lock-in. Proper function design, including efficient code and optimized invocation strategies, can mitigate these challenges. Serverless shines for infrequent or bursty workloads that can be split into short-lived invocations, and for event-driven processing where bursts of activity are common.
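The S3-triggered pattern can be sketched as a Python Lambda handler. The `Records[].s3.bucket.name` and `Records[].s3.object.key` fields follow the S3 event notification format, but the bucket name, the object key, and the `transform_and_load` placeholder are hypothetical; a real function would read the object (for example with boto3) and write to the warehouse.

```python
import json
from urllib.parse import unquote_plus


def extract_s3_objects(event):
    """Return (bucket, key) pairs from an S3 event notification payload."""
    objects = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 event notifications.
        key = unquote_plus(record["s3"]["object"]["key"])
        objects.append((bucket, key))
    return objects


def transform_and_load(bucket, key):
    """Hypothetical placeholder for reading, transforming, and loading the object."""
    return {"source": f"s3://{bucket}/{key}", "status": "processed"}


def handler(event, context):
    """Entry point in the shape AWS Lambda expects for Python functions."""
    results = [transform_and_load(b, k) for b, k in extract_s3_objects(event)]
    return {"statusCode": 200, "body": json.dumps(results)}


# Local invocation with a trimmed-down sample event (bucket and key are made up).
sample_event = {
    "Records": [
        {"s3": {"bucket": {"name": "example-raw-data"},
                "object": {"key": "2024/01/sales+report.csv"}}}
    ]
}
response = handler(sample_event, None)
```

Keeping the event parsing separate from the transformation logic makes the function easy to test locally, without deploying it or mocking AWS services.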

Q 14. Describe your experience with containerization technologies (e.g., Docker, Kubernetes) in a cloud data processing context.

Containerization technologies like Docker and Kubernetes are indispensable in modern cloud data processing environments. Docker allows for packaging applications and their dependencies into portable containers, ensuring consistent execution across different environments. This eliminates the “it works on my machine” problem, ensuring reliable deployment and operation in the cloud. I frequently use Docker to package data processing applications (e.g., Spark applications) and their dependencies before deploying them to a cloud environment.

Kubernetes takes container orchestration to the next level. It manages the deployment, scaling, and monitoring of containerized applications. This is essential for managing complex data processing workflows involving multiple containers and services. For instance, I might use Kubernetes to deploy a distributed Spark cluster across multiple nodes in a cloud environment, ensuring high availability and scalability. Kubernetes simplifies deployment, manages resource allocation efficiently, and provides robust monitoring and logging capabilities, all crucial aspects of managing a complex cloud data processing system.

Q 15. How do you ensure data consistency and integrity in a distributed cloud data processing environment?

Ensuring data consistency and integrity in a distributed cloud environment is paramount. It’s like building a skyscraper – each floor (data element) needs to be perfectly aligned with the others to prevent collapse (data corruption). We achieve this through a multi-pronged approach:

- Idempotent Operations: Designing operations that can be repeated multiple times without altering the outcome. For instance, if a data update fails halfway, retrying it shouldn’t corrupt the data.

- Distributed Transactions: Using two-phase commit (2PC) to make all parts of a distributed operation commit or abort atomically, or sagas, which split the operation into local transactions and undo completed steps with compensating actions if a later step fails. Imagine transferring money between accounts across multiple servers: either both the debit and the credit happen or neither does.

- Data Replication and Consistency Models: Employing techniques like primary-replica replication (also called master-slave replication) or multi-master replication with appropriate consistency models (e.g., eventual consistency, strong consistency) to manage data redundancy and maintain data integrity across multiple nodes. The choice depends on the application’s needs: a banking app needs strong consistency, while a social media feed can tolerate eventual consistency.

- Checksums and Hashing: Using checksums or hashing algorithms to detect data corruption during storage or transmission. This acts as a safeguard against silent data corruption.

- Data Validation and Constraints: Defining data validation rules and constraints (e.g., data type, range, format) at the source and destination to catch erroneous data before it’s processed. Think of it as a quality control check for your data.

For example, in a project involving real-time analytics on sensor data, we used Apache Kafka’s idempotent producers and transactions to ensure that each sensor reading was recorded exactly once and consistently across all processing nodes.
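Two of these techniques, idempotent writes and checksums, fit in a short Python sketch. The `IdempotentSink` class, event IDs, and sensor payload are hypothetical; the sketch shows the general idea and not how any particular messaging system implements it.

```python
import hashlib
import json


def checksum(payload: dict) -> str:
    """Compute a SHA-256 digest over a canonical JSON encoding of the payload."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class IdempotentSink:
    """Stores each reading at most once, keyed by a unique event ID."""

    def __init__(self):
        self.rows = {}

    def write(self, event_id: str, payload: dict, expected_checksum: str) -> str:
        if checksum(payload) != expected_checksum:
            return "rejected"  # payload does not match the digest sent with it
        if event_id in self.rows:
            return "duplicate"  # a retry or redelivery; safe to ignore
        self.rows[event_id] = payload
        return "written"


sink = IdempotentSink()
reading = {"sensor": "s-17", "temp_c": 21.5}
digest = checksum(reading)

first = sink.write("evt-001", reading, digest)
retry = sink.write("evt-001", reading, digest)  # e.g. redelivered after a timeout
corrupt = sink.write("evt-002", {"sensor": "s-17", "temp_c": 99.9}, digest)
```

The retry is recognized as a duplicate and the altered payload is rejected, so the sink ends up with exactly one stored reading regardless of how many times the message is delivered.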

Q 16. Explain your experience with data versioning and rollback strategies in the cloud.

Data versioning and rollback strategies are essential for managing changes and mitigating errors in cloud-based data processing. It’s like having a detailed history of edits in a document, allowing you to revert to previous versions if needed. My experience involves:

- Version Control Systems (e.g., Git): For managing code and configuration files related to data processing pipelines. This ensures that we can track changes and revert to previous working states.

- Data Lakehouse Architectures: Leveraging tools like Delta Lake or Apache Iceberg to provide ACID properties (Atomicity, Consistency, Isolation, Durability) on data lake data. These offer built-in versioning and time-travel capabilities, allowing us to roll back to specific points in time.

- Cloud-Native Backup and Restore Services: Using managed services like AWS Backup or Azure Backup to create regular snapshots of data and metadata. This facilitates quick recovery from failures or accidental data deletion.

- Schema Evolution Management: Implementing robust schema management practices to handle changes to data structures without disrupting downstream processes. This involves versioning the schema itself and handling compatibility between different versions.

In a recent project, we used Delta Lake to version our data warehouse tables. This allowed us to easily revert to a previous state of the data after a faulty ETL (Extract, Transform, Load) job ran, minimizing downtime and data loss.
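The idea behind versioned tables and time travel can be illustrated with a toy in-memory model. The `VersionedTable` class below is hypothetical and is not the Delta Lake or Iceberg API; it only shows the concept of keeping a snapshot per commit and rolling back by re-committing an earlier snapshot.

```python
import copy


class VersionedTable:
    """Toy table that keeps an immutable snapshot per committed version."""

    def __init__(self):
        self._versions = [[]]  # version 0 is the empty table

    @property
    def current_version(self):
        return len(self._versions) - 1

    def read(self, version=None):
        """Read the latest snapshot, or an older one ("time travel")."""
        idx = self.current_version if version is None else version
        return copy.deepcopy(self._versions[idx])

    def commit(self, rows):
        """Write a new snapshot and return its version number."""
        self._versions.append(copy.deepcopy(rows))
        return self.current_version

    def restore(self, version):
        """Roll back by committing an old snapshot as the newest version."""
        return self.commit(self._versions[version])


table = VersionedTable()
good = table.commit([{"id": 1, "amount": 10}, {"id": 2, "amount": 20}])
bad = table.commit([{"id": 1, "amount": None}])  # a faulty ETL run overwrites the data
restored = table.restore(good)
```

In this sketch the rollback adds a new version instead of deleting the faulty one, so the history of what happened, including the bad load, stays available for auditing.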

Q 17. How do you handle data anomalies and outliers in a cloud-based data processing system?

Handling data anomalies and outliers is crucial for maintaining data quality and accuracy. Think of it as cleaning up a messy room – you need to identify and deal with the things that don’t belong. My approach includes:

- Data Profiling and Exploration: Employing statistical methods and data visualization techniques to identify unusual patterns and potential outliers. This helps in understanding the nature and extent of the anomalies.

- Statistical Methods for Outlier Detection: Using methods like Z-score, IQR (Interquartile Range), or DBSCAN (Density-Based Spatial Clustering of Applications with Noise) to identify data points that significantly deviate from the norm.

- Data Cleansing Techniques: Applying data cleansing techniques like imputation (filling missing values), smoothing, or removal of outliers, depending on the nature of the anomaly and its impact on the analysis. The strategy depends on whether the outlier is an error or truly exceptional data.

- Machine Learning for Anomaly Detection: Using machine learning models like one-class SVM or autoencoders to identify anomalies that are difficult to detect using traditional statistical methods. This is particularly effective when dealing with complex datasets.

In a fraud detection system, we used machine learning to identify unusual transaction patterns that indicated potential fraudulent activities. These patterns were not easily detectable using traditional statistical methods.
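The two simplest statistical methods mentioned above, Z-score and IQR, can be sketched with Python's standard `statistics` module. The transaction amounts are made up, and the thresholds (3 standard deviations, 1.5 times the IQR) are common conventions, not fixed rules.

```python
import statistics


def zscore_outliers(values, threshold=3.0):
    """Flag values whose distance from the mean exceeds `threshold` standard deviations."""
    mean = statistics.fmean(values)
    stdev = statistics.pstdev(values)
    if stdev == 0:
        return []
    return [v for v in values if abs(v - mean) / stdev > threshold]


def iqr_outliers(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical transaction amounts with one obviously unusual value.
amounts = [12, 15, 14, 13, 16, 15, 14, 13, 15, 14, 500]

z_flagged = zscore_outliers(amounts, threshold=3.0)
iqr_flagged = iqr_outliers(amounts)
```

Both methods flag the value 500 here. The IQR method is usually the more robust choice for small or skewed samples, because a single extreme value inflates the mean and standard deviation that the Z-score depends on.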

Q 18. What are your preferred methods for data visualization and reporting in the cloud?

Data visualization and reporting are essential for communicating insights derived from cloud-based data processing. It’s about transforming raw data into easily understandable stories. My preferred methods include:

- Cloud-based BI Tools (e.g., Tableau, Power BI, Looker): These tools offer a user-friendly interface for creating interactive dashboards and reports, allowing for easy exploration and sharing of data.

- Custom Visualization Libraries (e.g., D3.js, Plotly): For creating highly customized visualizations tailored to specific needs and to the shape of the data.

- Data Storytelling Techniques: Combining visualizations with narrative explanations to create compelling reports that effectively communicate key findings.

- Automated Reporting: Scheduling automated reports to be generated and distributed at regular intervals. This saves time and ensures timely access to critical information.

In a project for a retail company, we used Tableau to create interactive dashboards showing sales trends, customer demographics, and inventory levels. This allowed business users to easily understand key performance indicators and make data-driven decisions.

Q 19. Describe your experience with implementing data governance policies in a cloud environment.

Implementing data governance policies in a cloud environment is crucial for ensuring data security, compliance, and quality. It’s like setting up rules and guidelines for a shared workspace. My experience includes:

- Data Access Control and Security: Defining granular access control policies to restrict access to sensitive data based on roles and responsibilities. This involves integrating with cloud Identity and Access Management (IAM) services.

- Data Lineage and Tracking: Implementing mechanisms to track the origin, movement, and transformations of data throughout its lifecycle. This ensures data traceability and accountability.

- Data Quality Monitoring and Management: Establishing processes and tools for monitoring data quality, identifying data quality issues, and implementing remediation strategies. This ensures data accuracy and reliability.

- Compliance and Regulatory Adherence: Ensuring compliance with relevant data privacy regulations (e.g., GDPR, CCPA) and industry standards. This involves implementing appropriate technical and organizational measures.

- Data Catalogs and Metadata Management: Implementing data catalogs to provide a centralized inventory of data assets, metadata, and associated policies. This enables better data discovery and governance.

In a healthcare project, we implemented stringent data governance policies to comply with HIPAA regulations, ensuring patient data privacy and security while enabling efficient data analysis for improving patient care.

Q 20. Explain your understanding of different cloud data integration patterns.

Cloud data integration patterns describe different approaches to combine data from various sources. These patterns are like different recipes for combining ingredients to create a delicious dish (integrated data). My understanding encompasses:

- Data Virtualization: Creating a unified view of data from disparate sources without actually moving or replicating the data. This is like a menu that describes all the dishes without needing to prepare them all upfront.

- Data Replication: Copying data from source systems to a central data warehouse or data lake. This is like creating a complete copy of all recipes to have readily available.

- Change Data Capture (CDC): Capturing only changes to data sources and propagating them to downstream systems. This is like only recording the changes in recipes as they are updated.

- ETL (Extract, Transform, Load): A traditional approach involving extracting data from sources, transforming it to match a target schema, and loading it into a target system. This is like taking different recipes, standardizing the format, and compiling them into a cookbook.

- ELT (Extract, Load, Transform): A more modern approach where data is loaded into a data warehouse or lake first and then transformed. This is like assembling all ingredients first and then preparing the dish based on the recipe.

The choice of pattern depends on factors like data volume, velocity, variety, and the specific requirements of the integration task. For example, in a real-time data streaming application, CDC is often preferred for its efficiency.
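To show what CDC buys over full replication, here is a simplified Python sketch. It derives change events by comparing two snapshots, which is an assumption made to keep the example self-contained; production CDC tools more commonly read the source database's transaction log. All names and data are hypothetical.

```python
def capture_changes(before, after):
    """Compare two snapshots keyed by primary key and emit insert/update/delete events."""
    changes = []
    for key, row in after.items():
        if key not in before:
            changes.append({"op": "insert", "key": key, "row": row})
        elif before[key] != row:
            changes.append({"op": "update", "key": key, "row": row})
    for key in before:
        if key not in after:
            changes.append({"op": "delete", "key": key})
    return changes


def apply_changes(target, changes):
    """Replay change events against a downstream copy."""
    for change in changes:
        if change["op"] == "delete":
            target.pop(change["key"], None)
        else:
            target[change["key"]] = change["row"]
    return target


source_v1 = {1: {"name": "Ada", "tier": "free"}, 2: {"name": "Bo", "tier": "pro"}}
source_v2 = {1: {"name": "Ada", "tier": "pro"}, 3: {"name": "Cy", "tier": "free"}}

replica = dict(source_v1)  # downstream copy, currently in sync with v1
events = capture_changes(source_v1, source_v2)
apply_changes(replica, events)  # only the change events move, not the full table
```

After replaying the three events the replica matches the source. On a large table with few changes, shipping only those events is what makes CDC efficient for near-real-time integration.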

Q 21. How do you choose the appropriate cloud service for a specific data processing task?

Choosing the appropriate cloud service for a specific data processing task depends on several factors, much like selecting the right tool for a specific job. The key considerations include:

- Data Volume and Velocity: For high-volume, high-velocity data streams, services like Apache Kafka or Amazon Kinesis are ideal. For smaller datasets that arrive in batches, landing files in cloud storage such as Amazon S3 or Azure Blob Storage may suffice.

- Data Variety and Structure: For structured data, relational databases like Amazon RDS or Cloud SQL are suitable. For unstructured or semi-structured data, data lakes built on cloud storage services are more appropriate.

- Processing Requirements: For complex analytics, managed services like Databricks or Amazon EMR provide scalable compute resources. For simpler tasks, serverless functions or managed database services may be sufficient.

- Scalability and Cost: Serverless options are cost-effective for intermittent tasks because you pay only while code runs, while managed cluster services suit sustained, large-scale processing.

- Security and Compliance: Choosing services that meet required security and compliance standards is crucial.

For instance, when processing large volumes of IoT sensor data requiring real-time analysis, we opted for a serverless architecture using AWS Lambda and Kinesis, allowing for cost-effective and scalable processing. Conversely, for batch processing of a large, structured dataset for reporting, we chose a managed service like Google BigQuery.
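The considerations above can be written down as a small decision helper. The sketch below is only a rule-of-thumb encoding of the heuristics in this answer; the `Workload` fields, the rule order, and the returned labels are assumptions made for illustration, and a real selection would also weigh cost, team skills, and compliance.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    streaming: bool          # data arrives continuously and needs low latency
    structured: bool         # fits a fixed relational schema
    complex_analytics: bool  # large joins, ML, or multi-stage transforms
    intermittent: bool       # runs occasionally rather than continuously


def suggest_service_category(workload: Workload) -> str:
    """Map workload traits to a service category (hypothetical rules of thumb)."""
    if workload.streaming:
        return "streaming platform (e.g. Kafka, Kinesis)"
    if workload.complex_analytics:
        return "managed cluster or warehouse (e.g. Databricks, EMR, BigQuery)"
    if workload.intermittent:
        return "serverless functions (e.g. Lambda)"
    if workload.structured:
        return "managed relational database (e.g. RDS, Cloud SQL)"
    return "data lake on object storage (e.g. S3, Blob Storage)"


iot_sensors = Workload(streaming=True, structured=False,
                       complex_analytics=False, intermittent=False)
monthly_reporting = Workload(streaming=False, structured=True,
                             complex_analytics=True, intermittent=False)

print(suggest_service_category(iot_sensors))
print(suggest_service_category(monthly_reporting))
```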

Q 22. Describe your experience with cost optimization strategies for cloud-based data processing.

Cost optimization in cloud-based data processing is crucial for maintaining profitability. It involves strategically managing cloud resources to minimize expenses without sacrificing performance. This requires a multi-faceted approach.

- Right-sizing instances: Instead of using oversized compute instances, I analyze workload requirements and select appropriately sized virtual machines (VMs). The pricing model matters as well. For a fault-tolerant batch job that needs significant compute power for a short period, spot instances (spare capacity offered at a discount, which the provider can reclaim at short notice) are highly effective. For a consistently running service, reserving capacity is usually more cost-effective.

- Leveraging serverless technologies: Serverless computing, like AWS Lambda or Azure Functions, automatically scales resources based on demand, eliminating the need to manage idle servers. This is particularly beneficial for event-driven architectures and microservices.

- Data storage optimization: Utilizing cost-effective storage tiers is paramount. Frequently accessed data can reside in faster, more expensive storage, while less frequently accessed data can be archived to cheaper, slower storage like Amazon S3 Glacier or Azure Archive Storage. Lifecycle management policies automate this process.

- Monitoring and alerting: Implementing robust monitoring and alerting systems allows for proactive identification of cost anomalies. This enables immediate action to prevent unnecessary resource consumption. For instance, a budget alert on daily spend can catch a runaway job, an alert on consistently low CPU utilization can flag over-provisioned instances, and consistently high utilization can indicate a need for scaling or optimization.

- Using Reserved Instances/Savings Plans: Committing to a certain amount of compute capacity upfront through Reserved Instances (RI) or Savings Plans can result in significant cost savings compared to on-demand pricing. Typical discounts fall in the 40-70% range, depending on the provider, term length, and payment option.

In a recent project, by implementing these strategies, we reduced our client’s cloud data processing costs by 35% without impacting performance. We achieved this through a combination of right-sizing instances, migrating data to a more cost-effective storage tier, and leveraging serverless functions for specific tasks.
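The trade-off between on-demand and committed pricing comes down to simple break-even arithmetic. The sketch below uses made-up hourly rates (they are not real prices from any provider) to show how many hours per month an instance has to run before a commitment becomes the cheaper option.

```python
HOURS_PER_MONTH = 730  # average number of hours in a month

ON_DEMAND_RATE = 0.10  # hypothetical USD per instance-hour
COMMITTED_RATE = 0.06  # hypothetical USD per instance-hour with a commitment


def on_demand_cost(hours_used: float) -> float:
    """On-demand is billed only for the hours actually used."""
    return ON_DEMAND_RATE * hours_used


def committed_cost() -> float:
    """A commitment is billed for every hour of the month, used or not."""
    return COMMITTED_RATE * HOURS_PER_MONTH


def cheaper_option(hours_used: float) -> str:
    """Name the cheaper pricing model for a given monthly usage."""
    if committed_cost() < on_demand_cost(hours_used):
        return "committed"
    return "on-demand"


# Usage level at which both pricing models cost the same.
break_even_hours = committed_cost() / ON_DEMAND_RATE

for hours in (200, 500, 730):
    print(hours, cheaper_option(hours))
print(f"break-even at about {break_even_hours:.0f} hours per month")
```

With these assumed rates, the commitment only pays off once the instance runs for more than roughly 60% of the month, which is why short or bursty jobs are usually better served by on-demand, spot, or serverless pricing.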

Q 23. What are the key performance indicators (KPIs) you would use to measure the success of a cloud-based data processing system?

Key Performance Indicators (KPIs) for a cloud-based data processing system should cover various aspects – cost, performance, and reliability. These are some of the most critical KPIs:

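- Data Processing Latency: The time between a record arriving and its result becoming available, usually tracked as percentiles (for example p50 and p95) rather than as an average. Low latency is crucial for real-time applications.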

- Throughput: The volume of data processed per unit of time. This measures the overall efficiency of the system.

- Data Ingestion Rate: How quickly data can be ingested into the system. Bottlenecks here can significantly impact overall performance.

- Cost per unit of processing: This tracks the cost-effectiveness of the system. It’s crucial for ensuring budget adherence.

- System Availability/Uptime: The percentage of time the system is operational. High uptime is essential for reliability.

- Error Rate: The percentage of failed processing attempts. Low error rates are critical for data integrity.

- Resource Utilization: How efficiently compute, memory, and storage resources are being used. Consistently low utilization suggests over-provisioning and room for cost savings, while utilization that stays close to 100% usually points to a bottleneck and a need to scale.

For example, in a project involving real-time fraud detection, minimizing latency was paramount. We meticulously monitored and optimized the system to ensure low latency and high throughput, which directly impacted the accuracy and timeliness of fraud detection.
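Several of these KPIs can be derived directly from per-job records. The following sketch assumes a hypothetical `JobRecord` structure with arrival and completion timestamps, and computes latency percentiles (nearest-rank method), throughput, and error rate over one measurement window. The sample values are invented.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class JobRecord:
    arrived_at: float     # seconds since the start of the window
    finished_at: float    # seconds since the start of the window
    bytes_processed: int
    succeeded: bool


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile for pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(records: List[JobRecord], window_seconds: float) -> Dict[str, float]:
    """Compute latency, throughput, and error-rate KPIs for one window."""
    latencies = [r.finished_at - r.arrived_at for r in records]
    total_bytes = sum(r.bytes_processed for r in records if r.succeeded)
    failures = sum(1 for r in records if not r.succeeded)
    return {
        "p50_latency_s": percentile(latencies, 50),
        "p95_latency_s": percentile(latencies, 95),
        "throughput_bytes_per_s": total_bytes / window_seconds,
        "error_rate": failures / len(records),
    }


records = [
    JobRecord(0.0, 0.5, 1000, True),
    JobRecord(1.0, 1.5, 1000, True),
    JobRecord(2.0, 4.0, 1000, True),
    JobRecord(3.0, 3.5, 0, False),
]

kpis = summarize(records, window_seconds=10)
for name, value in kpis.items():
    print(name, value)
```

Reporting p95 alongside p50 matters here: a single slow job barely moves the median but shows up clearly in the tail.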

Q 24. Explain your experience with implementing data encryption and access control mechanisms in the cloud.

Implementing data encryption and access control in the cloud is vital for ensuring data security and compliance. This involves a layered approach.

- Data Encryption at Rest: Encrypting data stored in databases, data lakes, or object storage, with keys managed through the cloud provider's key management service (e.g., AWS KMS, Azure Key Vault). This protects data from unauthorized access even if the storage is compromised.

- Data Encryption in Transit: Using TLS (the successor to the now-deprecated SSL) to encrypt data while it’s being transmitted between different components of the system. This protects data from interception during network transfer.

- Access Control Lists (ACLs): Implementing granular access control using ACLs to define which users or services have permission to access specific data or resources. This adheres to the principle of least privilege.

- Role-Based Access Control (RBAC): Using RBAC to assign permissions based on roles within the organization. This simplifies access management and improves security.

- Data Loss Prevention (DLP): Implementing DLP measures to prevent sensitive data from leaving the cloud environment without authorization. This can involve monitoring data transfers and blocking suspicious activity.

In a recent project involving healthcare data, we implemented end-to-end encryption using cloud provider-managed keys, granular access control via RBAC, and regular security audits to ensure compliance with HIPAA regulations. This involved detailed configuration of IAM roles, encryption policies, and network security groups.
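The RBAC and least-privilege ideas can be illustrated without any cloud SDK. The sketch below is a toy, in-memory permission check. The role names, users, and resources are hypothetical, and in a real deployment this logic lives in the provider's IAM service rather than in application code.

```python
from typing import Dict, Set, Tuple

Permission = Tuple[str, str]  # (action, resource)

# Each role is granted only the permissions it needs (least privilege).
ROLE_PERMISSIONS: Dict[str, Set[Permission]] = {
    "analyst": {("read", "deidentified_records")},
    "clinician": {("read", "patient_records"), ("write", "patient_records")},
    "auditor": {("read", "access_logs")},
}

# Users receive permissions only through their roles.
USER_ROLES: Dict[str, Set[str]] = {
    "dana": {"analyst"},
    "eli": {"clinician", "auditor"},
}


def is_allowed(user: str, action: str, resource: str) -> bool:
    """Deny by default; allow only if one of the user's roles grants the permission."""
    for role in USER_ROLES.get(user, set()):
        if (action, resource) in ROLE_PERMISSIONS.get(role, set()):
            return True
    return False


print(is_allowed("dana", "read", "patient_records"))
print(is_allowed("eli", "write", "patient_records"))
print(is_allowed("unknown_user", "read", "access_logs"))
```

Because access is granted through roles rather than per user, onboarding or offboarding someone is a one-line change to the role mapping, and unknown users or roles fall through to a denial.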

Q 25. Describe your experience with using cloud-based machine learning services for data analysis.

Cloud-based machine learning (ML) services significantly accelerate data analysis. I have extensive experience using services like Amazon SageMaker, Azure Machine Learning, and Google Cloud AI Platform (now Vertex AI). These platforms provide managed infrastructure, pre-trained models, and tools for building, training, and deploying ML models.

- Model Training: I utilize these platforms to train custom models using large datasets, leveraging their scalable compute resources. This often involves experimenting with different algorithms and hyperparameters to optimize model performance.

- Model Deployment: Once trained, models are deployed as APIs or integrated into data pipelines for real-time or batch processing. This typically involves using managed services for model hosting and scaling.

- Pre-trained Models: Leveraging pre-trained models for common tasks (e.g., image classification, natural language processing) can significantly reduce development time and improve accuracy.

- Hyperparameter Tuning: I use automated hyperparameter tuning capabilities to optimize model performance, minimizing manual effort and maximizing efficiency.

For instance, in a project involving customer churn prediction, I used Amazon SageMaker to train a model using historical customer data. The model was then deployed as an API to predict churn in real time, enabling proactive intervention strategies.
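Hyperparameter tuning boils down to evaluating candidate settings against held-out data and keeping the best one. The sketch below shows that loop as a plain grid search over a single threshold for a toy churn rule. The data is invented, the "model" is deliberately trivial, and managed tuning services often use smarter search strategies (such as Bayesian optimization) than this exhaustive loop.

```python
from typing import List, Tuple

# (days_since_last_login, churned) pairs; invented data for illustration only.
validation: List[Tuple[int, int]] = [
    (2, 0), (5, 0), (9, 0), (12, 0),
    (11, 1), (14, 1), (21, 1), (30, 1),
]


def accuracy(threshold: int, data: List[Tuple[int, int]]) -> float:
    """Score a rule that predicts churn when inactivity exceeds the threshold."""
    correct = sum(1 for days, churned in data if int(days > threshold) == churned)
    return correct / len(data)


def grid_search(candidates: List[int], data: List[Tuple[int, int]]) -> Tuple[int, float]:
    """Evaluate every candidate value and return the best one with its score."""
    scored = [(accuracy(threshold, data), threshold) for threshold in candidates]
    best_score, best_threshold = max(scored, key=lambda pair: pair[0])
    return best_threshold, best_score


best_threshold, best_score = grid_search([3, 7, 10, 20], validation)
print(best_threshold, best_score)
```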

Q 26. How do you handle data migration to and from the cloud?

Data migration to and from the cloud requires careful planning and execution to ensure data integrity and minimal downtime. The approach depends on the volume and type of data, as well as the source and target systems.

- Assessment and Planning: A thorough assessment of the existing data infrastructure, data volume, and required migration timelines is crucial. This involves identifying potential challenges and developing a detailed migration plan.

- Data Extraction, Transformation, and Loading (ETL): Using ETL tools to extract data from the source, transform it to the required format, and load it into the cloud. This may involve using cloud-native ETL services or open-source tools like Apache NiFi, with Apache Kafka serving as the transport layer when changes need to be streamed continuously.

- Incremental vs. Full Migration: Choosing between a full migration (migrating all data at once) or an incremental migration (migrating data in phases) depends on the data volume and business requirements. Incremental migration is often preferred for large datasets to minimize downtime.

- Data Validation: After migration, thorough data validation is essential to ensure data integrity. This often involves data comparison and quality checks.

- Testing and Rollback Plan: A robust testing plan should be in place to identify and resolve any issues before the full migration. A rollback plan is crucial for reverting to the previous state in case of unforeseen problems.

In a recent migration project, we used a phased incremental approach, migrating data in batches to minimize disruption to the business. We used AWS DMS (Database Migration Service) for the database migration and Amazon S3 for staging and validating the data. This process ensured minimal downtime and data integrity.
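Post-migration validation typically combines a row-count check with a per-row comparison. The sketch below assumes both sides can be read as lists of dictionaries sharing an `id` key (a hypothetical schema) and fingerprints each row with SHA-256 so that mismatches can be reported without comparing every column by hand.

```python
import hashlib
import json
from typing import Any, Dict, List

Row = Dict[str, Any]


def row_fingerprint(row: Row) -> str:
    """Stable hash of a row; keys are sorted so column order does not matter."""
    canonical = json.dumps(row, sort_keys=True, default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def validate_migration(source_rows: List[Row], target_rows: List[Row],
                       key: str = "id") -> Dict[str, Any]:
    """Compare row counts and per-row fingerprints between source and target."""
    source = {row[key]: row_fingerprint(row) for row in source_rows}
    target = {row[key]: row_fingerprint(row) for row in target_rows}
    shared = source.keys() & target.keys()
    return {
        "count_match": len(source_rows) == len(target_rows),
        "missing_in_target": sorted(source.keys() - target.keys()),
        "unexpected_in_target": sorted(target.keys() - source.keys()),
        "mismatched": sorted(k for k in shared if source[k] != target[k]),
    }


# Invented sample data: row 2 was altered and row 3 was never copied.
source_rows = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": 20.0}, {"id": 3, "amount": 30.0}]
target_rows = [{"amount": 10.0, "id": 1}, {"id": 2, "amount": 25.0}]

report = validate_migration(source_rows, target_rows)
print(report)
```

For very large tables the same idea is usually applied per partition or on a sample, since hashing every row on both sides can be expensive.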

Q 27. Explain your experience with different data formats used in cloud-based data processing (e.g., JSON, Avro, Parquet).

Different data formats have strengths and weaknesses in cloud-based data processing. Choosing the right format depends on factors like schema evolution, data size, and processing requirements.

- JSON (JavaScript Object Notation): A human-readable, self-describing format ideal for small to medium-sized datasets and systems requiring flexibility. However, it can be less efficient for large-scale processing due to its text-based nature.

- Avro: A row-oriented binary format that stores its schema alongside the data and supports schema evolution, making it well-suited for large datasets and streaming pipelines where schema changes are expected. Its compact binary serialization is typically faster to read and write than JSON.

- Parquet: A columnar storage format designed for efficient querying and analysis of large datasets. Its columnar structure allows for quick access to specific columns, making it particularly suitable for analytical workloads. It’s often used in conjunction with Hadoop and Spark.

In a project involving large-scale log analysis, we opted for Parquet due to its columnar storage and efficient query capabilities. This allowed us to quickly and efficiently analyze specific aspects of the logs without processing the entire dataset. For smaller datasets where schema evolution wasn’t a concern, we used JSON for its simplicity and human readability.
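The benefit of a columnar layout can be shown with plain Python structures. The sketch below is not Parquet itself (no encoding, compression, or file format is involved); it only contrasts a row-oriented layout, where every record is parsed in full, with a column-oriented one, where a query touches only the column it needs. The log fields are invented, and `to_columnar` assumes every record has the same keys.

```python
import json
from typing import Any, Dict, List

rows = [
    {"ts": "2024-01-01T00:00:00Z", "status": 200, "bytes": 512},
    {"ts": "2024-01-01T00:00:01Z", "status": 500, "bytes": 128},
    {"ts": "2024-01-01T00:00:02Z", "status": 200, "bytes": 2048},
]


def to_columnar(records: List[Dict[str, Any]]) -> Dict[str, List[Any]]:
    """Pivot a list of row dicts into one list per column."""
    columns: Dict[str, List[Any]] = {}
    for record in records:
        for name, value in record.items():
            columns.setdefault(name, []).append(value)
    return columns


# Row-oriented (JSON Lines): the query has to parse every full record.
json_lines = "\n".join(json.dumps(row) for row in rows)
total_from_rows = sum(json.loads(line)["bytes"] for line in json_lines.splitlines())

# Column-oriented: the same query reads only the "bytes" column.
columns = to_columnar(rows)
total_from_columns = sum(columns["bytes"])

print(total_from_rows, total_from_columns)
```

Both layouts give the same answer; the difference is how much data has to be read to get it, which is what makes columnar formats attractive for analytical queries over wide tables.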

Key Topics to Learn for Cloud-Based Data Processing Interview

- Cloud Data Storage Solutions: Understand the various storage options (object storage, block storage, file storage) offered by major cloud providers, especially object stores such as Amazon S3, Azure Blob Storage, and Google Cloud Storage, along with their strengths, weaknesses, and appropriate use cases. Consider cost optimization strategies and data lifecycle management.

- Data Processing Frameworks: Gain proficiency in frameworks like Apache Spark, Hadoop, and cloud-native services such as Amazon EMR, Azure Databricks, and Google Dataproc. Practice designing and implementing data pipelines for ETL (Extract, Transform, Load) processes.

- Data Warehousing and Data Lakes: Learn the differences between data warehouses and data lakes, and when to choose one over the other. Explore cloud-based data warehouse solutions like Snowflake, Amazon Redshift, and Google BigQuery. Understand concepts like schema-on-read vs. schema-on-write.

- Serverless Computing for Data Processing: Explore serverless technologies like AWS Lambda, Azure Functions, and Google Cloud Functions for building scalable and cost-effective data processing solutions. Understand how to trigger these functions based on events.

- Data Security and Privacy: Familiarize yourself with data security best practices in the cloud, including encryption, access control, and compliance with regulations like GDPR and HIPAA. Understand data governance and data lineage.

- Big Data Analytics Techniques: Master fundamental analytical techniques applicable to large datasets, including data mining, machine learning, and statistical modeling. Understand how to leverage cloud-based machine learning platforms.

- Cost Optimization Strategies: Learn to analyze and optimize cloud-based data processing costs. Understand different pricing models and how to choose the most economical solutions for specific workloads.

- Monitoring and Logging: Understand the importance of monitoring and logging in cloud-based data processing environments. Learn how to use cloud-based monitoring tools to track performance and identify issues.

Next Steps

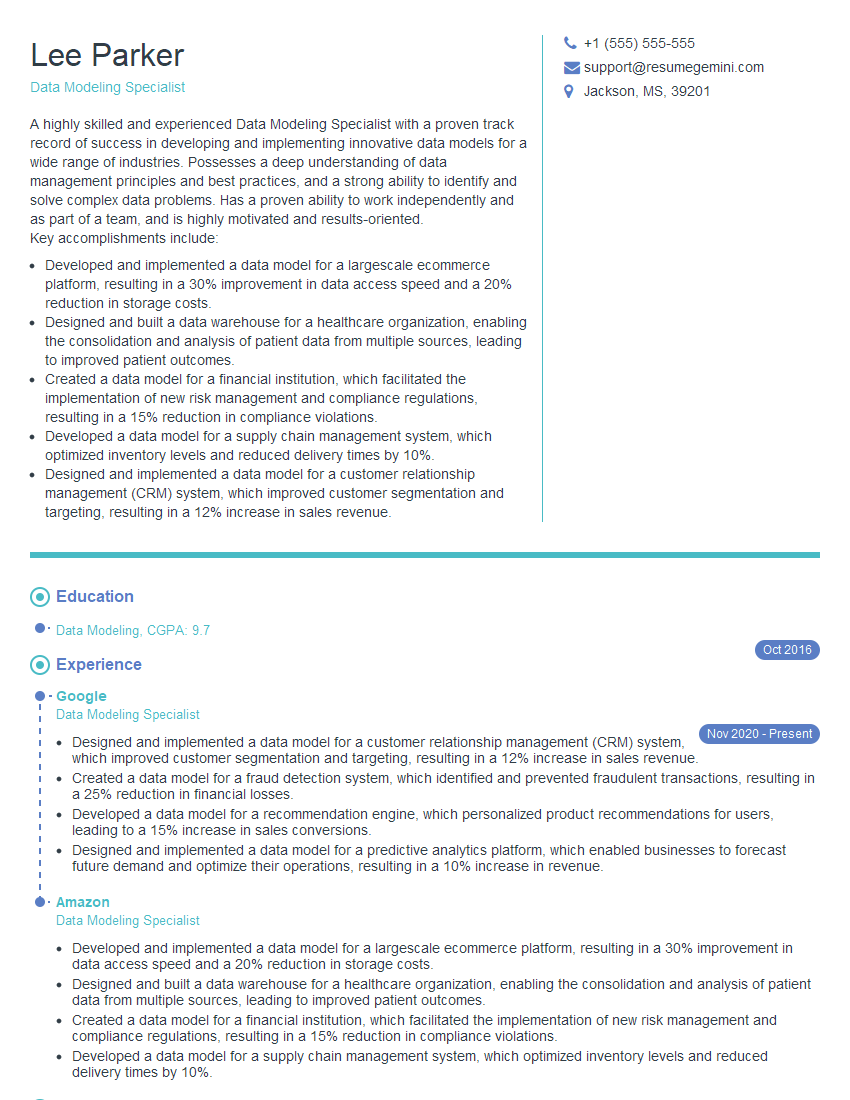

Mastering cloud-based data processing opens doors to exciting and high-demand roles. To maximize your job prospects, building a strong, ATS-friendly resume is crucial. ResumeGemini is a trusted resource that can help you craft a professional resume that highlights your skills and experience effectively. ResumeGemini provides examples of resumes tailored to Cloud-Based Data Processing roles, giving you a head start in showcasing your qualifications. Take the next step towards your dream career – create a compelling resume today!
