Preparation is the key to success in any interview. In this post, we’ll explore crucial interview questions about experience working with LiDAR and hyperspectral imagery and equip you with strategies to craft impactful answers. Whether you’re a beginner or a pro, these tips will elevate your preparation.

Questions Asked in LiDAR and Hyperspectral Imagery Interviews

Q 1. Explain the principles of LiDAR data acquisition.

LiDAR, or Light Detection and Ranging, acquires data by emitting laser pulses and measuring the time it takes for each pulse to reflect back to the sensor. Because the pulse travels to the target and back, the range is half the round-trip distance: range = (speed of light × travel time) / 2. This time-of-flight range, combined with the sensor’s position and orientation at the moment of emission, allows for the precise determination of the three-dimensional coordinates (X, Y, Z) of points on the Earth’s surface or other objects. Imagine it like a really fast, highly accurate echolocation system. A LiDAR system typically consists of a laser scanner, a GNSS receiver (e.g., GPS) for georeferencing, and an Inertial Measurement Unit (IMU) for measuring the sensor’s orientation and movement. Different types of LiDAR exist, including airborne LiDAR (mounted on aircraft), terrestrial LiDAR (ground-based), and mobile LiDAR (mounted on vehicles). The pulse repetition rate, the scanning pattern, and whether the system records a single return or multiple returns per pulse all affect the density and accuracy of the resulting point cloud. Processing the raw data involves removing noise, correcting for errors in position and orientation, and classifying the points based on their properties.
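The time-of-flight relationship can be sketched in a few lines of Python. This is a simplified illustration, not a production georeferencing model: it assumes the beam direction has already been rotated into the mapping frame using the IMU attitude, and it ignores the boresight and lever-arm corrections that real systems apply.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second (exact by definition)

def range_from_time_of_flight(round_trip_seconds: float) -> float:
    """Convert a round-trip pulse travel time to a one-way range in metres."""
    # The pulse goes to the target and back, so halve the total distance.
    return SPEED_OF_LIGHT * round_trip_seconds / 2.0

def point_from_pulse(sensor_xyz, direction, round_trip_seconds):
    """Place a return in 3D: sensor position plus range along the beam direction.

    Assumption: `direction` is already expressed in the mapping frame
    (i.e., IMU attitude applied); it is normalised here to a unit vector.
    """
    r = range_from_time_of_flight(round_trip_seconds)
    norm = math.sqrt(sum(c * c for c in direction))
    return tuple(s + r * c / norm for s, c in zip(sensor_xyz, direction))

# A sensor 1000 m above the ground looking straight down (nadir).
t = 2000.0 / SPEED_OF_LIGHT
ground_point = point_from_pulse((500.0, 200.0, 1000.0), (0.0, 0.0, -1.0), t)
```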

Q 2. Describe different LiDAR point cloud classification methods.

LiDAR point cloud classification methods categorize points based on their characteristics and context. This is crucial for extracting meaningful information. Common methods include:

- Manual Classification: This involves visually inspecting the point cloud and manually assigning classes (e.g., ground, vegetation, buildings) to individual points or groups of points. While accurate for small datasets, it’s time-consuming and impractical for large-scale projects.

- Automated Classification: Algorithms analyze point cloud properties like intensity, elevation, and neighborhood characteristics to automatically assign classes. Examples include k-Nearest Neighbors (k-NN), support vector machines (SVMs), Random Forests, and neural networks. These supervised methods are typically trained on manually classified data.

- Hybrid Classification: This combines manual and automated approaches. For instance, an automated algorithm might provide an initial classification, which is then refined manually by a human operator.

- Object-Based Image Analysis (OBIA): This approach segments the point cloud into meaningful objects (e.g., individual trees, buildings) and then classifies these objects based on their features. This method is particularly useful for extracting features with complex shapes.

The choice of method depends on factors like the size of the dataset, the desired level of accuracy, and the available resources.
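As a minimal sketch of automated classification, the k-NN approach mentioned above can be written with NumPy. The two per-point features (height above ground and normalized intensity), the training samples, and the use of ASPRS class codes 2 (ground), 5 (high vegetation), and 6 (building) are illustrative assumptions; real workflows use many more features and a spatial index instead of brute-force distances.

```python
import numpy as np

def standardize(train, query):
    """Scale features to zero mean and unit variance using training statistics."""
    mean, std = train.mean(axis=0), train.std(axis=0)
    return (train - mean) / std, (query - mean) / std

def knn_classify(train_features, train_labels, query_features, k=3):
    """Label each query point by majority vote among its k nearest training points."""
    train, query = standardize(train_features, query_features)
    # Brute-force pairwise Euclidean distances, shape (n_query, n_train).
    dists = np.sqrt(((query[:, None, :] - train[None, :, :]) ** 2).sum(axis=2))
    nearest = np.argsort(dists, axis=1)[:, :k]
    # bincount counts votes per class code; argmax picks the most frequent one.
    return np.array([int(np.argmax(np.bincount(train_labels[row]))) for row in nearest])

# Hypothetical training points: [height above ground (m), normalized intensity]
train = np.array([[0.1, 0.30], [0.2, 0.35], [0.0, 0.32],     # ground
                  [12.0, 0.10], [15.0, 0.12], [10.0, 0.08],  # high vegetation
                  [8.0, 0.60], [9.0, 0.65], [7.5, 0.62]])    # building
labels = np.array([2, 2, 2, 5, 5, 5, 6, 6, 6])
query = np.array([[0.15, 0.33], [11.0, 0.10], [8.2, 0.63]])
predicted = knn_classify(train, labels, query, k=3)
```

Standardizing first matters because otherwise height (metres) would swamp intensity (0 to 1) in the distance calculation.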

Q 3. How do you handle noise and outliers in LiDAR data?

Noise and outliers in LiDAR data are common problems. Noise typically arises from atmospheric effects, sensor inaccuracies, or reflections from unexpected sources. Outliers are points that deviate significantly from their surroundings and are often caused by errors in data acquisition or processing. Several techniques help handle these issues:

- Filtering: Spatial filters, such as median or moving-average filters, smooth the point cloud and suppress noise. Statistical outlier filters compute each point’s mean distance to its k nearest neighbors and remove points whose mean distance deviates strongly from the average across the cloud.

- Segmentation and Classification: Robust segmentation algorithms can isolate noisy or outlier points, making them easier to remove or correct.

- Data editing and quality control: Visual inspection and manual editing are often essential to remove obvious errors and outliers. Software tools provide functionalities for selecting and deleting points or adjusting their coordinates.

- Ground filtering: Specialized algorithms separate ground points from non-ground points, either to build terrain models or to isolate above-ground objects of interest. This is crucial for various applications, such as detecting vegetation or buildings.

The selection of appropriate noise and outlier removal techniques requires careful consideration of the dataset and the application.
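The statistical outlier filter described above can be sketched as follows. This version uses brute-force distances, so it is only suitable for small demonstrations; the parameter names `k` and `std_ratio` and their defaults are illustrative choices, and large clouds would need a KD-tree or a tool such as PDAL’s `filters.outlier`.

```python
import numpy as np

def statistical_outlier_removal(points, k=8, std_ratio=2.0):
    """Return a boolean mask that keeps points whose mean k-NN distance is typical."""
    # Pairwise Euclidean distances between all points (O(n^2) memory).
    dists = np.sqrt(((points[:, None, :] - points[None, :, :]) ** 2).sum(axis=2))
    # Column 0 of each sorted row is the point itself (distance 0), so skip it.
    knn_dists = np.sort(dists, axis=1)[:, 1:k + 1]
    mean_knn = knn_dists.mean(axis=1)
    # Points farther than mean + std_ratio * std from their neighbours are outliers.
    threshold = mean_knn.mean() + std_ratio * mean_knn.std()
    return mean_knn <= threshold

# A thin slab of synthetic points plus one isolated return high above it.
rng = np.random.default_rng(0)
cloud = rng.uniform(0, 10, (200, 3))
cloud[:, 2] *= 0.1
cloud = np.vstack([cloud, [5.0, 5.0, 50.0]])
keep = statistical_outlier_removal(cloud)
cleaned = cloud[keep]
```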

Q 4. What are the advantages and disadvantages of using LiDAR compared to traditional surveying techniques?

LiDAR offers several advantages over traditional surveying techniques, primarily in speed, coverage, and data density. Traditional methods, such as total stations or GNSS surveys, are more labor-intensive and time-consuming, particularly for large areas, although they can deliver higher accuracy at individual points. LiDAR can acquire massive amounts of high-density point cloud data quickly, resulting in detailed 3D models and terrain information.

Advantages of LiDAR:

- High accuracy and precision: Typically provides centimeter-level accuracy, depending on the platform, flying height, and georeferencing quality.

- High data density: Captures a large number of points, allowing for detailed representation of the environment.

- Rapid data acquisition: Collects data quickly, particularly for large areas.

- Operates in various conditions: As an active sensor, it can collect data day or night, although rain, fog, snow, and dust degrade performance.

Disadvantages of LiDAR:

- High cost: LiDAR equipment and data processing can be expensive.

- Data processing complexity: Requires specialized software and expertise to process the large volume of data.

- Limited penetration: Laser pulses may not penetrate dense vegetation or water effectively.

- Safety considerations: Safety precautions, such as observing laser eye-safety limits, are necessary during data acquisition.

Ultimately, the choice between LiDAR and traditional methods depends on the specific project needs, budget, and required level of detail.

Q 5. Explain the concept of hyperspectral imaging and its applications.

Hyperspectral imaging captures images across a continuous spectrum of wavelengths, providing detailed spectral information for each pixel. Unlike traditional RGB imagery, which uses only three broad bands (red, green, blue), hyperspectral imaging utilizes hundreds or even thousands of narrow, contiguous spectral bands. This allows for the identification of subtle spectral variations that are invisible to the human eye. Think of it as having a much finer ‘color’ palette to analyze the world around us. Each object or material has a characteristic spectral signature that can be used for identification and classification.

Applications of Hyperspectral Imaging include:

- Precision agriculture: Monitoring crop health, identifying nutrient deficiencies, and detecting diseases.

- Environmental monitoring: Mapping vegetation types, assessing water quality, detecting pollution, and monitoring deforestation.

- Geology and mining: Identifying minerals and mapping potential ore deposits.

- Defense and security: Object detection and identification, target recognition.

- Medical imaging: Tissue classification and disease diagnosis.

Q 6. Describe different preprocessing steps for hyperspectral imagery.

Preprocessing hyperspectral imagery is essential to improve data quality and enhance subsequent analysis. Key preprocessing steps include:

- Radiometric calibration: Converting raw digital numbers (DNs) to physically meaningful units (e.g., radiance, reflectance). This corrects for sensor-specific variations.

- Geometric correction: Correcting for geometric distortions caused by sensor movements or other factors. This ensures accurate spatial alignment.

- Atmospheric correction: Removing the effects of the atmosphere, such as scattering and absorption, to obtain true surface reflectance.

- Noise reduction: Filtering out noise using techniques such as wavelet denoising or principal component analysis (PCA).

- Data transformation: Applying transformations like PCA or Minimum Noise Fraction (MNF) to reduce dimensionality and enhance the signal-to-noise ratio.

The specific preprocessing steps chosen depend on the application, data quality, and the available resources. Proper preprocessing significantly influences the accuracy and reliability of downstream analyses.
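Radiometric calibration is usually a per-band linear conversion, which makes it easy to sketch. The gain and offset values below are hypothetical; real coefficients (and their units, commonly W m⁻² sr⁻¹ µm⁻¹) come from the sensor’s calibration file. The second function converts radiance to top-of-atmosphere reflectance with the standard formula ρ = π·L·d² / (E_sun·cos θs); note that this is still not surface reflectance, which requires atmospheric correction.

```python
import numpy as np

def dn_to_radiance(dn_cube, gains, offsets):
    """Per-band linear calibration: radiance = gain * DN + offset.

    dn_cube has shape (rows, cols, bands); gains and offsets have shape (bands,).
    """
    dn = dn_cube.astype(np.float64)
    # Broadcasting applies each band's coefficients along the last axis.
    return dn * gains + offsets

def radiance_to_toa_reflectance(radiance, esun, earth_sun_distance_au, solar_zenith_deg):
    """Top-of-atmosphere reflectance from radiance and solar geometry."""
    cos_sz = np.cos(np.deg2rad(solar_zenith_deg))
    return np.pi * radiance * earth_sun_distance_au ** 2 / (esun * cos_sz)

# Hypothetical 3-band calibration coefficients.
gains = np.array([0.1, 0.2, 0.3])
offsets = np.array([1.0, 0.0, -1.0])
radiance = dn_to_radiance(np.ones((2, 2, 3), dtype=np.uint16), gains, offsets)
```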

Q 7. How do you perform atmospheric correction on hyperspectral data?

Atmospheric correction is crucial for hyperspectral data because atmospheric effects can significantly distort the spectral signatures of surface features. These effects include absorption and scattering of light by gases (like water vapor and ozone) and aerosols (like dust and smoke). Several methods are used for atmospheric correction:

- Empirical Line Methods: These methods use field-measured reflectance of calibration targets (typically at least one dark and one bright target) to derive a linear, per-band relationship between at-sensor radiance and surface reflectance.

- Physical Models: These methods use radiative transfer models to simulate atmospheric effects and correct for them. MODTRAN (MODerate resolution atmospheric TRANsmission) is a commonly used model, and FLAASH (Fast Line-of-sight Atmospheric Analysis of Spectral Hypercubes) is a widely used correction algorithm built on it.

- Image-Based Methods: Methods like dark object subtraction, flat field correction, and internal average relative reflectance (IARR) are simpler but might be less accurate than physical models for complex atmospheric conditions.

The choice of method depends on the availability of ancillary data (e.g., atmospheric profiles), the complexity of the atmospheric conditions, and the desired accuracy. Proper atmospheric correction ensures that the spectral information accurately represents surface features and improves the accuracy of subsequent analyses, such as material classification or vegetation indices calculation.
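The empirical line method reduces to fitting one straight line per band. This sketch assumes you already have mean image radiance and field-measured reflectance for at least two calibration targets; the target values below are synthetic and chosen so the fit is exact.

```python
import numpy as np

def empirical_line_fit(target_radiance, target_reflectance):
    """Fit reflectance = gain * radiance + offset independently for each band.

    Both inputs have shape (n_targets, bands) with n_targets >= 2.
    """
    n_bands = target_radiance.shape[1]
    gains, offsets = np.empty(n_bands), np.empty(n_bands)
    for b in range(n_bands):
        # np.polyfit with degree 1 returns [slope, intercept].
        gains[b], offsets[b] = np.polyfit(target_radiance[:, b], target_reflectance[:, b], 1)
    return gains, offsets

def apply_empirical_line(radiance_cube, gains, offsets):
    """Convert a (rows, cols, bands) radiance cube to estimated surface reflectance."""
    return radiance_cube * gains + offsets

# Synthetic dark (5%) and bright (50%) targets observed in two bands.
radiance_targets = np.array([[10.0, 12.5], [55.0, 35.0]])
reflectance_targets = np.array([[0.05, 0.05], [0.50, 0.50]])
gains, offsets = empirical_line_fit(radiance_targets, reflectance_targets)
surface = apply_empirical_line(np.array([[[30.0, 22.5]]]), gains, offsets)
```

Using more than two targets turns the fit into a true least-squares regression, which makes the correction less sensitive to measurement error at any single target.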

Q 8. What are some common methods for feature extraction from hyperspectral images?

Feature extraction from hyperspectral images involves identifying and quantifying meaningful information from the vast amount of spectral data. Think of it like sifting through a mountain of sand to find gold nuggets – those nuggets are the features.

Common methods fall into several categories:

- Spectral Indices: These are simple calculations combining reflectance values at different wavelengths to highlight specific phenomena. For example, the Normalized Difference Vegetation Index (NDVI) uses near-infrared and red bands to estimate vegetation health. It’s a classic and computationally efficient method.

- Spectral Transformations: Techniques like Principal Component Analysis (PCA) reduce data dimensionality by identifying principal components that capture most of the variance in the data. This simplifies analysis and can reveal hidden patterns. Imagine condensing a complex dataset into a more manageable summary.

- Derivative Analysis: Calculating the first or second derivatives of the spectral reflectance curves enhances subtle spectral features, often revealing details not apparent in the original data. It’s like zooming in on a fine detail in a picture.

- Target Detection Algorithms: Methods like matched filtering use known spectral signatures to locate specific materials or objects within the image. It’s like searching for a specific type of sand grain using a template.

- Machine Learning Techniques: Algorithms such as Support Vector Machines (SVMs) and Random Forests can be trained on labeled data to extract complex features that might not be apparent through simpler methods. They learn intricate patterns within the data, offering great flexibility.

The choice of method depends on the specific application and the type of features of interest. For example, in precision agriculture, NDVI might be sufficient for assessing vegetation vigor, while more complex techniques are required for identifying specific crop diseases based on their subtle spectral characteristics.
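Two of the simpler techniques above, spectral indices and derivative analysis, are sketched below. The red and near-infrared band centres (around 670 nm and 800 nm) are common but assumed choices; each sensor’s band list determines which bands are actually used.

```python
import numpy as np

def ndvi(nir, red, eps=1e-12):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    # eps guards against division by zero over very dark pixels.
    return (nir - red) / (nir + red + eps)

def nearest_band(wavelengths_nm, target_nm):
    """Index of the band whose centre wavelength is closest to target_nm."""
    return int(np.argmin(np.abs(np.asarray(wavelengths_nm) - target_nm)))

def first_derivative(spectrum, wavelengths_nm):
    """dR/d(lambda) via central differences; handles uneven band spacing."""
    return np.gradient(np.asarray(spectrum, dtype=float), np.asarray(wavelengths_nm, dtype=float))

wavelengths = [650.0, 670.0, 700.0, 800.0]
pixel = np.array([0.08, 0.05, 0.20, 0.45])
red_idx = nearest_band(wavelengths, 670.0)
nir_idx = nearest_band(wavelengths, 800.0)
vegetation_index = ndvi(pixel[nir_idx], pixel[red_idx])
slope = first_derivative(pixel, wavelengths)
```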

Q 9. Explain the difference between supervised and unsupervised classification techniques for hyperspectral data.

The key difference between supervised and unsupervised classification of hyperspectral data lies in the availability of labeled training data.

Supervised classification requires a labeled dataset where each pixel (or a group of pixels) is associated with a known class label (e.g., vegetation type, mineral composition). Think of it as learning to identify different types of animals with labeled photographs – you have examples to learn from.

Algorithms like Support Vector Machines (SVMs), Random Forests, and Maximum Likelihood Classification are commonly used. The algorithm learns patterns from the labeled data and then applies these patterns to classify unlabeled pixels. The accuracy depends heavily on the quality and quantity of training data.

Unsupervised classification, on the other hand, doesn’t require labeled data. The algorithm attempts to group pixels based on their spectral similarity without prior knowledge of the classes. Think of clustering similar flowers together without knowing their names – you group based on visual similarities.

K-means clustering and hierarchical clustering are popular methods. The resulting classes are interpreted after the analysis, which can be challenging and often requires additional contextual information or ground truthing. Unsupervised methods are useful when labeled data is scarce or expensive to obtain, but the results may be less accurate and require more interpretation.
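K-means clustering, the unsupervised method named above, can be sketched with NumPy. For reproducibility this version seeds the centres with a simple farthest-first heuristic rather than random or k-means++ initialization; that choice is an assumption of the sketch, and farthest-first seeding is sensitive to outliers in real imagery.

```python
import numpy as np

def kmeans(pixels, k, n_iter=50, seed=0):
    """Cluster pixel spectra (n_pixels, bands) into k groups with Lloyd's algorithm."""
    rng = np.random.default_rng(seed)
    centers = [pixels[rng.integers(len(pixels))]]
    for _ in range(1, k):
        # Farthest-first seeding: pick the pixel farthest from all chosen centres.
        d = np.min([((pixels - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(pixels[d.argmax()])
    centers = np.array(centers, dtype=float)
    for _ in range(n_iter):
        # Assign every pixel to its nearest centre in spectral space.
        d = ((pixels[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d.argmin(axis=1)
        # Move each centre to the mean spectrum of its members (keep empty ones).
        new_centers = np.array([
            pixels[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers

# Two synthetic spectral groups (e.g., a dark and a bright surface).
rng = np.random.default_rng(0)
dark = 0.1 + rng.normal(0, 0.02, (20, 3))
bright = 0.8 + rng.normal(0, 0.02, (20, 3))
labels, centers = kmeans(np.vstack([dark, bright]), k=2)
```

As the text notes, the resulting cluster numbers carry no meaning by themselves; an analyst still has to interpret each cluster, for example by comparing its centre spectrum with a spectral library.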

Q 10. How do you evaluate the accuracy of your LiDAR and hyperspectral data analysis?

Accuracy evaluation is crucial for ensuring the reliability of LiDAR and hyperspectral data analysis. It often involves a multi-faceted approach.

For LiDAR data, accuracy assessment typically focuses on:

- Point cloud completeness: How much of the surveyed area is actually covered by point cloud data.

- Geometric accuracy: Comparison with ground truth data (e.g., surveyed GPS coordinates of known points) to evaluate positional errors, usually summarized as horizontal and vertical root-mean-square error (RMSE).

- Classification accuracy: If the point cloud is classified (e.g., ground, vegetation, buildings), the accuracy of classification is assessed using confusion matrices, precision, and recall.

For hyperspectral data, accuracy assessment often focuses on:

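- Classification accuracy: Using confusion matrices, overall accuracy, producer’s accuracy (the fraction of reference samples of a class that were correctly classified, i.e., 1 minus the omission error), and user’s accuracy (the fraction of pixels assigned to a class that truly belong to it, i.e., 1 minus the commission error).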

- Spectral accuracy: How well the detected spectral signatures match the actual spectral characteristics of materials.

- Spatial accuracy: How well the locations of features are mapped in the final analysis.

Combining LiDAR and hyperspectral data adds another layer of complexity. We often evaluate the accuracy of fusion results by comparing them against ground truth data or through independent validation methods. This might involve a combination of the above-mentioned metrics, depending on the specific application.

In summary, accuracy assessment is not a single metric but rather a holistic evaluation based on relevant metrics tailored to the specific project and data types involved.
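The classification and geometric metrics listed above can be computed from a confusion matrix and a set of checkpoints. This sketch uses the convention rows = reference class, columns = predicted class; conventions differ between software packages, so it is worth checking which one a tool uses. Cohen’s kappa is included because it is still commonly reported, not as a recommendation.

```python
import numpy as np

def confusion_matrix(reference, predicted, n_classes):
    """Rows are reference (ground truth) classes, columns are predicted classes."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for r, p in zip(reference, predicted):
        cm[r, p] += 1
    return cm

def accuracy_report(cm):
    """Overall, producer's, and user's accuracy plus Cohen's kappa."""
    total = cm.sum()
    diag = np.diag(cm)
    overall = diag.sum() / total
    producers = diag / cm.sum(axis=1)  # per reference class: 1 - omission error
    users = diag / cm.sum(axis=0)      # per predicted class: 1 - commission error
    # Chance agreement expected from the row and column totals.
    expected = (cm.sum(axis=1) * cm.sum(axis=0)).sum() / total ** 2
    kappa = (overall - expected) / (1 - expected)
    return overall, producers, users, kappa

def rmse(measured, reference):
    """Root-mean-square error, e.g., LiDAR elevations vs. surveyed checkpoints."""
    diff = np.asarray(measured, float) - np.asarray(reference, float)
    return float(np.sqrt(np.mean(diff ** 2)))

cm = confusion_matrix([0, 0, 0, 1, 1, 1], [0, 0, 1, 1, 1, 1], n_classes=2)
overall, producers, users, kappa = accuracy_report(cm)
```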

Q 11. Describe your experience with different LiDAR data processing software (e.g., LAStools, PDAL).

I have extensive experience working with various LiDAR processing software packages. My work has primarily focused on LAStools and PDAL, two widely used tools known for their efficiency and flexibility.

LAStools is a powerful suite of command-line tools known for its speed and efficiency, especially when dealing with large datasets. I’ve used it extensively for tasks like filtering, classification, and creating different point cloud derivatives. For example, I used lasground to classify ground points from airborne LiDAR data to generate a Digital Terrain Model (DTM). The command-line interface takes some getting used to, but it is very fast and well suited to batch processing.

PDAL (Point Data Abstraction Library) takes a more flexible, modern approach built around processing pipelines, which lets complex workflows chain multiple algorithms in a single definition. I’ve found PDAL particularly useful for working with diverse data formats and integrating LiDAR data with other geospatial datasets. For instance, I used PDAL to filter noisy points and then perform georeferencing using a high-accuracy control point file.

My experience spans both individual tool application and the creation of custom scripts for automated processing of large datasets. I am adept at optimizing processing workflows to maximize efficiency and accuracy.
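To illustrate the pipeline idea, here is a sketch that builds a PDAL pipeline definition as JSON from Python. The stages (`filters.outlier`, `filters.range`, `filters.smrf`) are standard PDAL filters, but the parameter values and file names are illustrative assumptions, not tuned settings. By default `filters.outlier` tags outliers with class 7 rather than deleting them, which is why a range filter drops that class before ground classification.

```python
import json

def build_ground_pipeline(in_path, out_path, mean_k=8, multiplier=3.0):
    """Return a PDAL pipeline: remove statistical outliers, classify ground with
    SMRF, and keep only ground points (ASPRS class 2).

    Readers and writers are inferred by PDAL from the file extensions.
    """
    return {
        "pipeline": [
            in_path,
            {"type": "filters.outlier", "method": "statistical",
             "mean_k": mean_k, "multiplier": multiplier},
            # Drop points tagged as noise (class 7) by the outlier filter.
            {"type": "filters.range", "limits": "Classification![7:7]"},
            {"type": "filters.smrf"},
            # Keep ground only.
            {"type": "filters.range", "limits": "Classification[2:2]"},
            out_path,
        ]
    }

# Hypothetical file names; write the JSON and run it with `pdal pipeline ground.json`.
pipeline_json = json.dumps(build_ground_pipeline("input.laz", "ground.laz"), indent=2)
```

Keeping the pipeline in a function makes it easy to generate one definition per tile for batch processing; if the PDAL Python bindings are installed, the same JSON string can be passed to `pdal.Pipeline(...)` and executed.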

Q 12. What are your experiences with different hyperspectral image processing software (e.g., ENVI, ArcGIS)?

My experience with hyperspectral image processing software is primarily with ENVI and ArcGIS. Both offer powerful capabilities, but they cater to slightly different workflows and strengths.

ENVI is a dedicated hyperspectral image processing software package. It provides a comprehensive suite of tools for atmospheric correction, spectral unmixing, classification, and other advanced analyses. I have leveraged ENVI’s capabilities for tasks such as creating custom spectral indices, performing PCA and derivative analyses, and training supervised classifiers. One specific project involved using ENVI to identify invasive plant species through their unique spectral signatures in a large agricultural area.

ArcGIS, while not solely dedicated to hyperspectral data, offers excellent integration with other geospatial data. I’ve utilized ArcGIS for georeferencing and mosaicking hyperspectral imagery, integrating it with LiDAR data for 3D visualization and analysis. Its geoprocessing tools are invaluable for spatial analysis and for managing large datasets and integrating them into GIS workflows. For example, in a recent project, we used ArcGIS to overlay hyperspectral classification maps on LiDAR-derived elevation data to assess the spatial distribution of different vegetation types in relation to elevation.

My proficiency extends to using both packages’ scripting capabilities (IDL for ENVI, Python for ArcGIS) to automate processing workflows and customize analyses.

Q 13. Explain your experience with point cloud registration and georeferencing.

Point cloud registration and georeferencing are crucial steps in LiDAR data processing. Registration involves aligning multiple point clouds acquired from different viewpoints or times, while georeferencing involves assigning real-world coordinates (latitude, longitude, elevation) to the points.

Registration techniques range from simple methods such as manual alignment based on visually identifiable features to sophisticated algorithms like Iterative Closest Point (ICP). ICP is computationally intensive but can achieve high accuracy in aligning datasets. I’ve extensively used ICP algorithms, fine-tuning parameters such as the tolerance and maximum iterations to optimize alignment accuracy based on the specific dataset characteristics and overlap between point clouds.
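The core of ICP can be sketched in NumPy: match each source point to its nearest target point, solve for the best rigid transform with the SVD-based (Kabsch) method, apply it, and repeat. This is a minimal point-to-point variant with brute-force matching; the `max_iterations` and `tolerance` names mirror the parameters mentioned above but are this sketch’s own, and production tools add KD-trees, outlier rejection, and often point-to-plane error metrics.

```python
import numpy as np

def best_rigid_transform(src, dst):
    """Least-squares rotation R and translation t mapping src onto dst (Kabsch/SVD)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    R = Vt.T @ U.T
    if np.linalg.det(R) < 0:  # guard against a reflection
        Vt[-1, :] *= -1
        R = Vt.T @ U.T
    t = dst_c - R @ src_c
    return R, t

def icp(source, target, max_iterations=50, tolerance=1e-8):
    """Point-to-point ICP with brute-force nearest neighbours (small clouds only)."""
    current = source.copy()
    prev_error = np.inf
    for _ in range(max_iterations):
        d = ((current[:, None, :] - target[None, :, :]) ** 2).sum(axis=2)
        nn = d.argmin(axis=1)
        error = np.sqrt(d[np.arange(len(current)), nn]).mean()
        if abs(prev_error - error) < tolerance:
            break  # mean nearest-neighbour distance stopped improving
        prev_error = error
        R, t = best_rigid_transform(current, target[nn])
        current = current @ R.T + t
    # Recover the overall transform from the original to the aligned cloud.
    R_total, t_total = best_rigid_transform(source, current)
    return R_total, t_total, current
```

The same `best_rigid_transform` function also covers the case where correspondences are already known, such as matched control points, in which case no iteration is needed.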

Georeferencing often relies on ground control points (GCPs), which are points with known coordinates obtained through GPS surveys. These GCPs are identified within the LiDAR point cloud and used to transform the point cloud into a geospatial coordinate system. The accuracy of georeferencing depends directly on the accuracy, number, and spatial distribution of the GCPs used. I’ve worked on projects requiring high-accuracy georeferencing (centimeter-level) where careful GCP selection and rigorous quality control were essential. In some instances, we have used direct georeferencing, which relies on the sensor platform’s onboard GNSS and IMU measurements recorded during data acquisition.

My experience includes handling various challenges, such as large datasets and noisy data, using appropriate software tools and algorithms to ensure accurate registration and georeferencing. I also frequently assess the accuracy of the georeferencing process through post-processing quality control checks and independent validation.

Q 14. How do you handle large LiDAR datasets?

Handling large LiDAR datasets requires strategic planning and the use of efficient tools and techniques. Simply loading the entire dataset into memory is often impractical or impossible.

My approach involves a combination of techniques:

- Data tiling and processing: Dividing the large dataset into smaller, manageable tiles for processing. This allows parallel processing and significantly reduces memory requirements. This is especially useful when dealing with datasets exceeding available RAM.

- Efficient data formats: Using compressed formats such as LAZ (losslessly compressed LAS) to minimize storage space and improve I/O performance.

- Cloud-based computing: Utilizing cloud computing platforms (e.g., AWS, Google Cloud) for processing large datasets. These platforms offer scalability and readily available computational resources.

- Optimized algorithms: Employing algorithms and software packages designed for efficient handling of large datasets. LAStools and PDAL (as previously discussed) are prime examples of tools that are highly optimized for large-scale LiDAR processing.

- Data filtering and downsampling: Before conducting in-depth analysis, it is often crucial to filter the data to remove noise and outliers. Downsampling, which selectively reduces point density, can cut processing time significantly, particularly when high-resolution analysis is not essential for a specific task.

The choice of specific techniques depends heavily on the project requirements, the available computational resources, and the desired level of detail in the analysis. Effective planning is paramount to ensure efficient and reliable processing of large LiDAR datasets.
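Tiling and downsampling can both be expressed as grouping points by integer grid keys. The sketch below assigns points to square XY tiles and performs voxel-grid downsampling that keeps one centroid per occupied voxel. The tile and voxel sizes are arbitrary assumptions; in practice tiles are usually processed with a small buffer of overlapping points so that neighbourhood-based operations do not create edge artifacts.

```python
from collections import defaultdict

import numpy as np

def tile_indices(xy, tile_size):
    """Group point indices by the square tile (column, row) that contains them."""
    keys = np.floor(xy / tile_size).astype(np.int64)
    tiles = defaultdict(list)
    for i, (cx, cy) in enumerate(keys):
        tiles[(int(cx), int(cy))].append(i)
    return {key: np.array(idx) for key, idx in tiles.items()}

def voxel_downsample(points, voxel_size):
    """Replace all points inside each occupied voxel by their centroid."""
    keys = np.floor(points / voxel_size).astype(np.int64)
    # The inverse index maps each point to its (sorted) unique voxel.
    _, inverse = np.unique(keys, axis=0, return_inverse=True)
    inverse = inverse.ravel()  # flatten for consistency across NumPy versions
    n_voxels = inverse.max() + 1
    sums = np.zeros((n_voxels, points.shape[1]))
    np.add.at(sums, inverse, points)
    counts = np.bincount(inverse, minlength=n_voxels)
    return sums / counts[:, None]

tiles = tile_indices(np.array([[5.0, 5.0], [15.0, 5.0], [5.0, 15.0], [6.0, 7.0]]), 10.0)
thinned = voxel_downsample(np.array([[0.1, 0.1, 0.1], [0.2, 0.2, 0.2], [1.5, 0.1, 0.1]]), 1.0)
```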

Q 15. Describe your experience with different coordinate systems and projections.

Coordinate systems and projections are fundamental in geospatial data processing. A coordinate reference system defines how positions on the Earth are expressed, and a map projection defines how the curved surface is flattened onto a 2D plane. My experience encompasses a wide range, from geographic coordinate systems like latitude and longitude (WGS84 being a common example), to projected coordinate systems like UTM (Universal Transverse Mercator) and State Plane Coordinate Systems. The choice of coordinate system depends heavily on the project’s spatial extent and desired accuracy. For instance, for a large-scale project spanning multiple countries, a geographic coordinate system like WGS84 is often more appropriate, while for smaller, regional projects, a projected coordinate system like UTM minimizes distortion within a specific zone. I’m proficient in using software such as ArcGIS Pro and QGIS to perform coordinate system transformations and handle projections, ensuring data consistency and accuracy across different datasets.

For example, I worked on a project mapping a large forest reserve. Initially, the LiDAR data was in a local coordinate system, while the land ownership boundaries were in UTM. I successfully transformed the LiDAR data to UTM using appropriate georeferencing techniques to accurately overlay the forest cover data onto the land boundaries. This was crucial for assessing deforestation and managing conservation efforts.
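A common first step when moving WGS84 data into UTM is picking the right zone. The sketch below computes the EPSG code of the standard 6° WGS 84 / UTM zone (326xx north, 327xx south) for a longitude/latitude pair. It deliberately ignores the special zone exceptions around Norway and Svalbard.

```python
import math

def utm_epsg_for(lon_deg, lat_deg):
    """EPSG code of the WGS 84 / UTM zone containing a point (standard 6° zones).

    Simplification: ignores the irregular zones around Norway and Svalbard.
    """
    if not -80.0 <= lat_deg <= 84.0:
        raise ValueError("UTM is defined only between 80°S and 84°N")
    # Zone 1 starts at 180°W; the modulo keeps lon = 180 in zone 1.
    zone = int(math.floor((lon_deg + 180.0) / 6.0)) % 60 + 1
    return (32600 if lat_deg >= 0 else 32700) + zone

san_francisco = utm_epsg_for(-122.4, 37.8)
sydney = utm_epsg_for(151.2, -33.9)
```

The actual reprojection would then typically be done with a library such as pyproj, for example `Transformer.from_crs("EPSG:4326", f"EPSG:{code}", always_xy=True)` followed by `.transform(lon, lat)`, or with the GIS tools mentioned above.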

Q 16. Explain your understanding of different LiDAR sensor types and their applications.

LiDAR sensors come in various types, each with specific applications. The main categories are airborne, terrestrial, and mobile LiDAR. Airborne LiDAR, mounted on aircraft, provides large-scale data acquisition, ideal for creating DEMs, mapping terrain, and analyzing vegetation cover across vast areas. Terrestrial LiDAR, deployed on the ground, offers high-accuracy data over smaller areas, perfect for detailed 3D modeling of buildings, bridges, or archaeological sites. Mobile LiDAR, mounted on vehicles, provides a cost-effective solution for capturing data along roads and linear features, often used for infrastructure management and urban planning. Within these categories, there are further distinctions based on the waveform processing capabilities (full-waveform vs. discrete-return) and the scanning technology. Full-waveform LiDAR offers superior information regarding target characteristics, while discrete-return systems are often simpler and cheaper to process. The choice of LiDAR type hinges on the project’s specific needs, budget, and desired level of detail.

For instance, I used airborne LiDAR to generate a high-resolution DEM of a mountainous region for flood risk assessment. The resulting DEM, combined with hydrological modeling software, accurately predicted areas vulnerable to flooding, helping local authorities allocate resources for mitigation.

Q 17. How do you interpret different spectral signatures in hyperspectral imagery?

Hyperspectral imagery captures hundreds of narrow, contiguous spectral bands, allowing for detailed analysis of material properties. Interpreting spectral signatures involves identifying unique reflectance patterns across different wavelengths for various materials. Each material has a characteristic spectral signature; for example, vegetation shows strong reflectance in the near-infrared region, while water shows low reflectance across most bands. Specialized software like ENVI or ArcGIS is employed for spectral analysis, involving techniques such as spectral unmixing to identify the proportions of different materials in a mixed pixel. Furthermore, spectral libraries and indices (like NDVI for vegetation) assist in the identification and quantification of different features.

In one project, I used hyperspectral imagery to differentiate between different types of vegetation in a wetland ecosystem. By analyzing the spectral signatures in the visible and near-infrared regions, I was able to accurately map the distribution of various plant species, which was crucial for understanding ecosystem health and biodiversity.
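Spectral unmixing, mentioned above, is often based on a linear mixing model in which a pixel spectrum is a weighted sum of endmember spectra. This sketch solves for the weights (abundances) by least squares and enforces the sum-to-one constraint softly by appending a heavily weighted row of ones. It does not enforce non-negativity; fully constrained least squares (FCLS) handles both constraints. The endmember spectra are made up for illustration.

```python
import numpy as np

def unmix_sum_to_one(pixel, endmembers, weight=1e3):
    """Estimate endmember abundances for one pixel under the linear mixing model.

    endmembers: (bands, n_endmembers); pixel: (bands,).
    The weighted row of ones pushes the abundances to sum to one;
    non-negativity is NOT enforced in this simplified sketch.
    """
    bands, m = endmembers.shape
    A = np.vstack([endmembers, weight * np.ones((1, m))])
    b = np.concatenate([pixel, [weight]])
    abundances, *_ = np.linalg.lstsq(A, b, rcond=None)
    return abundances

# Hypothetical 4-band endmembers (columns), e.g., vegetation and bare soil.
E = np.array([[0.05, 0.40],
              [0.08, 0.45],
              [0.45, 0.30],
              [0.50, 0.35]])
mixed_pixel = E @ np.array([0.3, 0.7])
fractions = unmix_sum_to_one(mixed_pixel, E)
```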

Q 18. Describe your experience with creating digital elevation models (DEMs) from LiDAR data.

Creating DEMs from LiDAR data is a crucial step in many geospatial applications. The process generally involves several steps. First, the raw LiDAR point cloud data needs to be cleaned and processed to remove noise and outliers. Next, the data is classified to distinguish ground points from other features (vegetation, buildings, etc.). Various algorithms are used for ground classification, including progressive TIN densification and filtering techniques. Once the ground points are identified, an interpolation method such as kriging, inverse distance weighting (IDW), or triangulation (TIN) is used to create a continuous surface model. Finally, the DEM is assigned the correct coordinate reference system and exported in the required format.

In a recent project, I used LAStools to preprocess the LiDAR data and then employed ArcGIS Pro’s interpolation tools to create a high-resolution DEM. I compared different interpolation methods to find the optimal one based on the accuracy and computational efficiency. The final DEM was then used for terrain analysis, slope mapping, and volume calculations.
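Of the interpolation methods listed above, inverse distance weighting is the easiest to sketch. This version interpolates classified ground points onto a regular grid using the k nearest points per cell; `power=2` and `k=8` are common but arbitrary defaults, and the brute-force distance matrix limits it to small examples. Note that the output rows follow increasing Y, whereas most raster formats store north-up rows (decreasing Y).

```python
import numpy as np

def idw_grid(ground_xyz, x_coords, y_coords, power=2.0, k=8):
    """Interpolate ground-point elevations onto a grid with inverse distance weighting."""
    gx, gy = np.meshgrid(x_coords, y_coords)  # rows follow y, columns follow x
    cells = np.column_stack([gx.ravel(), gy.ravel()])
    d = np.sqrt(((cells[:, None, :] - ground_xyz[None, :, :2]) ** 2).sum(axis=2))
    k = min(k, len(ground_xyz))
    nearest = np.argsort(d, axis=1)[:, :k]
    d_k = np.take_along_axis(d, nearest, axis=1)
    z_k = ground_xyz[nearest, 2]
    # A tiny distance floor avoids division by zero; a cell on top of a point
    # effectively takes that point's elevation.
    w = 1.0 / np.maximum(d_k, 1e-12) ** power
    z = (w * z_k).sum(axis=1) / w.sum(axis=1)
    return z.reshape(gy.shape)

# Four hypothetical ground points at the corners of a 10 m square.
ground = np.array([[0.0, 0.0, 1.0], [10.0, 0.0, 2.0], [0.0, 10.0, 3.0], [10.0, 10.0, 4.0]])
dem = idw_grid(ground, np.array([0.0, 5.0, 10.0]), np.array([0.0, 5.0, 10.0]))
```

Comparing this surface against TIN or kriging results at held-out checkpoints is one way to carry out the method comparison described above.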

Q 19. How do you use LiDAR data for vegetation analysis?

LiDAR data is invaluable for vegetation analysis. Because laser pulses can pass through gaps in the canopy and record multiple returns, LiDAR captures both the top of the canopy and the structure beneath it, down to the ground. Metrics such as canopy height, canopy cover, and leaf area index (LAI) can be derived from the LiDAR data. These metrics are essential for monitoring forest health, assessing carbon sequestration, and managing forest resources. Further analysis can also reveal information about tree species composition, tree density, and understory vegetation. Often, LiDAR data is combined with other data sources, such as multispectral imagery, to gain a more comprehensive understanding of the vegetation.

I used LiDAR data to assess the impact of a wildfire on a forest ecosystem. By comparing pre- and post-fire LiDAR data, I was able to quantify the changes in canopy height, density, and volume, providing valuable insights into the extent of the damage and the forest’s recovery potential. This information assisted in planning effective reforestation efforts.
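Two of the basic metrics above can be sketched with simple raster and point arithmetic: a canopy height model (CHM) as the difference between a surface model (DSM) and a terrain model (DTM), and canopy cover as the fraction of first returns above a height threshold. The 2 m threshold is a common but arbitrary assumption, and the height-change function simply differences two co-registered CHMs, as in a pre/post-fire comparison.

```python
import numpy as np

def canopy_height_model(dsm, dtm):
    """CHM = DSM - DTM, with small negative values (noise) clipped to zero."""
    return np.clip(np.asarray(dsm, float) - np.asarray(dtm, float), 0.0, None)

def canopy_cover(first_return_heights, height_threshold=2.0):
    """Fraction of first returns whose height above ground exceeds the threshold."""
    h = np.asarray(first_return_heights, dtype=float)
    return float((h > height_threshold).mean())

def height_change(chm_before, chm_after):
    """Per-cell canopy height change between two co-registered CHMs."""
    return np.asarray(chm_after, float) - np.asarray(chm_before, float)

chm = canopy_height_model([[10.0, 12.0], [5.0, 4.9]], [[5.0, 5.0], [5.0, 5.0]])
cover = canopy_cover([0.5, 3.0, 10.0, 1.0])
loss = height_change(chm, [[1.0, 7.0], [0.0, 0.0]])
```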

Q 20. How do you use hyperspectral imagery for mineral identification?

Hyperspectral imagery excels in mineral identification due to its ability to capture subtle variations in spectral reflectance. Minerals possess characteristic spectral signatures, with absorption features at specific wavelengths determined by their chemical composition and physical properties. By analyzing these spectral signatures, we can identify and map various minerals. Techniques like spectral angle mapper (SAM) and matched filtering are used to compare the measured spectra with known mineral spectral libraries. The results can be used to create thematic maps showcasing mineral distribution, which is crucial for geological exploration, environmental monitoring, and resource management.

I used hyperspectral imagery to identify and map areas with high concentrations of iron oxide minerals in a mining region. This information helped in guiding exploration efforts, optimizing mining operations, and minimizing environmental impacts.
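The spectral angle mapper treats each spectrum as a vector and measures the angle between a pixel and each library spectrum; because only the direction matters, overall brightness differences (for example, from illumination) have little effect. In this sketch the 0.1 radian rejection threshold and the toy library are assumptions, and pixels with all-zero spectra would need to be masked beforehand.

```python
import numpy as np

def spectral_angle(pixel, reference):
    """Angle in radians between two spectra; insensitive to brightness scaling."""
    pixel, reference = np.asarray(pixel, float), np.asarray(reference, float)
    cos = np.dot(pixel, reference) / (np.linalg.norm(pixel) * np.linalg.norm(reference))
    return float(np.arccos(np.clip(cos, -1.0, 1.0)))

def sam_classify(cube, library, max_angle=0.1):
    """Assign each pixel of a (rows, cols, bands) cube to the closest library
    spectrum by angle, or -1 if no spectrum is within max_angle radians."""
    pixels = cube.reshape(-1, cube.shape[-1])
    p_norm = pixels / np.linalg.norm(pixels, axis=1, keepdims=True)
    l_norm = library / np.linalg.norm(library, axis=1, keepdims=True)
    angles = np.arccos(np.clip(p_norm @ l_norm.T, -1.0, 1.0))
    best = angles.argmin(axis=1)
    best[angles.min(axis=1) > max_angle] = -1
    return best.reshape(cube.shape[:-1])

library = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])  # toy "mineral" spectra
cube = np.array([[[2.0, 0.01, 0.0], [0.0, 5.0, 0.0], [1.0, 1.0, 0.0]]])
class_map = sam_classify(cube, library)
```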

Q 21. Explain your experience with creating orthomosaics from aerial imagery.

Creating orthomosaics from aerial imagery involves geometrically correcting and mosaicking overlapping aerial images to produce a seamless, map-like image with minimal distortion. The process typically involves several key steps: image georeferencing (aligning images to a known coordinate system), image registration (precisely aligning overlapping images), geometric correction (removing distortions caused by camera tilt, lens effects, and terrain), and mosaicking (combining corrected images into a single seamless image). Software like Pix4D or Agisoft Metashape is commonly used for these tasks. High-quality orthomosaics are crucial for various applications, including land-use mapping, urban planning, and precision agriculture.

In one project, I produced a high-resolution orthomosaic of an urban area to assist in the development of a new transportation network. The orthomosaic, with its accurate geometric representation, allowed for precise measurements and planning of road alignments and infrastructure placement.

Q 22. Describe your experience with different data formats used in LiDAR and hyperspectral imaging.

LiDAR and hyperspectral imaging generate data in various formats, each with its strengths and weaknesses. For LiDAR, the most common format is the ASPRS LAS format, which stores 3D point cloud data including X, Y, Z coordinates, intensity, and classification information. Other formats include ASCII XYZ files for simpler point cloud representations and LAZ, a losslessly compressed version of LAS, for efficient storage. Processing often involves converting between these formats depending on the software used.

Hyperspectral imagery, on the other hand, often comes in raster formats like GeoTIFF, which stores the image data along with geospatial metadata. The spectral data itself can be organized in various ways. Common formats include the ENVI format, a flat binary file paired with a plain-text header (.hdr) that records the image dimensions, data type, band interleave, and wavelengths, and HDF5 (Hierarchical Data Format version 5), a flexible format capable of storing large, complex datasets. The choice of format depends largely on the sensor used and the intended analysis workflow.

My experience includes working extensively with LAS, LAZ, GeoTIFF, and ENVI formats. I’m proficient in using software tools to convert between these formats, ensuring compatibility throughout the data processing pipeline.
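A practical detail when handling raw ENVI-style binaries is the band interleave recorded in the header: BSQ (band sequential), BIL (band interleaved by line), or BIP (band interleaved by pixel). This sketch reshapes a flat buffer into a (rows, cols, bands) array for each layout. It assumes the buffer has already been read with the correct data type and byte order from the header (e.g., via `np.fromfile`); libraries such as GDAL can also read the ENVI format directly.

```python
import numpy as np

def to_rows_cols_bands(raw, rows, cols, bands, interleave):
    """Reshape a flat image buffer into a (rows, cols, bands) array.

    BSQ: all of band 1, then band 2, ...
    BIL: for each image row, one line of samples per band.
    BIP: all bands for pixel 1, then pixel 2, ...
    """
    interleave = interleave.lower()
    if interleave == "bsq":
        return raw.reshape(bands, rows, cols).transpose(1, 2, 0)
    if interleave == "bil":
        return raw.reshape(rows, bands, cols).transpose(0, 2, 1)
    if interleave == "bip":
        return raw.reshape(rows, cols, bands)
    raise ValueError(f"unknown interleave: {interleave}")

# Build one small cube and serialize it in all three layouts.
cube = np.arange(24).reshape(2, 3, 4)  # 2 rows, 3 columns, 4 bands
bsq_buffer = cube.transpose(2, 0, 1).ravel()
bil_buffer = cube.transpose(0, 2, 1).ravel()
bip_buffer = cube.ravel()
```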

Q 23. How do you address the challenges of data fusion between LiDAR and hyperspectral data?

Fusing LiDAR and hyperspectral data presents significant challenges, primarily due to the differences in their data structures and resolutions. LiDAR provides high-accuracy 3D geometry, while hyperspectral data offers detailed spectral information. The key is to find common spatial references and then align the datasets accurately. This often requires rigorous georeferencing and co-registration techniques.

One approach is to use common geospatial coordinates. We first ensure both datasets are projected into the same coordinate system (e.g., UTM). Then we employ techniques such as image registration and point cloud alignment. For instance, we might use common control points identified in both datasets and apply transformations (e.g., affine transformations or more complex models) to align the datasets. Once aligned, various fusion strategies can be employed – for example, pixel-wise fusion, where spectral information is added as attributes to LiDAR points, or feature-based fusion where we extract features from both datasets and integrate them for further analysis (e.g., object-based image analysis).
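The control-point step can be sketched as a least-squares fit of a 2D affine transform (x' = a0 + a1·x + a2·y, y' = b0 + b1·x + b2·y) to points matched in both datasets, with the RMS residual as a quick check of registration quality. This is a minimal, dependency-free illustration; with full UTM coordinates it would be safer to subtract a local origin first, because the normal equations become poorly conditioned for large coordinate values.

```python
"""Fit a 2D affine transform to matched control points (normal equations,
solved with Cramer's rule) and report the RMS residual."""
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def _det3(m: Sequence[Sequence[float]]) -> float:
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def _solve3(m: Sequence[Sequence[float]], v: Sequence[float]) -> List[float]:
    """Solve the 3x3 system m @ p = v."""
    d = _det3(m)
    if abs(d) < 1e-12:
        raise ValueError("control points are collinear")
    sol = []
    for k in range(3):
        mk = [list(row) for row in m]
        for i in range(3):
            mk[i][k] = v[i]  # replace column k with the right-hand side
        sol.append(_det3(mk) / d)
    return sol


def fit_affine(src: Sequence[Point], dst: Sequence[Point]):
    """Return coefficient lists (a, b) mapping src points onto dst points."""
    if len(src) != len(dst) or len(src) < 3:
        raise ValueError("need at least three matched control points")
    rows = [(1.0, x, y) for x, y in src]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    atx = [sum(r[i] * p[0] for r, p in zip(rows, dst)) for i in range(3)]
    aty = [sum(r[i] * p[1] for r, p in zip(rows, dst)) for i in range(3)]
    return _solve3(ata, atx), _solve3(ata, aty)


def apply_affine(a: Sequence[float], b: Sequence[float], pt: Point) -> Point:
    x, y = pt
    return a[0] + a[1] * x + a[2] * y, b[0] + b[1] * x + b[2] * y


def rms_residual(a, b, src: Sequence[Point], dst: Sequence[Point]) -> float:
    """Root-mean-square distance between transformed src and dst points."""
    total = 0.0
    for s, d in zip(src, dst):
        ux, uy = apply_affine(a, b, s)
        total += (ux - d[0]) ** 2 + (uy - d[1]) ** 2
    return math.sqrt(total / len(src))
```

Using more than the minimum three control points lets the least-squares fit average out picking errors, and the residual flags points that were mismatched.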

The choice of fusion method depends on the specific application. I’ve used both pixel-wise and feature-based fusion approaches in projects, selecting the most suitable method based on the project’s goals and the characteristics of the data.
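Pixel-wise fusion, where spectral values become extra attributes of LiDAR points, can be sketched as a lookup of the hyperspectral pixel containing each point. The sketch assumes both datasets already share one projected coordinate system, that the raster is north-up with square pixels, and that the cube is held as `cube[row][col]` giving a list of band values; these are simplifications for illustration.

```python
"""Attach the spectrum of the containing hyperspectral pixel to each LiDAR point."""
from typing import Dict, List, Optional, Sequence, Tuple

Point3 = Tuple[float, float, float]


def fuse_points(points: Sequence[Point3],
                cube: Sequence[Sequence[Sequence[float]]],
                x_origin: float, y_origin: float,
                pixel_size: float) -> List[Dict[str, object]]:
    """Return one record per point; spectrum is None outside the raster.

    x_origin/y_origin are the map coordinates of the raster's top-left corner.
    """
    rows, cols = len(cube), len(cube[0])
    fused = []
    for x, y, z in points:
        col = int((x - x_origin) // pixel_size)
        row = int((y_origin - y) // pixel_size)  # rows increase southwards
        spec: Optional[List[float]] = None
        if 0 <= row < rows and 0 <= col < cols:
            spec = list(cube[row][col])
        fused.append({"x": x, "y": y, "z": z, "spectrum": spec})
    return fused
```

Because LiDAR point spacing is often finer than hyperspectral pixel size, several points typically share one spectrum; feature-based fusion avoids this by aggregating both datasets to common objects instead.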

Q 24. Explain your experience with cloud computing platforms for processing LiDAR and hyperspectral data.

Cloud computing platforms like AWS (Amazon Web Services) and Google Cloud Platform (GCP) are well suited to processing the massive datasets generated by LiDAR and hyperspectral sensors. Their on-demand scalability and computational power help with tasks like point cloud processing, orthorectification, and spectral analysis, which can be computationally intensive.

My experience involves using cloud-based services such as Amazon S3 for data storage, EC2 for virtual machine instances, and various cloud-based GIS software packages. This allows me to perform complex analyses on large datasets without investing in expensive, high-performance computing infrastructure. Cloud storage also centralizes data management, which simplifies the workflow and makes team collaboration more efficient.

For example, in one project, we leveraged AWS to process a large-scale LiDAR point cloud covering a city using parallel processing techniques across multiple EC2 instances. This reduced processing time significantly compared to using a single, high-end desktop computer.
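The parallel pattern behind such a job can be sketched locally: partition the point cloud into square tiles, then map a per-tile function over the tiles with an executor. The tile size and per-tile statistics here are illustrative. The default thread pool keeps the sketch portable, but on CPython threads do not speed up CPU-bound pure-Python work, so a `ProcessPoolExecutor` or one worker per cloud instance would be used for real processing.

```python
"""Split a point cloud into square tiles and process the tiles concurrently."""
from collections import defaultdict
from concurrent.futures import Executor, ThreadPoolExecutor
from typing import Dict, List, Optional, Sequence, Tuple

Point3 = Tuple[float, float, float]
TileKey = Tuple[int, int]


def tile_points(points: Sequence[Point3], tile_size: float) -> Dict[TileKey, List[Point3]]:
    """Group points by the (i, j) index of the tile that contains them."""
    tiles: Dict[TileKey, List[Point3]] = defaultdict(list)
    for p in points:
        tiles[(int(p[0] // tile_size), int(p[1] // tile_size))].append(p)
    return dict(tiles)


def tile_stats(item: Tuple[TileKey, List[Point3]]) -> Tuple[TileKey, dict]:
    """Per-tile work; here just the point count and height range."""
    key, pts = item
    zs = [p[2] for p in pts]
    return key, {"count": len(pts), "z_min": min(zs), "z_max": max(zs)}


def process_tiles(points: Sequence[Point3], tile_size: float,
                  executor: Optional[Executor] = None) -> Dict[TileKey, dict]:
    """Run tile_stats on every tile and collect results by tile key."""
    tiles = tile_points(points, tile_size)
    owns_executor = executor is None
    executor = executor or ThreadPoolExecutor(max_workers=4)
    try:
        return dict(executor.map(tile_stats, tiles.items()))
    finally:
        if owns_executor:
            executor.shutdown()
```

Operations that need neighbouring points, such as ground filtering, usually require tiles with a small overlap buffer so results match at tile edges.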

Q 25. What are some of the ethical considerations when working with geospatial data?

Ethical considerations in geospatial data handling are paramount. Privacy is a major concern; geospatial data can often be linked to sensitive personal information. For example, high-resolution imagery can reveal individual homes and activities, raising privacy concerns. Anonymization and data aggregation techniques are crucial to mitigate this risk. Data bias is another significant concern, since datasets can reflect existing societal biases.
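One simple aggregation rule can be sketched as follows: snap individual locations to a coarse grid, count them per cell, and suppress cells with fewer than `k` records before release. The cell size and `k` are illustrative parameters, and this rule alone does not guarantee anonymity; it is one building block of disclosure control.

```python
"""Aggregate point locations to a coarse grid with small-cell suppression."""
from collections import Counter
from typing import Dict, Iterable, Tuple


def aggregate_with_suppression(locations: Iterable[Tuple[float, float]],
                               cell_size: float, k: int) -> Dict[Tuple[int, int], int]:
    """Return per-cell counts, omitting cells with fewer than k records."""
    counts = Counter((int(x // cell_size), int(y // cell_size)) for x, y in locations)
    return {cell: n for cell, n in counts.items() if n >= k}
```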

Another important ethical consideration is data ownership and access. Who owns the data? Who has permission to access and use it? Clear guidelines and legal frameworks must be followed. Finally, the responsible use of geospatial data for decision-making is critical. It’s essential to ensure that analyses are transparent, unbiased, and used for the benefit of society, avoiding potential for discrimination or misuse.

My approach emphasizes adhering to ethical guidelines and best practices throughout the entire data lifecycle, from data acquisition to analysis and dissemination.

Q 26. How do you ensure the quality and accuracy of your geospatial analysis?

Ensuring quality and accuracy in geospatial analysis requires a multi-faceted approach. Firstly, data quality control is crucial. This involves assessing the accuracy of the source data – checking for errors in LiDAR point clouds (e.g., noise, outliers) and validating the georeferencing and radiometric calibration of hyperspectral imagery. Appropriate pre-processing techniques are then implemented to correct these errors.
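A common way to flag noise and outliers in a LiDAR point cloud is statistical outlier removal: compute each point's mean distance to its `k` nearest neighbours and flag points whose mean distance exceeds the global mean by more than `std_ratio` standard deviations. The sketch uses brute-force neighbour search to stay dependency-free; real point clouds need a spatial index, and the default parameters are illustrative.

```python
"""Statistical outlier removal for a small 3D point cloud."""
import math
import statistics
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]


def remove_statistical_outliers(points: Sequence[Point3], k: int = 8,
                                std_ratio: float = 2.0
                                ) -> Tuple[List[Point3], List[Point3]]:
    """Split points into (inliers, outliers)."""
    if len(points) <= k:
        raise ValueError("need more points than neighbours")
    mean_dists = []
    for i, p in enumerate(points):
        # O(n^2) brute force: distances to every other point, nearest k kept
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_dists.append(sum(dists[:k]) / k)
    threshold = statistics.fmean(mean_dists) + std_ratio * statistics.pstdev(mean_dists)
    inliers = [p for p, d in zip(points, mean_dists) if d <= threshold]
    outliers = [p for p, d in zip(points, mean_dists) if d > threshold]
    return inliers, outliers
```

Isolated returns from birds, dust, or multipath reflections stand out because their nearest neighbours are far away, whereas points on surfaces sit in dense neighbourhoods.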

Secondly, rigorous validation procedures are essential. This often involves comparing our results with ground truth data, for instance comparing classification results with field measurements or with independent validation datasets. Overall accuracy and the kappa coefficient are then used to assess classification results, and root mean square error (RMSE) to assess continuous outputs such as elevations.
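The accuracy metrics can be computed directly. Overall accuracy is the share of correctly classified samples (the confusion-matrix diagonal over the total); Cohen's kappa rescales it by the agreement expected by chance, p_e, computed from the row and column totals; RMSE measures the typical error of continuous predictions. The sketch below is a minimal stdlib version; the confusion matrix in the usage note is made-up data.

```python
"""Overall accuracy, Cohen's kappa, and RMSE for validating geospatial results."""
import math
from typing import Sequence


def overall_accuracy(cm: Sequence[Sequence[int]]) -> float:
    """Correct samples (diagonal) divided by all samples."""
    total = sum(sum(row) for row in cm)
    return sum(cm[i][i] for i in range(len(cm))) / total


def cohen_kappa(cm: Sequence[Sequence[int]]) -> float:
    """Agreement beyond chance: (p_o - p_e) / (1 - p_e)."""
    n = len(cm)
    total = sum(sum(row) for row in cm)
    p_o = overall_accuracy(cm)
    row_totals = [sum(cm[i]) for i in range(n)]
    col_totals = [sum(cm[i][j] for i in range(n)) for j in range(n)]
    p_e = sum(r * c for r, c in zip(row_totals, col_totals)) / total ** 2
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1 - p_e)


def rmse(predicted: Sequence[float], observed: Sequence[float]) -> float:
    """Square root of the mean squared difference."""
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(predicted))
```

For the two-class matrix `[[50, 10], [5, 35]]`, overall accuracy is 0.85 but chance agreement is 0.51, so kappa drops to about 0.69. That gap is why kappa is reported alongside overall accuracy.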

Finally, good documentation and transparency are key. We maintain detailed records of our data processing steps, analysis methods, and validation results. This ensures the reproducibility and traceability of our work.

Q 27. Describe a project where you successfully used LiDAR or hyperspectral imagery to solve a problem.

In a recent project, we used hyperspectral imagery to monitor the health of a large agricultural field. The goal was to identify areas experiencing nutrient deficiency or disease early on, enabling timely intervention and optimized resource allocation. We processed the hyperspectral data using vegetation indices (e.g., NDVI, NDRE) to identify areas exhibiting stress, and then used machine learning techniques to classify the field into different health categories (healthy, stressed, diseased).
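With hyperspectral data, NDVI = (NIR − Red) / (NIR + Red) and NDRE = (NIR − RedEdge) / (NIR + RedEdge) are computed from the bands closest to the chosen wavelengths. The targets below (670 nm red, 720 nm red edge, 800 nm NIR) are common choices assumed for illustration, not the values used in the project described above; sensors and studies differ.

```python
"""NDVI and NDRE for one pixel spectrum using nearest-wavelength bands."""
from typing import Dict, Sequence


def nearest_band(wavelengths_nm: Sequence[float], target_nm: float) -> int:
    """Index of the band whose centre wavelength is closest to target_nm."""
    return min(range(len(wavelengths_nm)), key=lambda i: abs(wavelengths_nm[i] - target_nm))


def normalized_difference(a: float, b: float) -> float:
    """(a - b) / (a + b), returning 0.0 when the denominator is zero."""
    total = a + b
    return 0.0 if total == 0 else (a - b) / total


def vegetation_indices(spectrum: Sequence[float], wavelengths_nm: Sequence[float],
                       red_nm: float = 670.0, red_edge_nm: float = 720.0,
                       nir_nm: float = 800.0) -> Dict[str, float]:
    red = spectrum[nearest_band(wavelengths_nm, red_nm)]
    red_edge = spectrum[nearest_band(wavelengths_nm, red_edge_nm)]
    nir = spectrum[nearest_band(wavelengths_nm, nir_nm)]
    return {
        "ndvi": normalized_difference(nir, red),       # (NIR - Red) / (NIR + Red)
        "ndre": normalized_difference(nir, red_edge),  # (NIR - RE) / (NIR + RE)
    }
```

NDRE uses the red-edge band, where reflectance changes with chlorophyll content, so it often stays sensitive in dense canopies where NDVI saturates.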

This analysis revealed previously unnoticed areas of nutrient deficiency and disease, allowing the farmers to target their interventions effectively, saving them time and resources. The use of hyperspectral imagery provided a far more detailed and accurate assessment of crop health than traditional methods, demonstrating the power of this technology for precision agriculture.

Q 28. What are your future goals in the field of remote sensing?

My future goals in remote sensing involve exploring the potential of integrating LiDAR, hyperspectral, and other sensor modalities (e.g., multispectral imagery, thermal imagery) to create comprehensive, 3D representations of the environment. This will allow for more accurate and detailed monitoring of various phenomena, from urban growth and infrastructure assessment to environmental changes and natural disaster response. I also aim to advance the application of machine learning and deep learning techniques for automated feature extraction and classification within these complex datasets, increasing efficiency and improving the precision of our analyses.

Specifically, I’m interested in exploring the use of AI for automated detection of anomalies in large-scale geospatial data, potentially aiding in early warning systems for various environmental and societal challenges.

Key Topics to Learn for LiDAR and Hyperspectral Imagery Interviews

- LiDAR Data Acquisition and Processing: Understand different LiDAR systems (e.g., airborne, terrestrial), data formats (e.g., LAS, LAZ), point cloud processing techniques (e.g., filtering, classification, registration), and common software packages (e.g., ArcGIS, QGIS, CloudCompare).

- Hyperspectral Image Acquisition and Preprocessing: Familiarize yourself with hyperspectral sensor principles, data formats (e.g., ENVI, GeoTIFF), atmospheric correction methods, and preprocessing steps like geometric correction and radiometric calibration.

- Data Fusion Techniques: Explore methods for integrating LiDAR and hyperspectral data to leverage the strengths of both modalities. Understand concepts like co-registration, feature extraction, and the creation of 3D hyperspectral models.

- Applications in Remote Sensing: Be prepared to discuss practical applications in areas such as precision agriculture, urban planning, environmental monitoring, forestry, and geological mapping. Think about specific examples and quantify your contributions where possible.

- Classification and Object Detection: Understand various classification techniques (e.g., supervised, unsupervised) applied to LiDAR and hyperspectral data. Be familiar with object detection methods and their use in identifying specific features of interest.

- Challenges and Limitations: Discuss potential challenges in working with LiDAR and hyperspectral data, such as data noise, data volume, computational requirements, and limitations of specific techniques. Demonstrate your problem-solving skills by highlighting how you’ve overcome such obstacles.

- Software Proficiency: Highlight your expertise in relevant software packages used for data processing, analysis, and visualization. Be prepared to discuss your experience with specific functionalities and workflows.

Next Steps

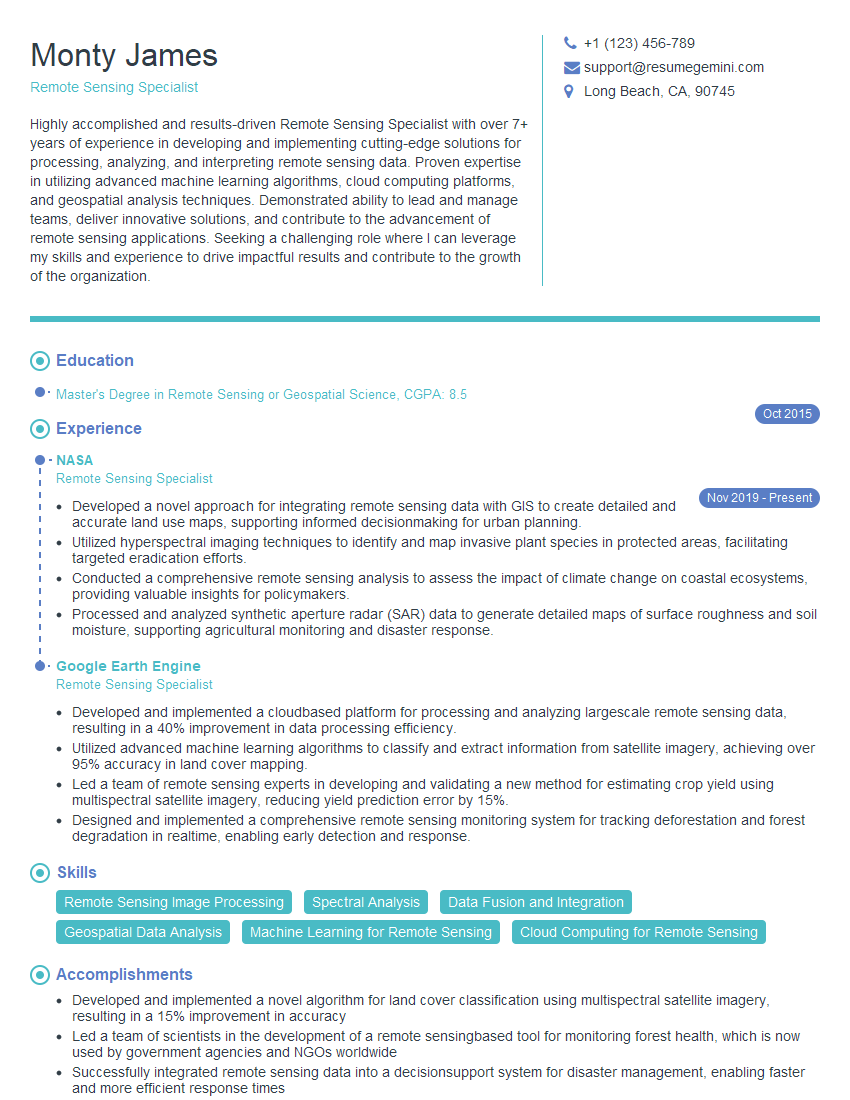

Mastering LiDAR and hyperspectral imagery processing and analysis opens doors to exciting careers in various high-growth sectors. A strong understanding of these technologies significantly enhances your job prospects. To maximize your chances, create an ATS-friendly resume that effectively showcases your skills and experience. ResumeGemini is a trusted resource to help you build a professional and impactful resume tailored to your specific experience. Examples of resumes optimized for LiDAR and hyperspectral imagery expertise are available to guide you.
