Interviews are more than just a Q&A session—they’re a chance to prove your worth. This blog dives into essential Software Engineer interview questions and expert tips to help you align your answers with what hiring managers are looking for. Start preparing to shine!

Questions Asked in Software Engineer Interview

Q 1. Explain the difference between == and === in JavaScript.

In JavaScript, both == and === are used for comparison, but they differ significantly in how they perform the check. == is the loose equality operator, while === is the strict equality operator. The key difference lies in type coercion.

Loose Equality (==): This operator performs type coercion before comparison. It attempts to convert the operands to the same type before checking for equality. This can lead to unexpected results.

Example:

console.log(1 == '1'); // true (string '1' is coerced to number 1)

Strict Equality (===): This operator does not perform type coercion. It checks for both value and type equality. If the types are different, it immediately returns false, regardless of the values.

Example:

console.log(1 === '1'); // false (different types)

Practical Application: In most scenarios, === is preferred due to its predictability and avoidance of potential type-related bugs. Loose equality can introduce unexpected behavior and make debugging more challenging. Use == only when you explicitly need type coercion, but be mindful of its implications.

Q 2. What is the difference between an array and a linked list?

Arrays and linked lists are both data structures used to store collections of elements, but they differ fundamentally in how they store and access those elements.

Arrays: Arrays store elements in contiguous memory locations. This provides efficient random access – accessing any element is equally fast (O(1) time complexity). However, inserting or deleting elements in the middle of an array can be slow (O(n) time complexity) because it requires shifting other elements.

Linked Lists: Linked lists store elements as nodes, each containing the element’s value and a pointer to the next node. Elements are not stored contiguously in memory. Accessing an element requires traversing the list from the head (O(n) time complexity). However, inserting or deleting elements is faster (O(1) time complexity) once the location is found, as it only involves updating pointers.

Example (Conceptual): Imagine a train (array) where each car is next to the other. Accessing a car is fast because you know its position. Adding or removing a car requires rearranging many cars. Now imagine a linked train (linked list) where cars are connected by chains. Finding a car may be slow, but adding or removing one only requires changing the chain links.

Practical Application: Arrays are ideal for scenarios where random access is crucial and insertions/deletions are infrequent. Linked lists are preferred when frequent insertions/deletions are needed and random access is less important, such as implementing stacks or queues.
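The trade-off described above can be sketched in a few lines of Python. This is a minimal illustration, not a production structure: `Node`, `insert_after`, and `to_list` are hypothetical names chosen for this example, and Python's built-in `list` stands in for an array.

```python
class Node:
    """A singly linked list node (hypothetical helper for this sketch)."""
    def __init__(self, value, next=None):
        self.value = value
        self.next = next


def insert_after(node, value):
    """Insert a new node after `node` in O(1): only pointers change."""
    node.next = Node(value, node.next)


def to_list(head):
    """Walk the list from the head; reaching the k-th element is O(k)."""
    out = []
    while head is not None:
        out.append(head.value)
        head = head.next
    return out


# Linked list: 1 -> 3, then insert 2 after the head without shifting anything.
head = Node(1, Node(3))
insert_after(head, 2)
linked_values = to_list(head)

# Array-like list: inserting in the middle shifts later elements (O(n)).
array = [1, 3]
array.insert(1, 2)
```

Both containers end up holding the same values; the difference is in how much work the insertion and the lookup each require.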

Q 3. Describe the SOLID principles of object-oriented programming.

SOLID principles are five design principles intended to make software designs more understandable, flexible, and maintainable. They guide the creation of robust and scalable object-oriented systems.

- Single Responsibility Principle (SRP): A class should have only one reason to change. Focus on one specific task or responsibility.

- Open/Closed Principle (OCP): Software entities (classes, modules, functions, etc.) should be open for extension but closed for modification. New functionality should be added without altering existing code.

- Liskov Substitution Principle (LSP): Subtypes should be substitutable for their base types without altering the correctness of the program. Derived classes should behave as expected when used in place of their base classes.

- Interface Segregation Principle (ISP): Clients should not be forced to depend upon interfaces they don’t use. Large interfaces should be broken down into smaller, more specific ones.

- Dependency Inversion Principle (DIP): High-level modules should not depend on low-level modules. Both should depend on abstractions. Abstractions should not depend on details. Details should depend on abstractions.

Practical Application: Applying SOLID principles leads to more modular, reusable, and maintainable code. It reduces coupling between different parts of the system, making it easier to change or extend functionality without breaking other parts.
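As a rough sketch of how a few of these principles look in code, the Python example below shows a high-level class depending on an abstraction rather than a concrete sender (DIP), with a new sender added without touching existing classes (OCP). All class names here (`MessageSender`, `EmailSender`, `SmsSender`, `OrderNotifier`) are hypothetical and exist only for illustration.

```python
from typing import Protocol


class MessageSender(Protocol):
    """Abstraction that both high-level and low-level code depend on."""
    def send(self, recipient: str, body: str) -> str: ...


class EmailSender:
    """Low-level detail: one concrete way to deliver a message."""
    def send(self, recipient: str, body: str) -> str:
        return f"email to {recipient}: {body}"


class SmsSender:
    """Another detail, added without modifying existing classes."""
    def send(self, recipient: str, body: str) -> str:
        return f"sms to {recipient}: {body}"


class OrderNotifier:
    """High-level policy with a single responsibility: announce orders."""
    def __init__(self, sender: MessageSender) -> None:
        self._sender = sender

    def order_shipped(self, recipient: str, order_id: int) -> str:
        return self._sender.send(recipient, f"order {order_id} shipped")


email_result = OrderNotifier(EmailSender()).order_shipped("ann", 7)
sms_result = OrderNotifier(SmsSender()).order_shipped("ann", 7)
```

Because `OrderNotifier` only knows about the `send` method, either sender can be substituted without changing its code.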

Q 4. What are design patterns and give examples of common ones.

Design patterns are reusable solutions to common software design problems. They represent best practices and help improve code organization, readability, and maintainability. They are not finished code, but rather templates or blueprints.

Examples:

- Singleton: Ensures that only one instance of a class is created. Useful for managing resources like database connections.

- Factory: Provides an interface for creating objects without specifying their concrete classes. Useful for decoupling object creation from client code.

- Observer: Defines a one-to-many dependency between objects so that when one object changes state, all its dependents are notified and updated automatically. Useful for event handling and data synchronization.

- Decorator: Dynamically adds responsibilities to an object without altering its structure. Useful for adding features or functionality to existing objects.

- Strategy: Defines a family of algorithms, encapsulates each one, and makes them interchangeable. Useful for choosing algorithms at runtime.

Practical Application: Design patterns provide proven solutions to recurring problems, saving development time and effort. They improve code quality by promoting consistency and understandability. By using established patterns, developers can communicate more effectively and collaborate more efficiently.
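To make one of these patterns concrete, here is a minimal Observer sketch in Python. The `EventBus` class and the event name are assumptions made up for this example; real event systems usually add unsubscription, error handling, and typed events.

```python
class EventBus:
    """Subject: keeps a list of observers and notifies them on publish."""
    def __init__(self):
        self._observers = []

    def subscribe(self, callback):
        """Register a callable that will receive every published event."""
        self._observers.append(callback)

    def publish(self, event):
        """Notify all observers, in subscription order."""
        for callback in self._observers:
            callback(event)


received = []
bus = EventBus()
bus.subscribe(lambda e: received.append(("logger", e)))
bus.subscribe(lambda e: received.append(("mailer", e)))
bus.publish("user_registered")
```

The publisher never needs to know who is listening, which is the one-to-many decoupling the pattern describes.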

Q 5. Explain the concept of RESTful APIs.

REST (Representational State Transfer) is an architectural style defined by a set of constraints, and a RESTful API is a web API that follows them. Applied as a whole, these constraints help create systems that are scalable, reliable, and maintainable.

Key characteristics of RESTful APIs:

- Client-Server Architecture: Clear separation of concerns between client and server.

- Statelessness: Each request from client to server must contain all the information necessary to understand the request; the server doesn’t store client context between requests.

- Cacheable: Responses can be cached to improve performance.

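- Uniform Interface: Resources are identified by URIs and manipulated through a consistent set of operations (in HTTP, typically GET, POST, PUT, and DELETE).

- Layered System: A client cannot tell whether it is connected directly to the end server or to an intermediary such as a proxy or load balancer.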

- Code on Demand (Optional): The server can extend client functionality by transferring executable code.

Practical Application: RESTful APIs are widely used for building web services and microservices. Their stateless nature allows for scalability and ease of maintenance. The uniform interface simplifies development and makes it easier for different systems to interact.

Q 6. What are the different types of database systems and their uses?

Database systems are categorized in various ways, but here are some common types:

- Relational Databases (RDBMS): Data is organized into tables with rows and columns, linked through relationships. Examples: MySQL, PostgreSQL, Oracle, SQL Server. Used for structured data, transactions, and data integrity. Excellent for managing complex data relationships.

- NoSQL Databases: These databases don’t use the relational model. They offer flexibility and scalability. Examples: MongoDB (document database), Cassandra (wide-column store), Redis (key-value store), Neo4j (graph database). Used for large volumes of unstructured or semi-structured data, high availability, and scalability. Each type excels in different situations.

- Graph Databases: Data is represented as nodes and edges, allowing for efficient querying of relationships. Example: Neo4j. Useful for social networks, recommendation systems, and knowledge graphs where relationships are central.

- Object-Oriented Databases: Data is stored as objects, similar to object-oriented programming concepts. They are less common than relational or NoSQL databases.

Practical Application: The choice of database system depends heavily on the application’s requirements. Relational databases are suitable for applications needing strong data consistency and relationships. NoSQL databases are preferred for large-scale applications needing high availability and scalability, often handling unstructured data. Graph databases are excellent for applications focused on relationships.

Q 7. How do you handle exceptions in your code?

Exception handling is crucial for writing robust and reliable code. It involves anticipating potential errors and gracefully handling them to prevent program crashes or unexpected behavior.

Strategies:

- try...catch...finally blocks (JavaScript): The try block contains the code that might throw an exception. The catch block handles the exception, and the finally block (optional) executes regardless of whether an exception occurred.

- Error Logging: Logging exceptions provides valuable information for debugging and monitoring. A robust logging system should include timestamps, error messages, stack traces, and other relevant context.

- Custom Exceptions (if applicable): Creating custom exception classes allows for more specific error handling and improves code clarity.

- Defensive Programming: Implementing input validation and checks prevents many common errors.

Example (JavaScript):

try {
  // Code that might throw an error. Note that 10 / 0 does not throw in
  // JavaScript (it evaluates to Infinity), so invalid JSON is parsed instead.
  let result = JSON.parse('{ not valid JSON');
} catch (error) {
  console.error('An error occurred:', error.message); // Log the error
} finally {
  console.log('This always executes.'); // Cleanup code
}

Practical Application: Good exception handling improves the user experience by gracefully handling errors instead of abrupt crashes. It also simplifies debugging and maintenance by providing detailed error information.
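The other strategies listed above (custom exceptions, logging, and defensive input validation) can be combined in one short sketch. The example is in Python rather than JavaScript, and `InvalidQuantityError`, `parse_quantity`, and `safe_parse` are hypothetical names invented for this illustration.

```python
import logging

logger = logging.getLogger("orders")


class InvalidQuantityError(ValueError):
    """Custom exception (hypothetical) for a specific failure mode."""


def parse_quantity(raw):
    """Defensive parsing: validate input and raise a specific error."""
    try:
        quantity = int(raw)
    except ValueError as exc:
        raise InvalidQuantityError(f"not a number: {raw!r}") from exc
    if quantity <= 0:
        raise InvalidQuantityError(f"must be positive: {quantity}")
    return quantity


def safe_parse(raw, default=1):
    """Handle the error at the boundary, log it, and fall back."""
    try:
        return parse_quantity(raw)
    except InvalidQuantityError:
        logger.exception("Bad quantity, using default")  # logs the stack trace
        return default
    finally:
        logger.debug("Finished parsing %r", raw)  # runs on every path
```

Calling `safe_parse("3")` returns the parsed value, while `safe_parse("abc")` logs the failure and returns the default instead of crashing the caller.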

Q 8. What is version control and why is it important?

Version control is a system that records changes to a file or set of files over time so that you can recall specific versions later. Think of it like having a detailed history of every edit made to a document, allowing you to revert to previous versions or compare different iterations. It’s crucial for software development because it allows multiple developers to work on the same project simultaneously without overwriting each other’s work and provides a safety net for mistakes.

Importance:

- Collaboration: Multiple developers can work concurrently on the same codebase without conflicts.

- Version Tracking: Track every change made, enabling rollback to previous versions if needed.

- History: Provides a complete history of the project, invaluable for understanding the evolution of the code and for debugging.

- Branching & Merging: Allows developers to work on new features (branches) independently and merge them back into the main codebase when ready.

- Backup & Recovery: Acts as a robust backup system, protecting against data loss.

Without version control, software development would be chaotic, error-prone, and incredibly difficult to manage, especially in larger teams.

Q 9. Explain Agile methodologies and their benefits.

Agile methodologies are iterative approaches to software development that emphasize flexibility, collaboration, and customer feedback. Unlike traditional waterfall methods (where each phase is completed sequentially), Agile breaks down the project into smaller, manageable iterations (called sprints in Scrum, typically 1-4 weeks). Each iteration results in a working increment of the software.

Popular Agile Frameworks: Scrum, Kanban, Extreme Programming (XP)

Benefits:

- Adaptability: Easily adapt to changing requirements throughout the development lifecycle.

- Faster Time to Market: Deliver working software frequently.

- Improved Collaboration: Promotes teamwork and communication through daily stand-up meetings and regular feedback.

- Higher Quality: Continuous testing and integration lead to fewer bugs and improved quality.

- Increased Customer Satisfaction: Frequent feedback loops ensure the software meets customer expectations.

Imagine building a house. Waterfall is like having a rigid blueprint followed to the letter, leaving little room for adjustments. Agile is like building the house in stages, regularly checking with the homeowner for feedback and making adjustments along the way, leading to a house that better meets their needs.

Q 10. Describe your experience with testing frameworks (e.g., JUnit, pytest).

I have extensive experience with both JUnit (for Java) and pytest (for Python), two leading testing frameworks. JUnit is a mature framework widely used in Java projects for unit testing, focusing on testing individual components of the code. Pytest is a powerful and versatile framework for Python, supporting various testing styles, including unit, integration, and functional testing.

JUnit Example (Java):

@Test
public void testAddition() {
    assertEquals(5, Calculator.add(2, 3));
}

pytest Example (Python):

def test_addition():
    assert Calculator.add(2, 3) == 5

In my projects, I utilize these frameworks to write comprehensive test suites, ensuring code quality and catching bugs early in the development process. I’ve used them to implement both unit and integration tests, contributing to a robust and reliable software product. My experience includes writing tests for different layers of the application, from data access to user interfaces. I also have experience with test-driven development (TDD), where tests are written before the code they’re meant to test.

Q 11. What is the difference between Git merge and Git rebase?

Both git merge and git rebase are used to integrate changes from one branch into another, but they do so in different ways. Think of it like merging two rivers.

git merge creates a new commit that combines the changes from both branches. It preserves the complete history of both branches. This is like creating a new river channel where both rivers flow together.

git rebase rewrites the commit history by applying the commits from one branch onto the tip of the other branch. It creates a linear history, making it easier to read but potentially losing some historical context. This is like redirecting one river to flow into the other, so they appear as a single continuous flow.

When to use which:

- git merge: Generally preferred for public branches where preserving the complete history is important. It’s safer and avoids potential data loss.

- git rebase: Often used for private branches to create a cleaner, linear history. Useful for simplifying the commit history before merging into a main branch. However, rebase should be used cautiously, particularly when working on shared branches, to avoid overwriting others’ work.

Q 12. Explain the concept of polymorphism.

Polymorphism, meaning “many forms,” is a powerful object-oriented programming concept where objects of different classes can be treated as objects of a common type. This allows for flexibility and extensibility in code.

Example: Imagine having a base class called Animal with a method makeSound(). You can then create subclasses like Dog and Cat, each overriding the makeSound() method to produce different sounds (“Woof!” and “Meow!” respectively). You can then have an array of Animal objects that hold instances of both Dog and Cat. When you call makeSound() on each element, the correct method for the specific object (Dog or Cat) will be executed—demonstrating polymorphism.

// Java Example
class Animal {
    public void makeSound() { System.out.println("Generic animal sound"); }
}

class Dog extends Animal {
    @Override
    public void makeSound() { System.out.println("Woof!"); }
}

class Cat extends Animal {
    @Override
    public void makeSound() { System.out.println("Meow!"); }
}

This enables writing generic code that can work with a variety of objects without knowing their specific type at compile time, promoting code reusability and maintainability.

Q 13. How do you optimize database queries for performance?

Optimizing database queries for performance is crucial for application speed and scalability. Inefficient queries can significantly impact the user experience. Here’s a breakdown of common optimization strategies:

- Indexing: Create indexes on frequently queried columns. Indexes are like a book’s index; they allow the database to quickly locate specific data without scanning the entire table.

- Query Optimization Techniques: Use EXPLAIN (or similar tools) to analyze query execution plans and identify bottlenecks. This helps find areas needing improvement.

- Proper Use of WHERE Clauses: Use specific and targeted WHERE clauses to filter data efficiently. Avoid using wildcard characters (%) at the beginning of patterns whenever possible.

- Avoid SELECT *: Select only the columns needed, reducing data retrieval overhead.

- Use Joins Wisely: Choose appropriate join types (INNER, LEFT, RIGHT) based on your needs. Optimize joins by ensuring correct indexing and table structures.

- Database Tuning: Properly configure database parameters (buffer pools, cache sizes) to match the workload.

- Data Normalization: Normalize database tables to reduce data redundancy and improve data integrity.

- Caching: Cache frequently accessed data to reduce database load.

- Stored Procedures: Consider stored procedures for frequently executed queries; in many database engines their execution plans are cached, and they can reduce network round trips, so they can be faster than ad-hoc queries.

Consider using tools like database profiling and query analyzers for more detailed analysis. Regular monitoring and performance testing can also highlight opportunities for optimization. A slow query can sometimes be a symptom of a poorly designed database schema, which should be addressed separately.
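The effect of indexing and plan analysis can be seen with a small experiment. The sketch below uses Python's standard `sqlite3` module and SQLite's `EXPLAIN QUERY PLAN`; the `users` table, the `idx_users_email` index, and the `query_plan` helper are assumptions made up for this example, and other databases expose plans through their own `EXPLAIN` variants with different output.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, name TEXT)")
conn.executemany(
    "INSERT INTO users (email, name) VALUES (?, ?)",
    [(f"user{i}@example.com", f"User {i}") for i in range(1000)],
)

query = "SELECT name FROM users WHERE email = ?"  # only the needed column


def query_plan(sql, params):
    """Return the plan detail text that SQLite reports for a query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)


# Without an index, SQLite has to scan the whole table.
plan_before = query_plan(query, ("user42@example.com",))

# With an index on the filtered column, the plan switches to an index search.
conn.execute("CREATE INDEX idx_users_email ON users (email)")
plan_after = query_plan(query, ("user42@example.com",))

print(plan_before)
print(plan_after)
```

The exact wording of the plan text varies between SQLite versions, but the first plan reports a scan and the second names the index.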

Q 14. What are some common security vulnerabilities and how do you mitigate them?

Common security vulnerabilities are a constant concern for software developers. Addressing them effectively is essential to protecting user data and application integrity. Here are a few common vulnerabilities and mitigation strategies:

- SQL Injection: This occurs when malicious code is injected into database queries, allowing unauthorized data access or modification. Mitigation: Use parameterized queries or prepared statements, input validation, and least privilege principles.

- Cross-Site Scripting (XSS): This allows attackers to inject client-side scripts into web pages, potentially stealing user data or performing other malicious actions. Mitigation: Escape untrusted data for the context in which it is output (HTML, attribute, JavaScript, URL), validate and sanitize input, apply a Content Security Policy (CSP), and consider a web application firewall (WAF) as an additional layer.

- Cross-Site Request Forgery (CSRF): This tricks users into performing unwanted actions on a website where they’re already authenticated. Mitigation: Use CSRF tokens, which are unique values embedded in forms that servers can verify.

- Authentication & Authorization Vulnerabilities: Weak passwords, lack of multi-factor authentication (MFA), and inadequate access control mechanisms make applications vulnerable to unauthorized access. Mitigation: Implement strong password policies, enforce MFA, use role-based access control (RBAC), and regularly audit user permissions.

- Denial-of-Service (DoS) Attacks: These aim to make a system unavailable to legitimate users. Mitigation: Implement rate limiting, distributed denial-of-service (DDoS) mitigation techniques, and robust infrastructure.

Regular security audits, penetration testing, and keeping software up-to-date with the latest security patches are crucial elements of a comprehensive security strategy. Following secure coding practices and adopting a security-first mindset throughout the software development lifecycle is paramount.
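The SQL injection mitigation mentioned above is easy to demonstrate. The following sketch uses Python's standard `sqlite3` module with a made-up `accounts` table; it contrasts string concatenation with a parameterized query. The table, data, and malicious input are all invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (username TEXT, secret TEXT)")
conn.executemany(
    "INSERT INTO accounts VALUES (?, ?)",
    [("alice", "a-secret"), ("bob", "b-secret")],
)

malicious = "nobody' OR '1'='1"

# Vulnerable: user input is concatenated into the SQL text, so the
# injected OR clause becomes part of the query and matches every row.
unsafe_sql = "SELECT secret FROM accounts WHERE username = '" + malicious + "'"
leaked = conn.execute(unsafe_sql).fetchall()

# Safer: the value is passed separately from the SQL text, so it is
# treated as a literal username and matches nothing.
safe = conn.execute(
    "SELECT secret FROM accounts WHERE username = ?", (malicious,)
).fetchall()
```

The concatenated query returns every secret in the table, while the parameterized query returns no rows.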

Q 15. Explain the difference between synchronous and asynchronous programming.

Synchronous and asynchronous programming represent fundamentally different approaches to how code executes. In synchronous programming, tasks are executed sequentially, one after another. Imagine a recipe: you must follow each step in order – you can’t add the sugar before you’ve mixed the dry ingredients. The program waits for each task to complete before moving on to the next. This is simple to understand but can be inefficient if one task takes a long time (like waiting for a network request).

Asynchronous programming, on the other hand, allows multiple tasks to run concurrently without blocking each other. Think of it as a chef delegating tasks to sous chefs. They can start preparing multiple parts of the meal simultaneously. The main program doesn’t wait for each task to finish completely; it continues executing other parts of the code. When an asynchronous task is complete, the program is notified and can process the result. This improves efficiency, especially for I/O-bound operations (like network requests or file reads) where a lot of time is spent waiting.

Example: Imagine fetching data from two different servers. In synchronous programming, the program would first fetch data from server A, wait for it to complete, then fetch data from server B. In asynchronous programming, it would send requests to both servers simultaneously, and process the data from each server as soon as it arrives. The asynchronous approach is faster because it doesn’t have to wait for each server to finish responding before moving onto the next.
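The two-server scenario can be sketched with Python's `asyncio`. This is a simulation under stated assumptions: `fetch` is a hypothetical function, and `asyncio.sleep` stands in for real network I/O.

```python
import asyncio

events = []


async def fetch(server, delay):
    """Simulated network call; asyncio.sleep stands in for real I/O."""
    events.append(f"start {server}")
    await asyncio.sleep(delay)
    events.append(f"done {server}")
    return f"data from {server}"


async def main():
    # Both requests are in flight at the same time.
    return await asyncio.gather(fetch("A", 0.2), fetch("B", 0.05))


results = asyncio.run(main())
```

Both requests start before either finishes, and the faster server B completes first, yet `gather` still returns the results in the order the calls were listed.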

Q 16. What is your experience with cloud platforms (e.g., AWS, Azure, GCP)?

I have extensive experience working with several major cloud platforms, including AWS, Azure, and GCP. My experience spans across various services, including:

- AWS: I’ve utilized EC2 for server provisioning, S3 for object storage, Lambda for serverless computing, RDS for database management, and CloudFormation for infrastructure-as-code. I’ve built and deployed numerous applications on AWS, and I’m comfortable with its security and IAM features.

- Azure: My experience with Azure includes working with Virtual Machines, Blob Storage, Azure Functions (serverless), and Azure SQL Database. I’ve used Azure DevOps for CI/CD pipeline development and deployment.

- GCP: On GCP, I’ve worked with Compute Engine, Cloud Storage, Cloud Functions, Cloud SQL, and Kubernetes Engine. I’m familiar with the Google Cloud Platform’s strengths in data analytics and machine learning.

I’m adept at choosing the right platform for specific projects based on factors like cost, scalability, and the specific services required. I also understand the nuances of each platform’s architecture and best practices.

Q 17. Describe your experience with containerization technologies (e.g., Docker, Kubernetes).

I’m proficient in containerization technologies, primarily Docker and Kubernetes. Docker allows me to package applications and their dependencies into isolated containers, ensuring consistency across different environments (development, testing, production). This simplifies deployment and avoids the ‘it works on my machine’ problem. I’m experienced in creating Dockerfiles, building and managing images, and deploying containers to various platforms.

Kubernetes takes containerization a step further by providing an orchestration layer for managing and scaling containerized applications. I’ve used Kubernetes to deploy and manage large-scale applications, taking advantage of its features like automatic scaling, self-healing, and service discovery. I’m familiar with concepts like deployments, pods, services, and namespaces, and I understand the importance of Kubernetes’ declarative approach to infrastructure management. A recent project involved using Kubernetes to manage a microservices architecture, resulting in improved scalability and resilience.

Q 18. How do you approach debugging complex code issues?

Debugging complex code is a systematic process. My approach typically involves:

- Reproduce the issue: First, I focus on consistently reproducing the bug. This often involves carefully documenting the steps to reproduce it and creating a minimal reproducible example (MRE).

- Gather information: I use logging, debugging tools (like debuggers or profilers), and monitoring systems to collect relevant information about the program’s behavior. This includes examining stack traces, network traffic, and resource usage.

- Isolate the problem: Through binary search or systematic elimination, I try to narrow down the source of the error. This often involves commenting out sections of code or using unit tests to isolate the problematic area.

- Understand the root cause: Once I’ve identified the area causing the bug, I dive into the code to understand why the error is occurring. This might involve analyzing the code’s logic, reviewing the relevant documentation, or even looking for related issues online.

- Implement the fix: After understanding the root cause, I implement a fix and thoroughly test it to make sure the problem is resolved and I haven’t introduced new bugs.

Using version control helps immensely during the debugging process, making it easy to revert changes if needed. Collaboration with team members can also be invaluable in identifying blind spots.

Q 19. What is your experience with different data structures (e.g., hash tables, trees)?

I have experience using a wide array of data structures, tailored to the specific needs of a project. My experience includes:

- Hash Tables: I frequently utilize hash tables for fast key-value lookups. Their O(1) average time complexity for search, insertion, and deletion makes them ideal for scenarios like caching and symbol tables. For instance, in a recent project, I used a hash table to implement a fast in-memory cache for frequently accessed data, significantly improving response times.

- Trees: I’ve used various tree structures like binary search trees (BSTs), AVL trees, and red-black trees, each with its own trade-offs regarding balance and search efficiency. Binary search trees keep data in sorted order and support efficient searches as long as they stay reasonably balanced (a plain BST can degrade to O(n) if keys are inserted in sorted order), while self-balancing trees like AVL and red-black trees guarantee logarithmic time complexity even in the worst-case scenario.

- Other Structures: Beyond these, I’m also comfortable using arrays, linked lists, stacks, queues, graphs, and heaps, selecting the appropriate data structure based on the specific performance requirements and characteristics of the data being manipulated.

Understanding the strengths and weaknesses of various data structures allows me to select the optimal structure for a given problem, resulting in more efficient and maintainable code.
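As one illustration of choosing a structure for the job, the in-memory cache mentioned under hash tables could look roughly like the sketch below. It is a minimal least-recently-used cache built on Python's `OrderedDict`; the `LRUCache` class is a hypothetical example, not a description of any particular project.

```python
from collections import OrderedDict


class LRUCache:
    """Tiny least-recently-used cache built on a hash table (OrderedDict)."""
    def __init__(self, capacity):
        self.capacity = capacity
        self._items = OrderedDict()

    def get(self, key, default=None):
        if key not in self._items:
            return default
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def put(self, key, value):
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # evict least recently used


cache = LRUCache(capacity=2)
cache.put("a", 1)
cache.put("b", 2)
cache.get("a")       # "a" is now the most recently used key
cache.put("c", 3)    # evicts "b"
```

Lookups, insertions, and evictions all run in O(1) on average, which is what makes a hash-based structure a natural fit here.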

Q 20. Explain the concept of Big O notation and its importance in algorithm analysis.

Big O notation is a mathematical notation used to describe the performance or complexity of an algorithm. Specifically, it describes how the runtime or space requirements of an algorithm grow as the input size grows. It focuses on the dominant factors and ignores constant factors. For instance, an algorithm with a runtime of O(n) means its runtime grows linearly with the input size n, while an algorithm with a runtime of O(n^2) has a runtime that grows quadratically with n.

Importance: Big O notation is crucial for algorithm analysis because it allows us to compare the efficiency of different algorithms independently of specific hardware or implementation details. It helps in making informed decisions about which algorithm to choose for a given task. For example, an O(n log n) algorithm will generally outperform an O(n^2) algorithm for large input sizes, even if the constant factors in the former are higher.

Examples:

- O(1): Constant time – Accessing an element in an array by index.

- O(log n): Logarithmic time – Binary search in a sorted array.

- O(n): Linear time – Traversing an array.

- O(n log n): Linearithmic time – Merge sort.

- O(n^2): Quadratic time – Bubble sort.

- O(2^n): Exponential time – Finding all subsets of a set.

By understanding Big O notation, we can make informed choices about algorithm selection and optimize code for better performance, especially as datasets grow.
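To get a feel for the gap between O(n) and O(log n), the sketch below counts the steps each search takes on the same sorted input. The helper names `linear_search_steps` and `binary_search_steps` are made up for this example, and step counts are used instead of timings so the result does not depend on hardware.

```python
def linear_search_steps(items, target):
    """Count comparisons made by a left-to-right scan: O(n)."""
    steps = 0
    for item in items:
        steps += 1
        if item == target:
            break
    return steps


def binary_search_steps(items, target):
    """Count loop iterations of binary search on sorted input: O(log n)."""
    low, high, steps = 0, len(items) - 1, 0
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            low = mid + 1
        else:
            high = mid - 1
    return steps


data = list(range(1_000_000))
linear_steps = linear_search_steps(data, 999_999)   # worst case: last element
binary_steps = binary_search_steps(data, 999_999)
```

For one million elements, the linear scan needs a million comparisons in the worst case, while binary search needs at most about twenty iterations.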

Q 21. What are some common algorithms and their applications?

Many common algorithms exist, each with specific applications. Here are a few examples:

- Sorting Algorithms: Merge sort (O(n log n)), quicksort (average O(n log n), worst case O(n^2)), bubble sort (O(n^2)). Sorting is fundamental in many applications, from database indexing to data visualization.

- Searching Algorithms: Binary search (O(log n)) for sorted data, linear search (O(n)) for unsorted data. Searching is crucial for retrieving information efficiently.

- Graph Algorithms: Breadth-first search (BFS) and depth-first search (DFS) are used for traversing graphs and finding paths. Applications range from network routing to social network analysis.

- Dynamic Programming: Used to solve optimization problems by breaking them down into smaller overlapping subproblems. Examples include finding the shortest path in a graph or the optimal knapsack solution.

- Greedy Algorithms: These algorithms make locally optimal choices at each step, hoping to find a global optimum. Examples include Dijkstra’s algorithm for shortest paths and Huffman coding for data compression.

The choice of algorithm depends heavily on the specific problem and the constraints (time complexity, space complexity, etc.). Understanding the strengths and weaknesses of each algorithm is critical for writing efficient and effective code.
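As an example of one of the graph algorithms above, here is a short breadth-first search sketch in Python that finds a path with the fewest edges in an unweighted graph. The `bfs_shortest_path` function and the sample graph are assumptions made up for illustration.

```python
from collections import deque


def bfs_shortest_path(graph, start, goal):
    """Return a shortest path (fewest edges) from start to goal, or None."""
    queue = deque([start])
    parent = {start: None}          # also serves as the visited set
    while queue:
        node = queue.popleft()
        if node == goal:
            path = []
            while node is not None:  # walk parents back to the start
                path.append(node)
                node = parent[node]
            return path[::-1]
        for neighbor in graph.get(node, []):
            if neighbor not in parent:
                parent[neighbor] = node
                queue.append(neighbor)
    return None


graph = {
    "A": ["B", "C"],
    "B": ["D"],
    "C": ["D", "E"],
    "D": ["F"],
    "E": ["F"],
    "F": [],
}
path = bfs_shortest_path(graph, "A", "F")
```

Because BFS explores nodes level by level, the first time it reaches the goal it has found a path with the minimum number of edges. For weighted graphs, an algorithm such as Dijkstra's would be needed instead.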

Q 22. How would you design a scalable system for [specific scenario]?

Designing a scalable system for a specific scenario, let’s say a social media platform like Twitter, requires careful consideration of several factors. The core principle is to build a system that can handle increasing load gracefully without significant performance degradation. This involves using a microservices architecture, horizontal scaling, and caching strategies.

Microservices Architecture: Instead of a monolithic application, we break down the platform into smaller, independent services (e.g., user authentication, timeline generation, post creation, messaging). Each service can be scaled independently based on its specific needs. This allows for flexibility and fault isolation; if one service fails, others can continue operating.

Horizontal Scaling: We use multiple instances of each microservice running on different servers. If the load increases, we add more instances to distribute the workload. This contrasts with vertical scaling (increasing the resources of a single server), which has limitations. Load balancers distribute incoming requests across these instances.

Caching: We implement caching at multiple levels (e.g., CDN for static content, in-memory caching like Redis for frequently accessed data, database caching). This significantly reduces the load on databases and improves response times. A well-designed caching strategy is crucial for performance.

Database: We choose a database suitable for handling large amounts of data and high write throughput. A NoSQL database like Cassandra or MongoDB might be preferred over a relational database for this type of application, due to its horizontal scalability.

Asynchronous Processing: Tasks like sending notifications or processing images can be handled asynchronously using message queues (e.g., Kafka, RabbitMQ). This prevents these operations from blocking the main request processing path and improves overall responsiveness.

Example: Imagine a user posting a tweet. The post creation service handles the data, the timeline service updates follower timelines, and the notification service sends out notifications. Each of these operates independently and can be scaled separately.
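The flow in this example can be sketched with a small publish/subscribe bus. Everything here is hypothetical: the `EventBus` class, the `post.created` topic, and the sample users. In a real deployment the services would run as separate processes and communicate through a network broker, not through an in-process object.

```javascript
// Minimal publish/subscribe bus; service and topic names are hypothetical.
class EventBus {
  constructor() {
    this.handlers = new Map(); // topic -> array of handler functions
  }

  subscribe(topic, handler) {
    const list = this.handlers.get(topic) ?? [];
    list.push(handler);
    this.handlers.set(topic, list);
  }

  publish(topic, event) {
    for (const handler of this.handlers.get(topic) ?? []) {
      handler(event);
    }
  }
}

const followers = new Map([["alice", ["bob", "carol"]]]);
const timelines = new Map(); // follower -> array of post texts, newest first
const notifications = [];
const bus = new EventBus();

// Timeline service: adds the post to each follower's timeline.
bus.subscribe("post.created", ({ author, text }) => {
  for (const follower of followers.get(author) ?? []) {
    const timeline = timelines.get(follower) ?? [];
    timeline.unshift(text);
    timelines.set(follower, timeline);
  }
});

// Notification service: records one notification per follower.
bus.subscribe("post.created", ({ author }) => {
  for (const follower of followers.get(author) ?? []) {
    notifications.push(`${follower}: new post from ${author}`);
  }
});

// Post creation service: stores the post (omitted) and announces it.
function createPost(author, text) {
  const post = { author, text };
  bus.publish("post.created", post);
  return post;
}

createPost("alice", "first post");
console.log(timelines.get("bob")); // [ 'first post' ]
console.log(notifications.length); // 2
```

The post creation service knows nothing about timelines or notifications, which is what lets each service be deployed and scaled on its own.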

Q 23. Explain your understanding of different software development lifecycles.

Software development lifecycles define the stages involved in building software. Several methodologies exist, each with its own strengths and weaknesses. Here are a few popular ones:

- Waterfall: A linear, sequential approach. Each phase (requirements, design, implementation, testing, deployment) must be completed before moving to the next. It’s simple but inflexible and doesn’t adapt well to changing requirements.

- Agile (Scrum, Kanban): Iterative and incremental approaches focusing on flexibility and collaboration. Work is broken down into short sprints (Scrum) or visualized on a Kanban board. Frequent feedback and adaptation are key. Agile is ideal for projects with evolving requirements.

- DevOps: A cultural and technical shift emphasizing collaboration between development and operations teams. It aims to automate processes, increase deployment frequency, and improve monitoring. DevOps teams rely on continuous integration and continuous delivery (CI/CD) for faster and more reliable releases.

- Spiral: A risk-driven approach that combines elements of waterfall and prototyping. Each iteration involves planning, risk analysis, development, and evaluation. This is useful for complex projects where risks need careful management.

The choice of lifecycle depends on project size, complexity, and the need for flexibility. For smaller projects, a simplified Agile approach might suffice, while larger, more complex projects might benefit from a more structured approach like Spiral.

Q 24. Describe your experience with different programming languages and frameworks.

I have extensive experience with several programming languages and frameworks. My proficiency includes:

- Java: I’ve used Java extensively for enterprise-level applications, leveraging Spring Boot for microservices development and building robust, scalable systems. I’m familiar with its object-oriented paradigm and its strong ecosystem of libraries.

- Python: I’ve used Python for data analysis, scripting, and web development (Django, Flask). Python’s readability and versatility make it ideal for rapid prototyping and data science tasks.

- JavaScript (Node.js, React): I have experience building web applications using Node.js for backend development and React for frontend development. I understand asynchronous programming concepts and building responsive user interfaces.

- SQL and NoSQL Databases: I am proficient in working with relational databases (MySQL, PostgreSQL) and NoSQL databases (MongoDB, Cassandra). I understand the strengths and weaknesses of each database type and can choose the appropriate one based on project requirements.

I am always eager to learn new languages and frameworks. I believe continuous learning is crucial in this ever-evolving field.

Q 25. How do you stay up-to-date with the latest technologies?

Staying up-to-date with the latest technologies is a continuous process. I employ several strategies:

- Online Courses and Tutorials: Platforms like Coursera, edX, and Udemy offer a wealth of resources to learn new technologies and deepen existing skills. I regularly take courses on relevant topics.

- Industry Blogs and Publications: I follow influential blogs and publications in the software engineering space to stay informed about the latest trends, best practices, and emerging technologies.

- Conferences and Workshops: Attending conferences and workshops provides opportunities to learn from experts, network with peers, and discover new ideas. It’s a great way to stay engaged with the community.

- Open-Source Contributions: Contributing to open-source projects is a fantastic way to learn from experienced developers, gain practical experience, and stay ahead of the curve.

- Experimentation and Personal Projects: I frequently work on personal projects to experiment with new technologies and reinforce my learning. This is a great way to apply theoretical knowledge to practical situations.

By combining these approaches, I maintain a strong understanding of current technologies and trends.

Q 26. What are your strengths and weaknesses as a Software Engineer?

Strengths: I’m highly analytical, with a strong problem-solving focus and work ethic. I excel at designing efficient and scalable systems, and I’m proficient in multiple programming languages and frameworks. I’m a collaborative team player with excellent communication skills. I am adaptable and comfortable working in dynamic environments.

Weaknesses: While I am a strong communicator, I sometimes need to be more assertive in expressing my opinions, especially when facing conflicting viewpoints. I am also working on my time management so that I can deliver consistently high-quality work on schedule.

Q 27. Describe a challenging project and how you overcame obstacles.

In a previous project, we were tasked with developing a real-time chat application that needed to handle a large number of concurrent users. The initial architecture was not optimized for the volume of messages and user connections, so we ran into scalability and performance problems early on.

To overcome this, we implemented several strategies:

- WebSockets: We transitioned from traditional HTTP polling to WebSockets for real-time communication. This significantly improved responsiveness and reduced server load.

- Message Queues: We introduced message queues so that messages could be processed asynchronously. This removed the bottleneck of doing all delivery work synchronously at the moment each message was sent.

- Load Balancing: We employed a load balancer to distribute incoming connections across multiple servers, ensuring high availability and fault tolerance.

- Database Optimization: We optimized our database schema and queries to improve data retrieval speed.

Through these changes, we significantly improved the application’s scalability and performance. This project highlighted the importance of choosing the right technologies and carefully designing the architecture for optimal performance under load.
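As an illustration of the load-balancing point, long-lived connections such as WebSockets are often assigned with a least-connections policy, because connection counts can drift apart when some users stay online much longer than others. The sketch below is an assumption about how such a policy might look, not the balancer used in the project described above. The server names are hypothetical.

```javascript
// Least-connections sketch; server names are hypothetical.
class LeastConnectionsBalancer {
  constructor(serverNames) {
    if (serverNames.length === 0) {
      throw new Error("at least one server is required");
    }
    // server name -> number of currently open connections
    this.connections = new Map(serverNames.map((name) => [name, 0]));
  }

  // Assign a new connection to the server with the fewest open connections.
  // Ties go to the server that was listed first.
  connect() {
    let chosen = null;
    let fewest = Infinity;
    for (const [name, count] of this.connections) {
      if (count < fewest) {
        chosen = name;
        fewest = count;
      }
    }
    this.connections.set(chosen, fewest + 1);
    return chosen;
  }

  // Record that a connection to the given server was closed.
  disconnect(name) {
    const count = this.connections.get(name) ?? 0;
    this.connections.set(name, Math.max(0, count - 1));
  }
}

const chatBalancer = new LeastConnectionsBalancer(["chat-1", "chat-2"]);
const assigned = [
  chatBalancer.connect(),
  chatBalancer.connect(),
  chatBalancer.connect(),
];

// Both users on chat-1 leave, so it becomes the least loaded server.
chatBalancer.disconnect("chat-1");
chatBalancer.disconnect("chat-1");
const afterDisconnects = chatBalancer.connect();

console.log(assigned);         // [ 'chat-1', 'chat-2', 'chat-1' ]
console.log(afterDisconnects); // chat-1
```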

Q 28. How do you handle working in a team environment?

I thrive in team environments. I believe that effective teamwork is crucial for successful software development. I actively contribute to team discussions, share my knowledge and expertise, and readily assist my colleagues. I’m a strong believer in open communication and collaborative problem-solving. I am comfortable taking on leadership roles when appropriate, but also excel as a team member, always prioritizing the overall success of the project. I respect diverse perspectives and actively seek feedback to improve my own performance and contribute to a positive and productive team dynamic.

Key Topics to Learn for Software Engineer Interview

- Data Structures and Algorithms: Understanding fundamental data structures like arrays, linked lists, trees, graphs, and hash tables is crucial. Practice implementing and analyzing their time and space complexity. This forms the bedrock of efficient code design.

- Object-Oriented Programming (OOP): Master the principles of encapsulation, abstraction, inheritance, and polymorphism. Be prepared to discuss their practical applications in designing robust and maintainable software. Practice designing classes and their interactions to solve common problems.

- Databases: Familiarize yourself with relational (SQL) and NoSQL databases. Understand database design principles, query writing, and optimization strategies such as indexing. Prepare to discuss schema design and data modeling.

- System Design: Practice designing scalable and distributed systems. Understand concepts like load balancing, caching, and message queues. Be ready to discuss trade-offs in architectural choices.

- Software Testing and Debugging: Understand different testing methodologies (unit, integration, system) and debugging techniques. Being able to effectively test and debug your code is critical for any software engineer.

- Version Control (Git): Demonstrate a strong understanding of Git workflows, branching strategies, and collaboration using Git. This is a fundamental skill in modern software development.

- Problem-Solving and Communication: Technical interviews often assess your problem-solving skills and ability to communicate your thought process clearly and effectively. Practice articulating your solutions and asking clarifying questions.

Next Steps

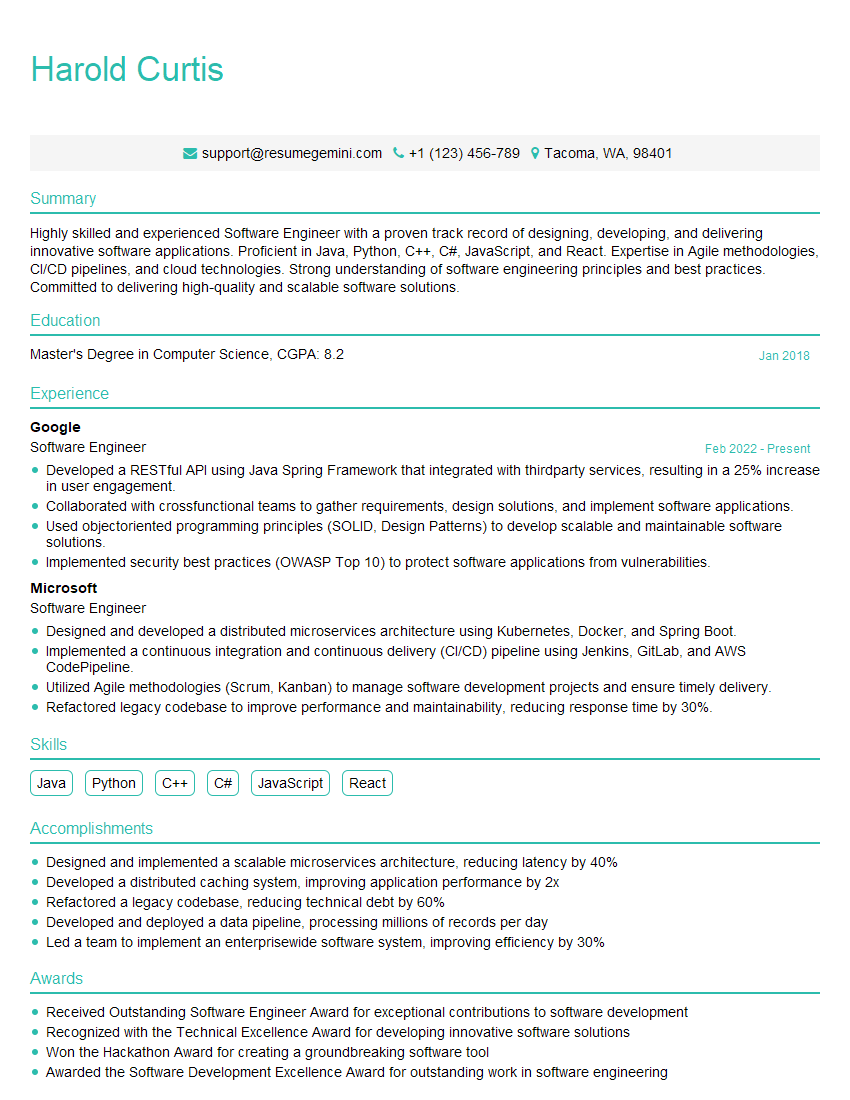

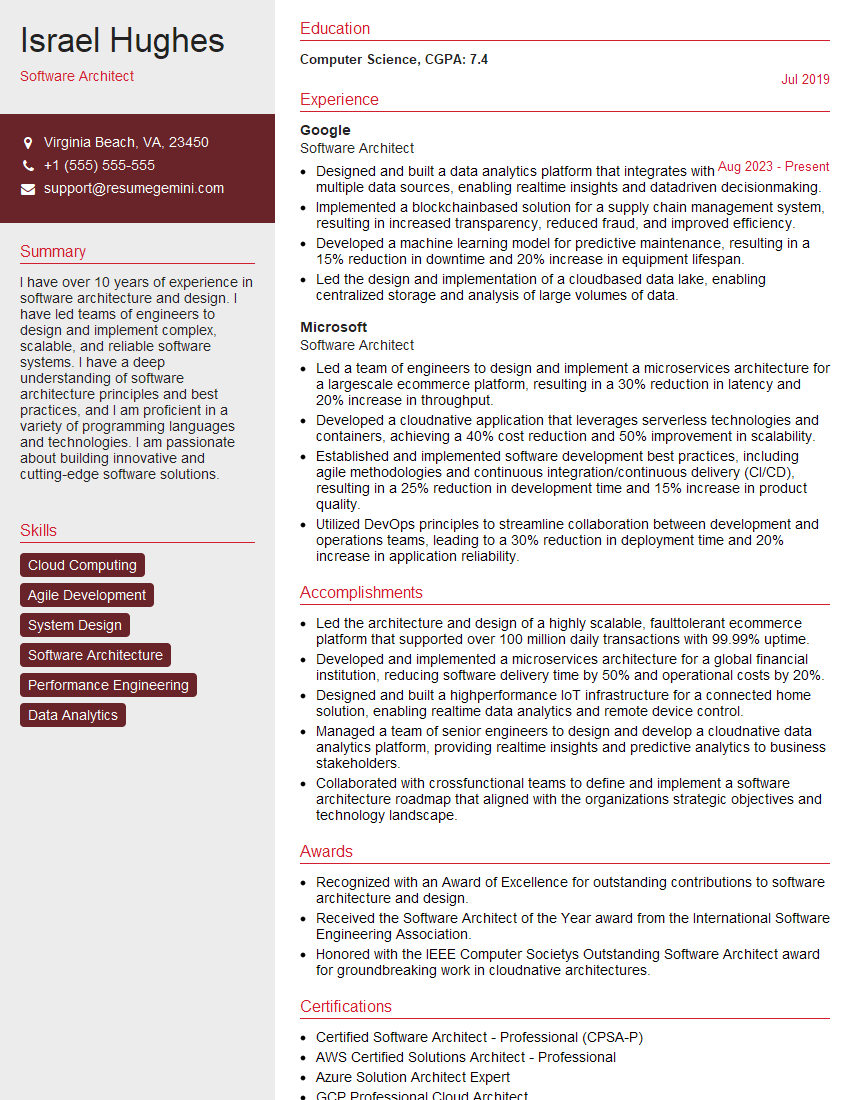

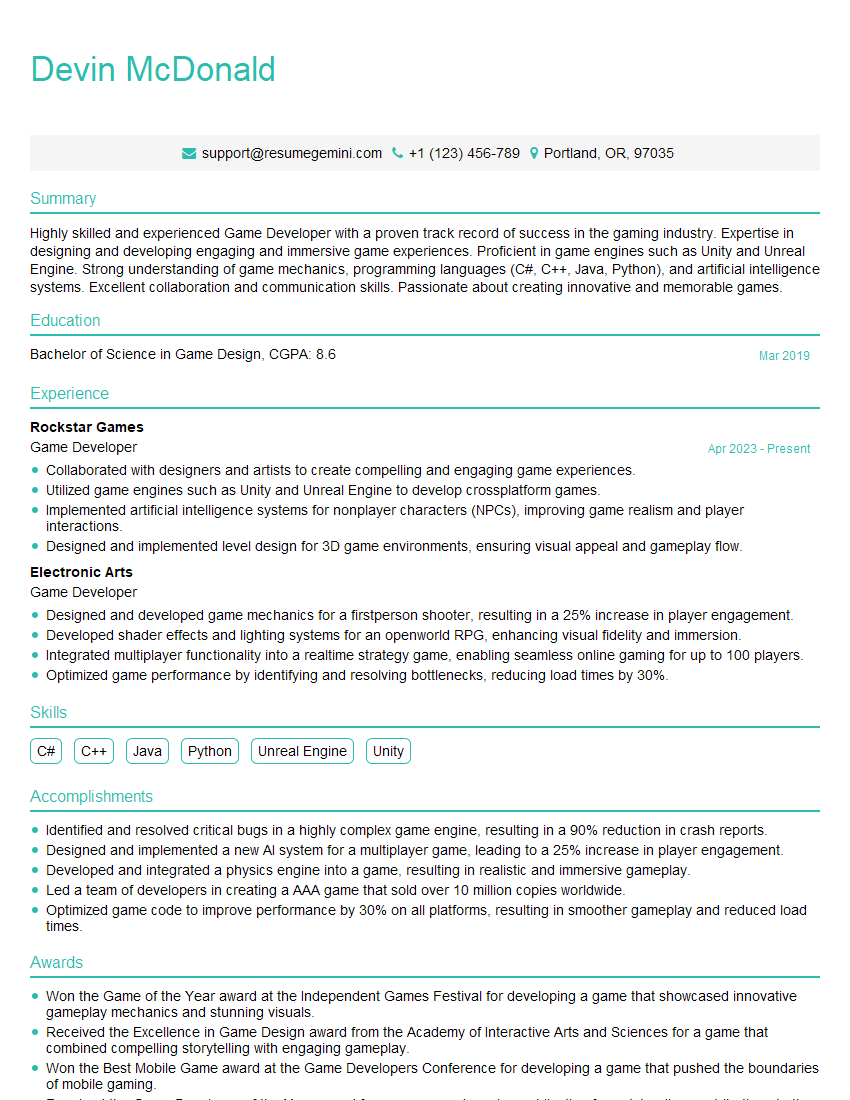

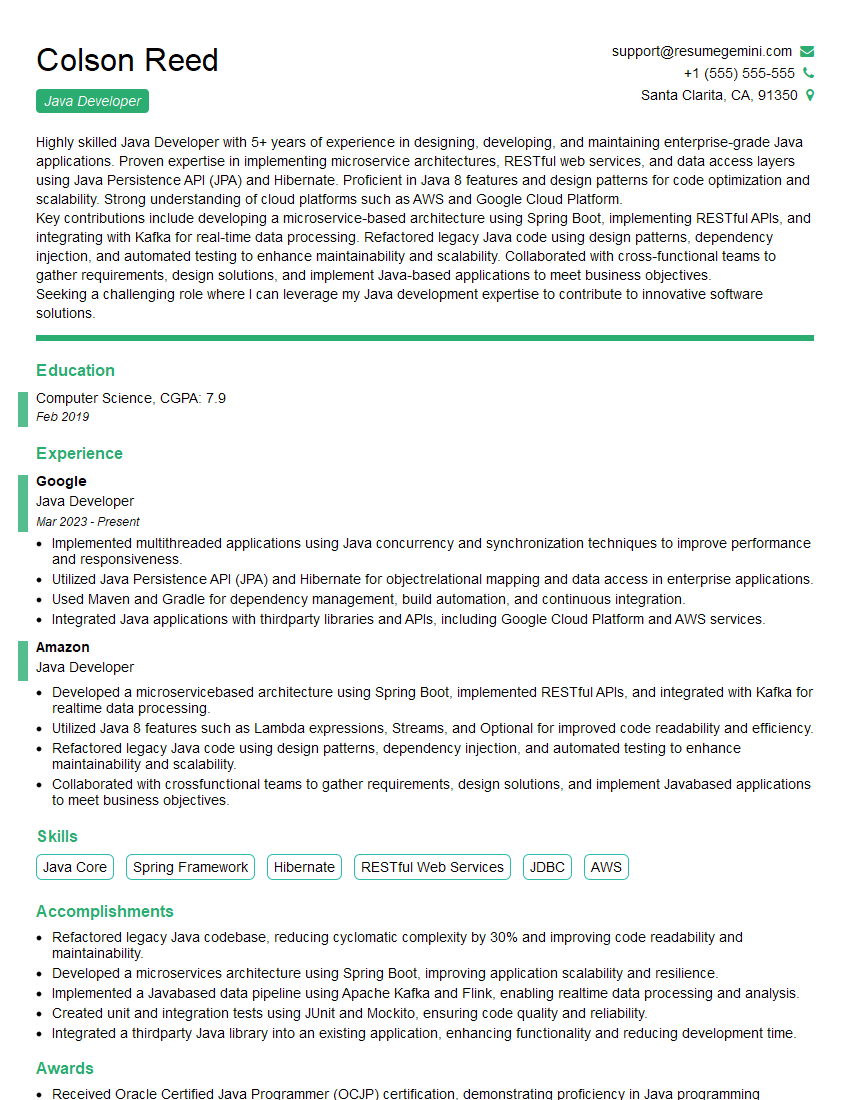

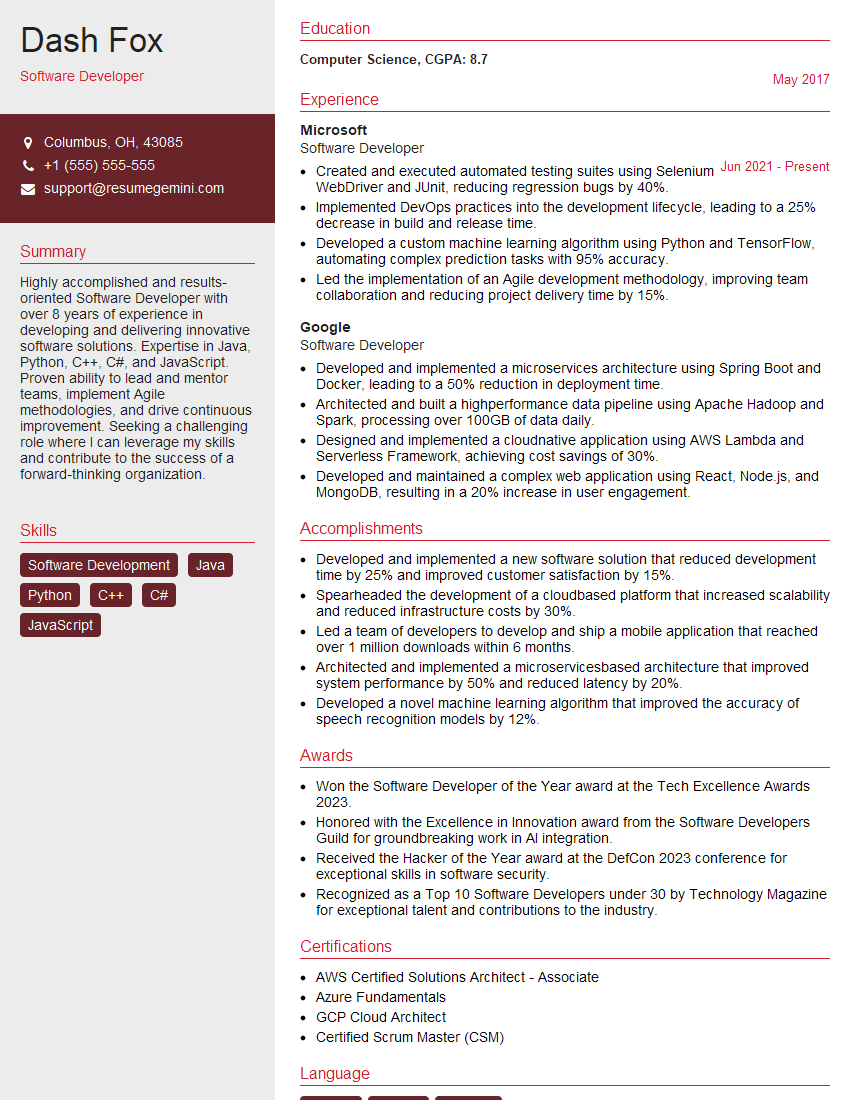

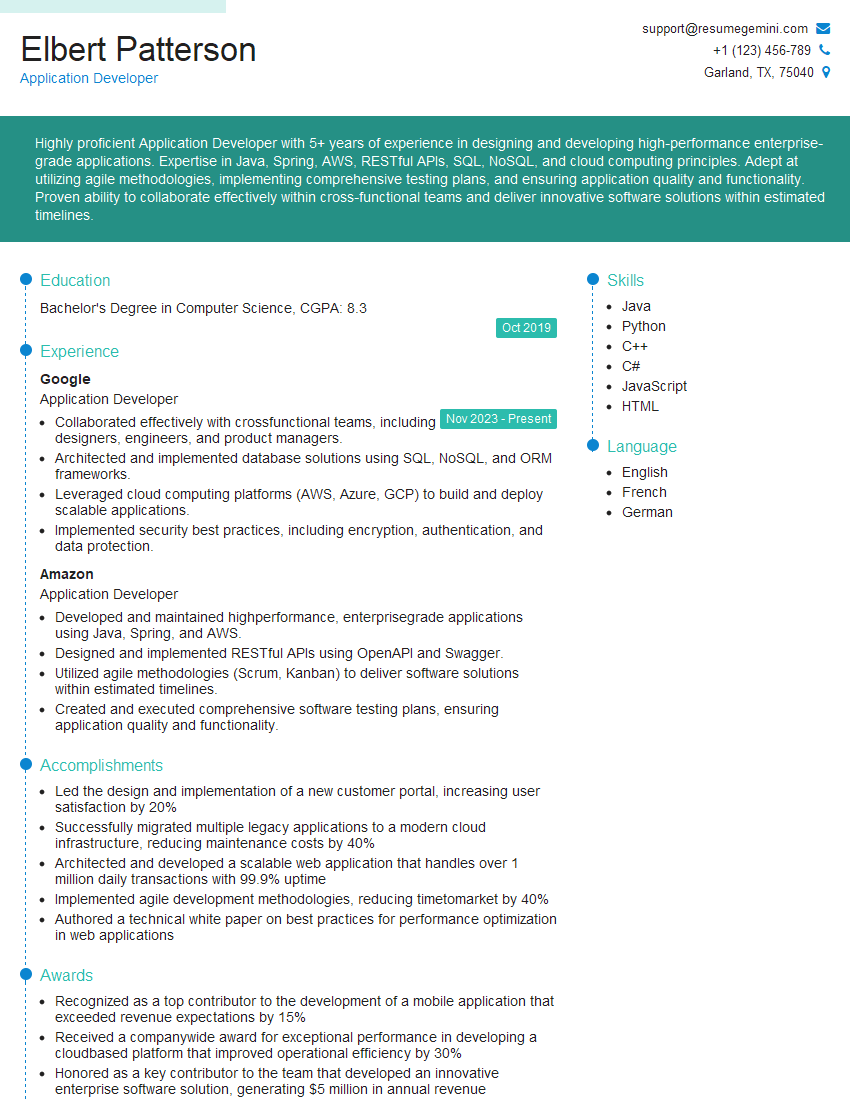

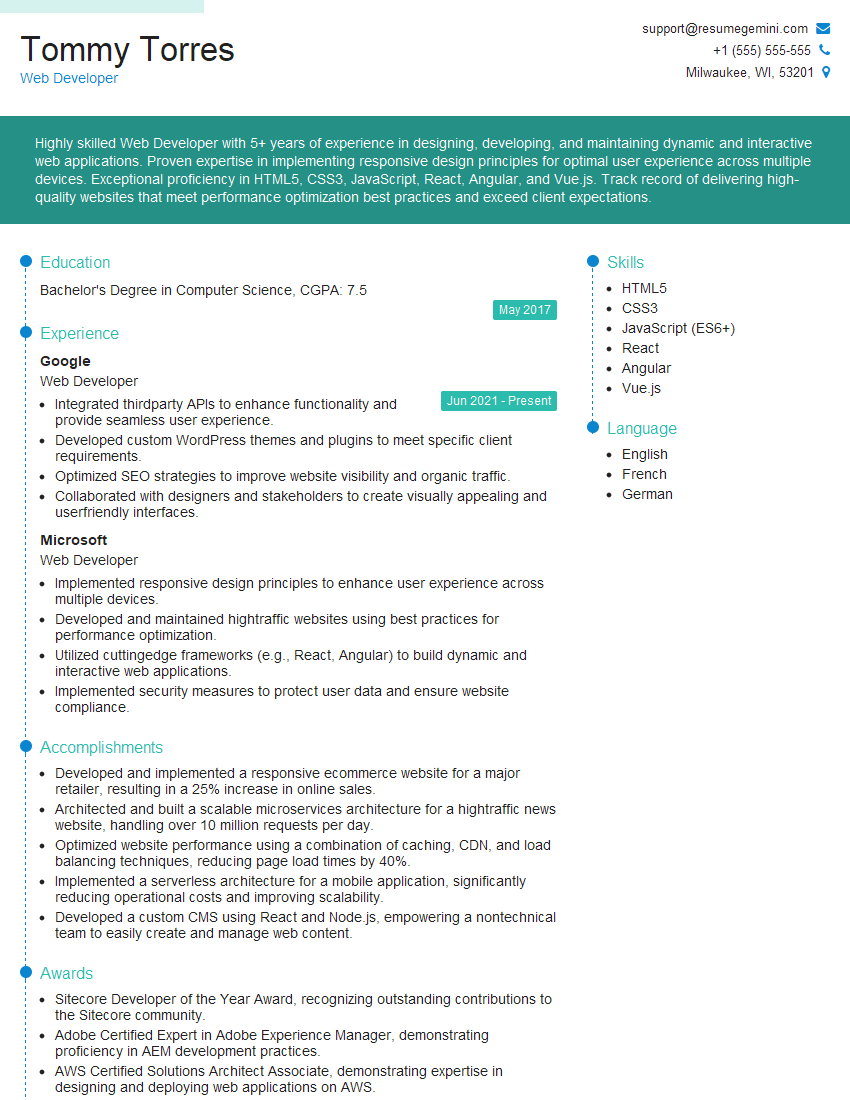

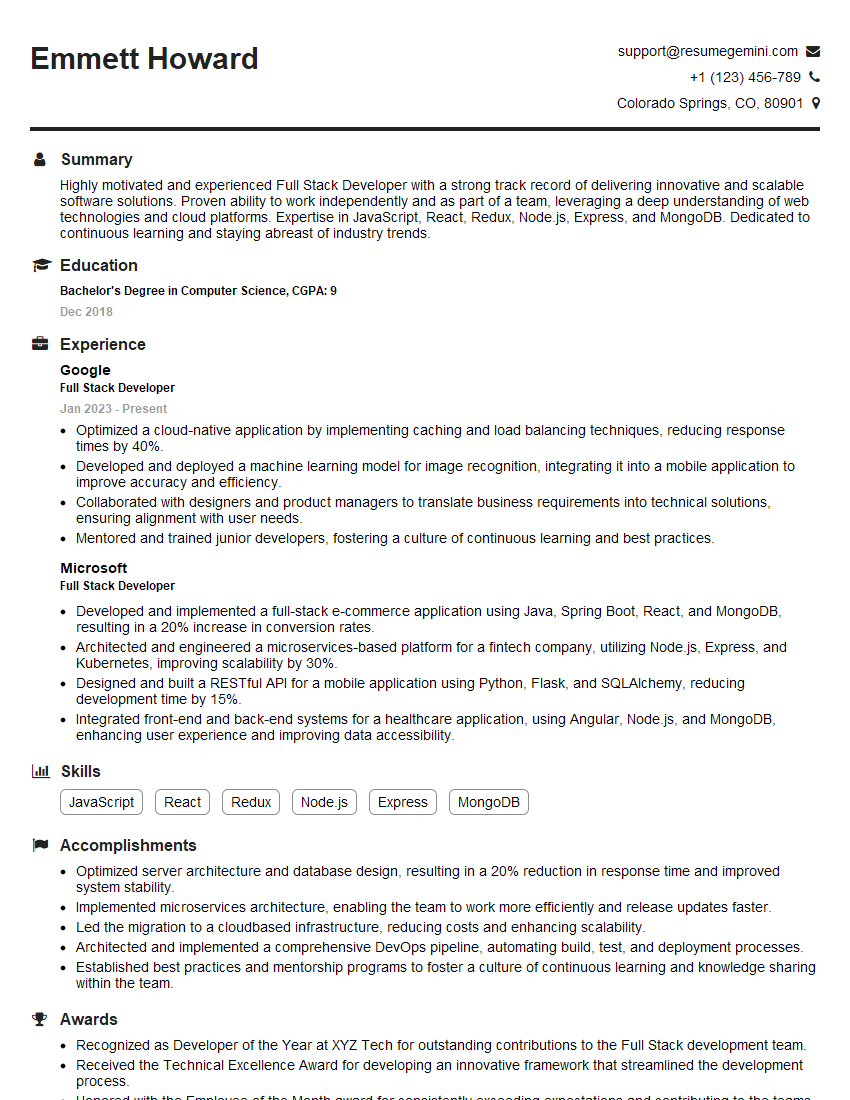

Mastering the skills of a Software Engineer opens doors to exciting and rewarding career opportunities, offering a path to high growth and intellectual stimulation. To maximize your chances of landing your dream job, it’s vital to present yourself effectively. Crafting an ATS-friendly resume is key to getting your application noticed by recruiters and hiring managers. Use ResumeGemini, a trusted resource, to build a professional and impactful resume that showcases your skills and experience. Examples of resumes tailored specifically for Software Engineer positions are available to help you get started.
