The thought of an interview can be nerve-wracking, but the right preparation can make all the difference. Explore this comprehensive guide to Special Effects Cinematography interview questions and gain the confidence you need to showcase your abilities and secure the role.

Questions Asked in Special Effects Cinematography Interview

Q 1. Explain your experience with different compositing software (e.g., Nuke, After Effects).

My compositing experience spans several industry-standard software packages. Nuke is my go-to for high-end feature film work, particularly when dealing with complex shots requiring extensive roto, keying, and 3D integration. Its node-based workflow allows for highly customizable and reproducible effects. I’ve used it extensively on projects involving large-scale environment work and seamless integration of CGI elements with live-action footage. After Effects, on the other hand, shines in its speed and ease of use for smaller projects or tasks requiring quick turnaround times, like motion graphics and simpler compositing needs. I’ve employed it for numerous commercials and short films where efficiency was key. For instance, on a recent commercial, I used After Effects to create a seamless transition between a live-action scene and a CGI environment, using rotoscoping and keying techniques to perfectly blend the two.

I also have experience with Fusion, a node-based compositor known for its speed and flexibility. Working across these packages lets me adapt quickly to different project demands and maintain a high level of quality across platforms and workflows.

Q 2. Describe your workflow for creating realistic fire or smoke effects.

Creating realistic fire and smoke effects requires a multi-faceted approach. I usually start either with pre-rendered simulations (from software like Houdini or FumeFX) or by creating them myself in a suitable 3D package. These simulations are then imported into my compositing software (Nuke or After Effects), where the layering and blending happen. I might use multiple passes from the simulation, such as a density pass, a temperature pass, and an emission pass, to create depth and realism. Adding subtle details like flickering, wisps, and variations in opacity is crucial. I often enhance the overall effect with additional layers such as light wraps and glows, which imitate the way light interacts with the smoke or fire. Imagine a burning building: the way embers glow and the way smoke curls and dissipates each demand individual attention to look real.

Furthermore, color grading plays a vital role. Correct color temperatures and subtly adjusting the hue and saturation across different parts of the effect can add a substantial layer of believability. I often use footage from real-world fire and smoke as references and guides to ensure accuracy.
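The layering described above can be sketched per pixel. This is a minimal illustration, not any compositor's API: it assumes premultiplied colors, and the function names and sample values are hypothetical. Smoke occludes the plate, so it is merged with "over"; fire emits light, so it is added on top.

```python
def over(fg_rgb, fg_alpha, bg_rgb):
    """Premultiplied 'over' merge: fg + bg * (1 - alpha)."""
    return tuple(f + b * (1.0 - fg_alpha) for f, b in zip(fg_rgb, bg_rgb))


def plus(a_rgb, b_rgb):
    """Additive merge, a common choice for emissive elements such as flames."""
    return tuple(a + b for a, b in zip(a_rgb, b_rgb))


def comp_fire_pixel(bg_rgb, smoke_rgb, smoke_alpha, fire_rgb):
    # Smoke blocks part of the background, so it goes over the plate first.
    with_smoke = over(smoke_rgb, smoke_alpha, bg_rgb)
    # Fire adds light instead of occluding, so it is summed on top.
    return plus(with_smoke, fire_rgb)


# Hypothetical sample values for a single pixel.
pixel = comp_fire_pixel(
    bg_rgb=(0.2, 0.3, 0.4),
    smoke_rgb=(0.05, 0.05, 0.05),  # already multiplied by alpha
    smoke_alpha=0.5,
    fire_rgb=(0.8, 0.3, 0.0),
)
print(pixel)
```

In production the same two merges would run on whole image passes; the per-pixel form is used here only to keep the arithmetic visible.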

Q 3. How do you handle complex lighting scenarios in a 3D environment?

Handling complex lighting scenarios in a 3D environment is about understanding the principles of light interaction – reflection, refraction, diffusion, and absorption. I start by analyzing the scene’s lighting requirements. Are we aiming for a realistic representation or a stylized interpretation? Then, I utilize the tools offered by the 3D software (often Maya or Houdini) to create and manipulate light sources. This can involve anything from simple point lights to complex area lights and volumetric lighting effects. The software’s lighting shaders and render settings are crucial here. I carefully adjust parameters such as intensity, color temperature, and falloff to achieve the desired look. For instance, I might use HDRI (High Dynamic Range Imaging) environments to simulate realistic reflections and global illumination.

Post-render, compositing becomes critical. I might add or adjust lights in my compositing software to fine-tune the final image, blending and layering effects such as rim lighting or backlighting to enhance depth and realism. For instance, on a space scene, accurate lighting would be critical to depicting the realistic reflection and scattering of light on spacecraft and other objects.

Q 4. What are your preferred methods for creating convincing character animation?

Creating convincing character animation involves a blend of technical skills and artistic intuition. I heavily rely on techniques such as keyframing, motion capture data cleanup (using software like MotionBuilder), and sometimes even procedural animation. Keyframing allows for precise control over the character’s movements, but it’s time-consuming. Motion capture data offers a great foundation, but extensive cleanup and refinement are often needed to achieve a believable and natural performance. Procedural animation can be incredibly useful for repetitive tasks like crowd simulation.

The most critical aspect, however, lies in understanding the character’s personality, emotions, and the story being told. Animation isn’t just about moving points on a screen; it’s about conveying a performance. I meticulously study reference footage, analyze human movement, and consult with animators to refine my work. A successful character animation is one that’s believable and seamlessly integrated into the scene.

For example, on a recent project, we used a combination of motion capture and keyframe animation to create a believable performance for a character undergoing a period of intense emotional distress. The nuances of movement – small shifts in posture, subtle facial expressions – were crucial in conveying that emotional state.

Q 5. Explain your understanding of color correction and color grading.

Color correction and color grading are two distinct but interconnected processes crucial for achieving a unified and aesthetically pleasing final image. Color correction aims to fix technical issues, such as inconsistencies in exposure, white balance, and color casts. This involves making adjustments to ensure that the image is technically accurate and representative of the scene’s original lighting conditions. Imagine a scene shot on a sunny day with some shadows – color correction aims to balance this out.

Color grading, on the other hand, is an artistic process, where I manipulate the image’s colors to create a specific mood, style, or look. This might involve subtly altering the color saturation, contrast, and overall tone to achieve a desired visual effect. Tools like DaVinci Resolve and Baselight allow for precise control over various color parameters using nodes and curves. For instance, I might use a LUT (Look Up Table) to rapidly apply a specific color palette, enhancing the cinematic look of a scene, or perhaps create a gritty, desaturated look for a dramatic scene.
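To make the LUT idea concrete, here is a minimal sketch of a 1D lookup with linear interpolation. The five-entry curve is a made-up example, and production grading LUTs are typically 3D and far denser, so treat this as an illustration of the principle only.

```python
def apply_lut_1d(value, lut):
    """Map a 0-1 value through a 1D LUT using linear interpolation."""
    value = min(max(value, 0.0), 1.0)   # clamp to the LUT's input range
    pos = value * (len(lut) - 1)        # fractional index into the table
    lo = int(pos)
    hi = min(lo + 1, len(lut) - 1)
    t = pos - lo
    return lut[lo] * (1.0 - t) + lut[hi] * t


def grade_pixel(rgb, lut):
    """Apply the same curve to each channel of one pixel."""
    return tuple(apply_lut_1d(c, lut) for c in rgb)


# Hypothetical curve that brightens shadows and midtones.
LUT = [0.0, 0.35, 0.6, 0.8, 1.0]

print(grade_pixel((0.125, 0.5, 1.0), LUT))
```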

Q 6. How do you manage large file sizes and optimize your workflow for efficiency?

Managing large file sizes is a constant challenge in VFX. My strategy involves a multi-pronged approach. Firstly, I use lossless compression wherever possible, storing image sequences in formats like OpenEXR so that storage is reduced without discarding image data. Secondly, I work with proxies (lower-resolution versions of assets) during the initial stages of the workflow, switching to full resolution only when necessary. This drastically improves interactive performance without sacrificing quality in the final product. For example, working with low-resolution textures during animation keeps playback smooth.

Furthermore, I optimize my workflow by employing efficient rendering techniques, such as using render layers, to only render what is absolutely needed. Regularly clearing temporary files and managing my hard drive space are also vital. I also leverage cloud storage and rendering farms, where appropriate, for more extensive projects and large data processing.

Q 7. Describe your experience with motion tracking and matchmoving.

Motion tracking and matchmoving are essential techniques for seamlessly integrating CGI elements into live-action footage. Motion tracking follows features in the footage from frame to frame. Matchmoving software such as PFTrack or SynthEyes then uses those tracks to solve for the camera, calculating its position and orientation over time. This information is used to place virtual elements accurately within the scene, making them appear as if they were filmed alongside the live-action elements.

With the solve in hand, the remaining work is precisely aligning 3D models or animations with the perspective and movement of the live-action footage, which is crucial for realistic integration. I bring the tracked camera data into my 3D software (such as Maya or 3ds Max) to position and animate 3D assets accordingly. A recent project involved matchmoving a CGI spaceship into a cityscape, requiring meticulous camera tracking and careful alignment to create a believable shot.
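Once a camera has been solved, placing a virtual element comes down to projecting 3D points into the frame. The sketch below uses a simple pinhole model and makes several assumptions that are not from the text above: the point is already in camera space, the camera looks down +Z, pixels are square, and there is no lens distortion. The function name is hypothetical.

```python
def project_point(point_cam, focal_mm, sensor_width_mm, image_width, image_height):
    """Project a camera-space point to pixel coordinates (pinhole model)."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point is behind the camera")
    # Convert the focal length from millimetres to pixels.
    focal_px = focal_mm / sensor_width_mm * image_width
    u = image_width / 2.0 + focal_px * x / z
    v = image_height / 2.0 - focal_px * y / z  # image Y grows downward
    return u, v


# A point 1 unit right of the optical axis and 10 units in front of the camera.
print(project_point((1.0, 0.0, 10.0), 36.0, 36.0, 1920, 1080))
```

If a projected CG point drifts away from the tracked feature it is supposed to sit on, that drift is a direct measure of solve error.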

Q 8. How familiar are you with various rendering techniques and engines (e.g., Arnold, RenderMan, V-Ray)?

My experience encompasses a wide range of rendering techniques and engines. I’m proficient in industry-standard packages like Arnold, RenderMan, and V-Ray, each offering unique strengths. Arnold, for instance, excels in its speed and ease of use for complex scenes, making it ideal for large-scale projects. RenderMan, known for its photorealism and advanced features, is perfect for demanding shots requiring unparalleled detail. V-Ray provides a strong balance between speed, quality, and versatility, making it a go-to for many studios. My understanding extends beyond simply using these engines; I grasp their underlying principles, allowing me to optimize settings for specific projects and achieve desired artistic results. I’ve used these engines extensively on projects ranging from feature films to commercials, adapting my techniques to meet the individual requirements of each.

For example, on a recent project involving a highly detailed fantasy creature, RenderMan’s advanced subsurface scattering capabilities were crucial to achieving a convincing and realistic skin texture. In contrast, for a fast-paced commercial, Arnold’s efficiency allowed us to meet tight deadlines without compromising visual quality.

Q 9. Explain your approach to problem-solving when facing technical challenges in VFX production.

My approach to problem-solving in VFX is systematic and collaborative. I begin by thoroughly analyzing the problem, identifying the root cause, and gathering relevant data. This often involves reviewing the source material, discussing the issue with other team members, and testing different solutions. I then break down the problem into smaller, manageable tasks, prioritizing based on urgency and impact. This allows for a more focused and efficient approach.

For example, if I encounter rendering artifacts, I’d first check the scene for potential geometry issues, like overlapping polygons or incorrect normals. Then, I’d investigate lighting and shading, examining the shaders for errors or inconsistencies. Finally, I’d scrutinize the rendering engine’s settings to ensure optimal performance. If the problem persists, I would leverage online resources, consult documentation, or seek help from more experienced colleagues. This combination of technical skills, problem-solving techniques and teamwork ensures timely solutions without compromising on quality.

Q 10. How do you collaborate effectively within a VFX team?

Effective collaboration is paramount in VFX. I believe in open communication, proactive problem-solving, and a shared understanding of project goals. I actively participate in team meetings, providing constructive feedback and offering solutions. I use project management software to track progress, manage assets, and maintain a clear workflow. I prioritize clear and concise communication, ensuring everyone is on the same page, using tools like review software to ensure everyone is aligned visually.

I foster a collaborative environment by actively listening to my colleagues’ ideas and perspectives. I’m always willing to assist team members, sharing my knowledge and experience to help solve challenges. One project involved a complex sequence requiring collaboration between modelers, animators, and compositors. By actively participating in daily stand-up meetings and providing regular updates, we ensured seamless workflow and a high-quality final product.

Q 11. Describe your experience with different 3D modeling software (e.g., Maya, 3ds Max, Blender).

I have extensive experience with Maya, 3ds Max, and Blender. Maya is my preferred tool for its robust animation and rigging capabilities, particularly beneficial for character animation. 3ds Max is excellent for modeling complex environments and architectural details. Blender, with its open-source nature and powerful sculpting tools, provides an incredible versatility. My experience isn’t limited to simply using the software; I understand their strengths and weaknesses and apply them strategically.

For instance, I might use Maya for character creation and animation, 3ds Max for environment modeling, and then combine the assets in a compositing software like Nuke for final integration. My expertise allows for efficient workflow and informed choices based on the project’s needs, always aiming for the most appropriate software for each task.

Q 12. How familiar are you with different types of cameras and lenses and their effects on VFX?

Understanding cameras and lenses is fundamental to VFX. Different lenses create unique distortions and perspectives, significantly impacting the final look of a shot. A wide-angle lens, for example, will exaggerate depth and perspective, while a telephoto lens will compress the space. These effects need to be carefully considered and replicated in the digital environment to maintain visual consistency.
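The wide-versus-telephoto difference can be quantified with the standard field-of-view relation, FOV = 2 * atan(sensor width / (2 * focal length)). The sketch below assumes a rectilinear lens and a 36 mm wide sensor; the focal lengths are example values, not figures from any particular shoot.

```python
import math


def horizontal_fov_degrees(focal_mm, sensor_width_mm=36.0):
    """Horizontal field of view of a rectilinear lens, in degrees."""
    return math.degrees(2.0 * math.atan(sensor_width_mm / (2.0 * focal_mm)))


for focal in (18.0, 50.0, 200.0):
    print(f"{focal:>5.0f} mm -> {horizontal_fov_degrees(focal):.1f} degrees")
```

Matching this value on the virtual camera is what keeps CG perspective consistent with the plate.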

Knowledge of camera parameters such as aperture, shutter speed, and ISO is equally important. These affect depth of field, motion blur, and exposure, all of which need to be matched between live-action and VFX elements. My experience extends to using camera tracking software to accurately match the virtual camera to the real-world footage, ensuring seamless integration. I’ve worked with various camera formats, from traditional film cameras to modern digital cinema cameras, allowing me to accurately recreate the visual characteristics of each.

Q 13. How would you create a convincing digital double for a character?

Creating a convincing digital double involves a multi-stage process. It begins with capturing high-quality reference footage of the actor, using multiple cameras to capture all angles. This data is then used to create a 3D scan of the actor’s body. This scan serves as the base model for the digital double. Subsequently, detailed texture maps are created, often requiring additional photography to capture fine details like skin pores and wrinkles.

Next, the digital double is rigged, meaning a skeleton is added to allow for realistic animation. Facial capture technology may be used to create convincing facial expressions and movements. This often involves motion capture data and sophisticated facial animation techniques. The final step involves meticulous compositing and rendering, ensuring the digital double seamlessly integrates into the live-action footage. The lighting, shadowing, and overall visual characteristics are crucial in achieving a convincing result, and this requires a deep understanding of both the live-action and digital elements involved.

Q 14. Explain your understanding of different types of shaders and their applications.

Shaders are the fundamental building blocks of visual effects, defining how surfaces interact with light. Different shader types cater to specific material properties. Diffuse shaders, for example, simulate the even scattering of light from matte surfaces like walls, while specular shaders render the glossy reflections found on polished metals. Subsurface scattering shaders are essential for rendering realistic skin and translucent materials, mimicking the way light penetrates and scatters within the material.

Other types of shaders include emission shaders, which create self-illuminating surfaces, and displacement shaders, which actually alter the geometry of a surface for higher-fidelity detail. Bump and normal maps are closely related: they are textures that a shader reads to add surface detail without increasing polygon count. My understanding extends to creating custom shaders in languages like HLSL (High-Level Shading Language) or GLSL (OpenGL Shading Language) to achieve very specific artistic looks or to optimize performance where needed. This allows for a high degree of control and customization in achieving visually compelling results.
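As a rough illustration of the diffuse and specular terms described above, here is a sketch that combines Lambert diffuse with a Blinn-Phong highlight. It is written in Python for readability rather than HLSL or GLSL, assumes a single white light, and the `shade` function and its parameters are hypothetical names chosen for this example.

```python
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return v if length == 0.0 else tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def shade(normal, light_dir, view_dir, albedo, shininess=32.0):
    """Lambert diffuse plus Blinn-Phong specular for one white light."""
    n, l, v = normalize(normal), normalize(light_dir), normalize(view_dir)
    diffuse = max(dot(n, l), 0.0)
    if diffuse <= 0.0:
        # The surface faces away from the light: no diffuse, no highlight.
        return tuple(0.0 for _ in albedo)
    half = normalize(tuple(a + b for a, b in zip(l, v)))
    specular = max(dot(n, half), 0.0) ** shininess
    return tuple(c * diffuse + specular for c in albedo)


print(shade((0, 0, 1), (0, 1, 1), (0, 0, 1), (0.5, 0.5, 0.5)))
```

A matte wall would use a low or zero specular contribution, while polished metal would raise `shininess` and weight the highlight more heavily.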

Q 15. How would you approach creating a seamless transition between practical effects and CGI?

Seamlessly blending practical and CGI effects hinges on meticulous planning and execution. The key is to ensure visual consistency in lighting, color grading, and the overall texture of the elements. Imagine a scene where we’re creating a fiery explosion. We might use practical fire for the initial burst, capturing its organic movement and heat shimmer. Then, CGI would expand the scale and add details that are difficult to achieve practically, like debris flying through the air. To maintain consistency, we’d:

Match Plate Photography: We carefully shoot the practical effects against a greenscreen or bluescreen background, essentially creating a ‘plate’ to which the CGI elements are added. This allows for precise placement and integration.

Lighting and Color Matching: The CGI fire needs to perfectly match the color temperature and intensity of the practical fire. We use reference images and color pickers to ensure a consistent look. The overall scene lighting should also be considered to prevent any jarring differences.

Texture and Detail Matching: We’d use high-resolution scans of the practical fire to inform the textures used in the CGI elements. This creates a visual continuity that avoids a ‘painted-on’ look.

3D Modeling and Simulation: The CGI elements are meticulously created to match the scale and motion of the practical effects, often employing simulation software to create realistic movement and behavior of smoke, fire, or debris.

This multifaceted approach ensures that the transition between practical and CGI is invisible to the viewer, resulting in a believable and immersive visual experience.

Q 16. Describe your process for creating realistic textures for various materials.

Creating realistic textures involves a multi-step process that starts with understanding the material itself. For example, creating the texture of weathered wood differs significantly from creating the texture of smooth marble. My process typically involves:

Reference Gathering: Extensive research is crucial. I’d gather high-resolution photos and even physical samples of the material. This forms the basis for creating accurate textures.

Scanning and Photography: For intricate details, photogrammetry—using multiple photos to create a 3D model—is invaluable. Alternatively, I might use a 3D scanner for even higher fidelity. For simpler materials, high-resolution photography with specialized lighting techniques can be sufficient.

Texture Painting and Manipulation: Software like Substance Painter or Mari is used to create and manipulate textures. This involves adding details like scratches, bumps, imperfections, and variations in color and reflectivity. These details are critical for achieving realism.

Procedural Texture Generation: Sometimes, using procedural textures (generated by algorithms) is more efficient. This is especially helpful for creating large-scale textures or those with repetitive patterns like wood grain or brickwork. I often combine procedural and hand-painted techniques to get the best result.

Testing and Refinement: The texture is iteratively refined through rendering tests, comparing it to the reference images to identify areas that need improvement.

For instance, while creating the texture for a rusty metal surface, I would carefully study the nuances of rust—its color variations, its texture, and how light reflects off it. This attention to detail allows for the creation of a convincing visual effect.

Q 17. How do you handle feedback and revisions during the VFX process?

Feedback and revisions are integral to the VFX process. I embrace them as opportunities to refine the work and meet the director’s vision. My approach focuses on:

Clear Communication: Understanding the feedback’s intent is paramount. I’d actively engage in discussions with the director and the client, asking clarifying questions to ensure I understand the desired changes.

Organized Version Control: Using a version control system like Git allows for tracking every change, making it easy to revert to previous versions if necessary. This provides a safety net during the revision process.

Iterative Refinement: I avoid making sweeping changes in one go. Instead, I implement revisions in smaller, manageable steps. This allows for more precise control and minimizes the risk of unintended consequences.

Documentation: I maintain clear documentation of all changes made, along with explanations. This ensures transparency and facilitates collaboration among team members.

Testing and Review: Before delivering the final version, I conduct thorough testing and review the updated VFX within the context of the entire scene.

Ultimately, my goal is to create VFX that not only meets the technical standards but also effectively communicates the creative vision of the project.

Q 18. How familiar are you with pipeline management software?

I’m highly proficient with a range of pipeline management software. My experience includes working with tools like ShotGrid (formerly Shotgun) and ftrack, as well as custom-built pipeline systems. I understand the importance of these tools in organizing assets, tracking progress, and managing communication within a team. Specifically, I am comfortable with:

Task Management: Assigning tasks to team members, setting deadlines, and monitoring progress.

Asset Management: Organizing and tracking 3D models, textures, shaders, and other assets.

Version Control Integration: Seamlessly integrating the pipeline software with version control systems.

Reporting and Analytics: Generating reports to track project progress, identify bottlenecks, and measure efficiency.

My experience allows me to adapt quickly to different pipeline setups and ensure efficient project workflows.

Q 19. Describe your experience with version control systems (e.g., Git).

I have extensive experience with Git and other version control systems. I understand branching strategies, merging, conflict resolution, and the importance of commit messages. I regularly utilize Git for collaborative VFX work, ensuring that changes are tracked, allowing for easy rollback if needed, and preventing accidental overwrites. My proficiency includes:

Branching and Merging: Using feature branches to develop new features or fixes in isolation, and then merging them back into the main branch.

Conflict Resolution: Effectively resolving merge conflicts that arise when multiple people work on the same files simultaneously.

Committing and Pushing: Regularly committing changes with clear and concise commit messages that describe the changes made.

Pull Requests: Using pull requests to facilitate code review and collaborative development.

Git is an indispensable tool for managing the complexity of VFX projects, ensuring a collaborative and error-free workflow.

Q 20. What is your understanding of pre-visualization (previs) and its role in VFX?

Pre-visualization (previs) is a crucial step in the VFX pipeline. It involves creating a rough animated version of the shots before any expensive or time-consuming CGI work begins. Think of it as a storyboard that comes to life. It serves several vital functions:

Camera Planning: Previs helps determine the best camera angles and movements, ensuring that the final shots are visually compelling and effectively tell the story.

Shot Composition and Blocking: It allows for the refinement of actor and character movement, as well as the placement of virtual sets and props within the scene. This prevents wasted time and resources later.

VFX Planning: Previs helps to identify complex VFX shots early on, enabling the allocation of adequate time and resources to those areas.

Cost and Time Savings: By identifying potential problems early, previs prevents costly reshoots or extensive post-production revisions.

Communication: Previs provides a clear visual reference point for the director, VFX artists, and other team members, ensuring everyone is on the same page.

In a recent project, previs helped us realize that a planned explosion sequence required more complex CGI than initially anticipated. Because we identified this early in the process, we were able to adjust our schedule and budget accordingly. This saved us significant time and resources, leading to a much smoother production process.

Q 21. How familiar are you with different types of particle systems and their application to VFX?

I am very familiar with various particle systems and their application in VFX. Particle systems are essential for creating realistic effects involving large numbers of small elements, such as smoke, fire, water, snow, or debris. Different systems offer unique capabilities. For example:

Fluid Dynamics Simulators: These systems, like RealFlow or Houdini’s FLIP fluids, are used for simulating liquids and gases with high accuracy. They are ideal for creating realistic water splashes, smoke plumes, or even lava flows.

Particle Systems in 3D Packages: Software like Maya, 3ds Max, and Blender have built-in particle systems that are easier to use for less complex effects, such as dust, sparks, or rain. These often allow for easy control of particle properties like size, color, speed, and lifespan.

GPU-Accelerated Systems: Modern GPU-accelerated particle systems allow for the simulation of incredibly large numbers of particles in real time, significantly enhancing realism and performance. This is particularly important for large-scale effects like explosions or massive crowds.

Custom Particle Systems: Sometimes, custom-built particle systems may be needed to achieve very specific effects. This requires a deeper understanding of programming and particle simulation techniques.

Choosing the right particle system depends heavily on the desired effect, budget constraints, and the project’s overall complexity. My expertise allows me to select and implement the optimal system for each scenario.
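The particle properties mentioned above (position, speed, lifespan) can be sketched in a few lines. This is a toy CPU example under stated assumptions: constant gravity, semi-implicit Euler integration, and no collisions. The class and function names are hypothetical and do not correspond to any package's particle API.

```python
from dataclasses import dataclass

GRAVITY = (0.0, -9.81, 0.0)  # assumed constant force, in units per second squared


@dataclass
class Particle:
    position: list
    velocity: list
    lifespan: float = 1.0
    age: float = 0.0


def step(particles, dt):
    """Advance all particles by dt seconds and drop the ones that expired."""
    survivors = []
    for p in particles:
        for axis in range(3):
            p.velocity[axis] += GRAVITY[axis] * dt      # update velocity first
            p.position[axis] += p.velocity[axis] * dt   # then move with it
        p.age += dt
        if p.age < p.lifespan:
            survivors.append(p)
    return survivors


sparks = [Particle([0.0, 0.0, 0.0], [1.0, 4.0, 0.0], lifespan=0.25)]
sparks = step(sparks, 0.1)
print(sparks[0].position)
```

Dedicated solvers add forces, collisions, and spawning rules on top of this loop, but the update-then-cull structure is the same.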

Q 22. Explain your experience with creating realistic water or fluid simulations.

Realistic water and fluid simulations are achieved through a combination of fluid dynamics simulation software and careful compositing techniques. My experience involves using software like Houdini and Maya to create simulations, ranging from subtle ripples in a calm lake to the chaotic turbulence of a raging river. The process begins with defining the properties of the fluid – viscosity, density, surface tension – all influencing the behavior. Then, a mesh or particle system is used to represent the fluid, with solvers calculating its movement over time based on these properties and external forces like wind or obstacles. For instance, I worked on a project simulating a tsunami wave hitting a coastal city. We used a high-resolution simulation in Houdini, paying close attention to the details of the wave’s interaction with buildings and the resulting debris field. Post-simulation, meticulous compositing is crucial. This often involves adding finer details like foam, splashes, and reflections to enhance realism and match the lighting conditions in the scene.

Consider simulating a simple water droplet. We would define its initial shape, density, and surface tension. The solver then calculates how gravity and surface tension affect its shape as it falls and impacts a surface, creating realistic splashes and distortions. This process is then repeated and refined for larger scale water simulations.

Q 23. Describe your experience with creating believable cloth or hair simulations.

Creating believable cloth and hair simulations requires understanding the physical properties of these materials and employing specialized simulation tools. I’ve used Maya’s nCloth for cloth and XGen for hair extensively, alongside RealFlow when a shot also involves fluid interaction. The key is to define the material properties correctly (stiffness, elasticity, friction) to accurately represent how the fabric or hair will react to forces like wind, gravity, and collisions. For cloth, we might use a high-resolution mesh to capture fine details like wrinkles and folds. In hair simulations, the number of strands and their interactions are crucial for achieving realism. For example, in a recent project featuring a character with long, flowing hair, we used XGen to create and simulate thousands of individual strands, allowing for realistic movement and response to wind and the character’s actions. Later stages focus on refining these simulations, often involving hand-tweaking and careful compositing to integrate the simulations seamlessly within the scene.

Imagine simulating a flag waving in the wind. We would create a mesh representing the flag, define its material properties (weight, stiffness), and then simulate the interaction of the wind force on the flag’s surface. The result would be a realistic and dynamic flag animation showing complex folding and movement.
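The flag example can be reduced to its simplest form: a 2D strip of points pinned at one end, pushed by gravity and a constant wind, and held together by distance constraints. The sketch below uses Verlet integration with iterative constraint projection, which is one common approach and not necessarily what nCloth does internally. All parameter values are illustrative assumptions.

```python
import math


def simulate_strip(num_points=5, rest=1.0, steps=600, dt=1.0 / 60.0,
                   gravity=-9.81, wind=2.0, damping=0.98, iterations=10):
    """Simulate a pinned 2D strip and return the final point positions."""
    pos = [[i * rest, 0.0] for i in range(num_points)]
    prev = [p[:] for p in pos]
    for _ in range(steps):
        # Verlet integration: velocity is implied by the previous position.
        for i in range(1, num_points):  # point 0 is pinned to the pole
            x, y = pos[i]
            vx = (x - prev[i][0]) * damping
            vy = (y - prev[i][1]) * damping
            prev[i] = [x, y]
            pos[i] = [x + vx + wind * dt * dt, y + vy + gravity * dt * dt]
        # Pull neighbouring points back toward their rest distance.
        for _ in range(iterations):
            for i in range(num_points - 1):
                a, b = pos[i], pos[i + 1]
                dx, dy = b[0] - a[0], b[1] - a[1]
                dist = math.hypot(dx, dy)
                if dist == 0.0:
                    continue
                corr = (dist - rest) / dist
                if i == 0:
                    # The pinned point cannot move, so its neighbour takes it all.
                    b[0] -= dx * corr
                    b[1] -= dy * corr
                else:
                    a[0] += dx * corr * 0.5
                    a[1] += dy * corr * 0.5
                    b[0] -= dx * corr * 0.5
                    b[1] -= dy * corr * 0.5
    return pos


for point in simulate_strip():
    print(f"{point[0]:.3f}, {point[1]:.3f}")
```

A real cloth solver works on a 2D mesh with bending and shear constraints and a time-varying wind field, but stiffness in this sketch already maps to the number of constraint iterations.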

Q 24. What is your experience with working on large-scale VFX projects?

I’ve worked on numerous large-scale VFX projects, often involving teams of artists and technicians. Experience includes managing assets, collaborating effectively with other departments, and adhering to strict deadlines and pipelines. On one project involving a large-scale battle sequence, we had to coordinate the work of multiple teams – modeling, rigging, animation, simulation, lighting, and compositing – to ensure consistency and coherence in the final product. The challenges of large-scale projects go beyond the technical aspects; they often involve navigating complex organizational structures and communication protocols. Effective project management, clear communication, and the ability to adapt to changing requirements are all essential for success in these environments. Experience with version control systems like Perforce is vital for managing large numbers of assets and preventing conflicts.

For example, working on a film with extensive CGI environments required coordinating a distributed rendering process across numerous machines to ensure timely completion. This involved configuring render farms and managing data transfer and storage efficiently.

Q 25. Explain your understanding of the challenges of working with different frame rates and resolutions.

Different frame rates and resolutions present significant challenges in VFX. A higher frame rate (e.g., 60fps vs 24fps) means more frames for every second of footage, so render time and storage grow in step with the frame count. Similarly, higher resolutions multiply the pixel count: UHD 4K (3840x2160) has four times the pixels of 1080p, with render times and file sizes rising roughly in proportion. These differences demand different approaches to simulation and rendering. Lower frame rates leave more of the budget for each frame, while higher frame rates put more pressure on keeping per-frame costs down. Resolution affects detail levels; higher resolutions require more detailed assets and simulations to avoid visible artifacts. Efficient workflows, careful asset management, and the judicious use of proxy geometry during simulation are crucial. This might involve running lower-resolution simulations during initial stages, then re-simulating at full resolution for final renders.

Imagine rendering a fluid simulation at 24fps versus 120fps. The 120fps version has five times as many frames to simulate, render, and store, but it delivers smoother motion. Similarly, a higher resolution captures much greater detail, while render times and file sizes grow roughly in proportion to the pixel count.
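The arithmetic behind these comparisons can be sketched in a few lines. The example assumes UHD 4K (3840x2160), four channels (RGBA), and 16-bit half-float samples with no compression; these are illustrative assumptions, and actual file sizes depend on the format and compression used.

```python
def pixels_per_frame(width, height):
    """Number of pixels in a single frame."""
    return width * height


def uncompressed_frame_bytes(width, height, channels=4, bytes_per_channel=2):
    """Raw size of one frame, assuming RGBA half-float by default."""
    return width * height * channels * bytes_per_channel


def shot_frames(duration_seconds, fps):
    """Number of frames needed for a shot of the given length."""
    return round(duration_seconds * fps)


hd = pixels_per_frame(1920, 1080)
uhd = pixels_per_frame(3840, 2160)
print("pixel ratio 4K/1080p:", uhd / hd)

# A hypothetical 10-second shot at two frame rates.
for fps in (24, 120):
    frames = shot_frames(10, fps)
    total = frames * uncompressed_frame_bytes(3840, 2160)
    print(fps, "fps:", frames, "frames,", round(total / 1e9, 1), "GB uncompressed")
```

The frame count scales linearly with frame rate and the per-frame cost scales with pixel count, so moving from 1080p at 24fps to 4K at 120fps multiplies the raw data volume by about twenty.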

Q 26. Describe your experience with creating stylized VFX compared to photorealistic VFX.

Stylized VFX and photorealistic VFX require fundamentally different approaches. Photorealistic VFX aims for a one-to-one representation of reality, demanding high fidelity in modeling, texturing, lighting, and rendering. This often involves extensive use of physically based rendering (PBR) techniques and detailed simulations. Stylized VFX, however, embraces artistic interpretation and exaggeration. It often involves simplified models, non-photorealistic rendering (NPR) techniques, and stylized shaders to achieve a specific aesthetic. For example, I worked on a project that required both photorealistic environments and a stylized character design with exaggerated features and a cel-shaded look. This required distinct workflows and tools. Photorealistic shots relied on high-poly models, physically based shaders, and realistic lighting, while the character animation employed a simplified model with a custom shader to achieve the desired cel-shaded aesthetic. The challenge is often to integrate both styles seamlessly within the same project.

For instance, consider a scene with photorealistic cars and a stylized cartoon character. The cars require accurate materials, reflections, and lighting, while the character might employ flat shading and bold outlines. The skill lies in balancing these styles so the scene feels coherent.
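To make the flat-shading idea concrete, here is a minimal sketch of one common way to get a cel-shaded look: compute ordinary Lambert diffuse intensity, then snap it to a small number of flat bands. This is an illustrative technique, not the custom shader from the project described above; the function names and the band count are assumptions.

```python
def lambert(normal, light_dir):
    """Clamped dot product of a unit normal and a unit light direction."""
    dot = sum(n * l for n, l in zip(normal, light_dir))
    return max(0.0, dot)


def toon_shade(intensity, bands=3):
    """Snap a continuous intensity in [0, 1] to one of `bands` flat levels."""
    if bands < 2:
        raise ValueError("bands must be at least 2")
    intensity = min(max(intensity, 0.0), 1.0)
    step = min(int(intensity * bands), bands - 1)
    return step / (bands - 1)


# A surface facing the light directly, and a few intermediate intensities.
print(toon_shade(lambert((0.0, 0.0, 1.0), (0.0, 0.0, 1.0))))
for value in (0.2, 0.5, 0.7):
    print(value, "->", toon_shade(value))
```

A photorealistic shader would keep the continuous intensity and layer physically based terms on top of it; the stylized version deliberately discards that gradation to produce hard-edged tonal steps.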

Q 27. How would you troubleshoot a rendering issue during a tight deadline?

Troubleshooting rendering issues under tight deadlines requires a systematic approach. First, I would identify the nature of the problem: is it a crash, an artifact, or an incorrect render setting? Then, I would check the render logs for error messages or warnings, which often provide clues about the root cause. Common issues include memory limitations, scene setup problems (e.g., incorrect paths or missing textures), and bugs in shaders. If the issue stems from memory limitations, optimizing the scene geometry, reducing render resolution, or using a more efficient render engine might help. If it’s a shader bug, I’d examine the shader code for errors. Incorrect paths or missing textures call for checking file paths and ensuring that assets are properly linked. If the problem persists, I might contact the rendering software’s support team or consult community forums. Rendering simpler diagnostic passes with reduced settings can also help isolate the problematic elements within the scene.

A step-by-step example: If a render crashes, I would first check the render log for error messages. If it points to memory issues, I would attempt reducing the render resolution or scene complexity. If that doesn’t work, I would try restarting the machine and rendering a smaller test section of the scene to identify the point of failure.
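The log-checking step can be partly automated. The sketch below scans log lines for a few keyword patterns and groups the hits by likely cause. The patterns and the sample log lines are hypothetical; every renderer words its messages differently, so the patterns would need to be adapted to the software in use.

```python
import re

# Hypothetical patterns, not taken from any specific renderer.
PATTERNS = {
    "memory": re.compile(r"out of memory|bad_alloc|memory limit", re.IGNORECASE),
    "missing_file": re.compile(r"file not found|missing texture|cannot open", re.IGNORECASE),
    "shader": re.compile(r"shader.*(error|failed)", re.IGNORECASE),
}


def triage_log(lines):
    """Group matching log lines by likely cause, keeping line numbers."""
    findings = {key: [] for key in PATTERNS}
    for number, line in enumerate(lines, start=1):
        for key, pattern in PATTERNS.items():
            if pattern.search(line):
                findings[key].append((number, line.strip()))
    return findings


# Made-up log excerpt for illustration only.
sample_log = [
    "INFO  loading scene shot_010.scene",
    "WARN  missing texture: textures/wall_diffuse.exr",
    "ERROR out of memory while allocating tile buffer",
]

for cause, hits in triage_log(sample_log).items():
    print(cause, hits)
```

Under deadline pressure, a quick pass like this narrows down where to look first; it does not replace reading the full log around each hit.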

Q 28. Describe your understanding of different compression techniques for VFX assets.

VFX assets require efficient compression techniques to manage storage and bandwidth. Different compression methods are suitable for different asset types. For example, images may use lossy compression such as JPEG or lossless compression such as PNG. OpenEXR supports both: lossless schemes such as ZIP and PIZ, and lossy ones such as DWAA. JPEG offers high compression ratios but sacrifices some image quality, while losslessly compressed PNG and OpenEXR files preserve image quality at the cost of larger file sizes. OpenEXR is frequently preferred for the high-dynamic-range (HDR) images used in lighting and compositing. Video compression methods like H.264 or H.265 are used for compressing animation sequences, balancing file size and visual quality. Geometry often uses lossless compression to preserve the precision of the model, often integrated into the file format itself (e.g., Alembic). Choosing the right compression method is crucial; lossy compression might be acceptable for background elements but unacceptable for detailed foreground assets.

Consider a high-resolution texture: JPEG might be suitable for a background image where subtle quality loss is acceptable, but a character’s skin texture would need a lossless format such as PNG or losslessly compressed OpenEXR to preserve detail.
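The difference between lossless and lossy compression can be shown with a small experiment. The sketch below uses Python's standard `zlib` module as a stand-in for a lossless image codec, and simple 16-bit to 8-bit quantization as a stand-in for a lossy one. The synthetic gradient and the helper names are assumptions for illustration; real formats such as JPEG or OpenEXR use far more sophisticated schemes.

```python
import zlib


def make_gradient(width, height):
    """Build synthetic 16-bit grayscale samples as a flat list."""
    return [((x * 65535) // (width - 1) + y) % 65536
            for y in range(height) for x in range(width)]


def pack16(samples):
    """Serialize 16-bit samples as little-endian bytes."""
    return b"".join(s.to_bytes(2, "little") for s in samples)


def unpack16(data):
    """Inverse of pack16."""
    return [int.from_bytes(data[i:i + 2], "little") for i in range(0, len(data), 2)]


def lossless_roundtrip(samples):
    """Compress and decompress without discarding any information."""
    packed = zlib.compress(pack16(samples))
    return unpack16(zlib.decompress(packed)), len(packed)


def lossy_roundtrip(samples):
    """Drop the low 8 bits before compressing; they cannot be recovered."""
    reduced = bytes(s >> 8 for s in samples)
    packed = zlib.compress(reduced)
    restored = [b << 8 for b in zlib.decompress(packed)]
    return restored, len(packed)


samples = make_gradient(64, 4)
exact, exact_size = lossless_roundtrip(samples)
approx, approx_size = lossy_roundtrip(samples)
max_error = max(abs(a - b) for a, b in zip(samples, approx))
print("lossless identical:", exact == samples, "bytes:", exact_size)
print("lossy identical:", approx == samples, "bytes:", approx_size, "max error:", max_error)
```

The lossless path returns exactly the original samples, while the lossy path returns values that are close but permanently altered, which is the trade-off to weigh per asset.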

Key Topics to Learn for Your Special Effects Cinematography Interview

Landing your dream Special Effects Cinematography job requires a deep understanding of both the theoretical and practical aspects of the field. Prepare yourself by mastering these key areas:

- Camera Techniques for VFX: Understanding camera movement, framing, and shot composition specifically within the context of integrating visual effects. Consider how different camera choices impact the final composite.

- Pre-visualization and Planning: Discuss your experience with storyboarding, previs, and postvis. Explain how these processes streamline VFX integration and minimize on-set challenges.

- Lighting for VFX: Explore the crucial role of lighting in achieving seamless VFX integration. Discuss techniques for creating consistent lighting across live-action and digital elements, including practical lighting considerations for green/blue screen work.

- Working with VFX Supervisors and Teams: Showcase your understanding of collaborative workflows and communication strategies vital for effective collaboration within a VFX pipeline. This includes understanding different VFX software and workflows.

- Practical Effects and their integration with CGI: Demonstrate your understanding of the interplay between practical and digital effects, including how to plan and execute shoots that facilitate seamless integration of both.

- Troubleshooting and Problem-Solving: Be ready to discuss scenarios where VFX integration presented unforeseen challenges, and how you approached problem-solving creatively and efficiently. Showcase your technical skills and adaptability.

- Software Proficiency: Highlight your skills in the industry-standard software most relevant to your experience, such as Nuke, Maya, or After Effects. Be prepared to discuss your experience with specific tools and techniques.

Next Steps: Level Up Your Career

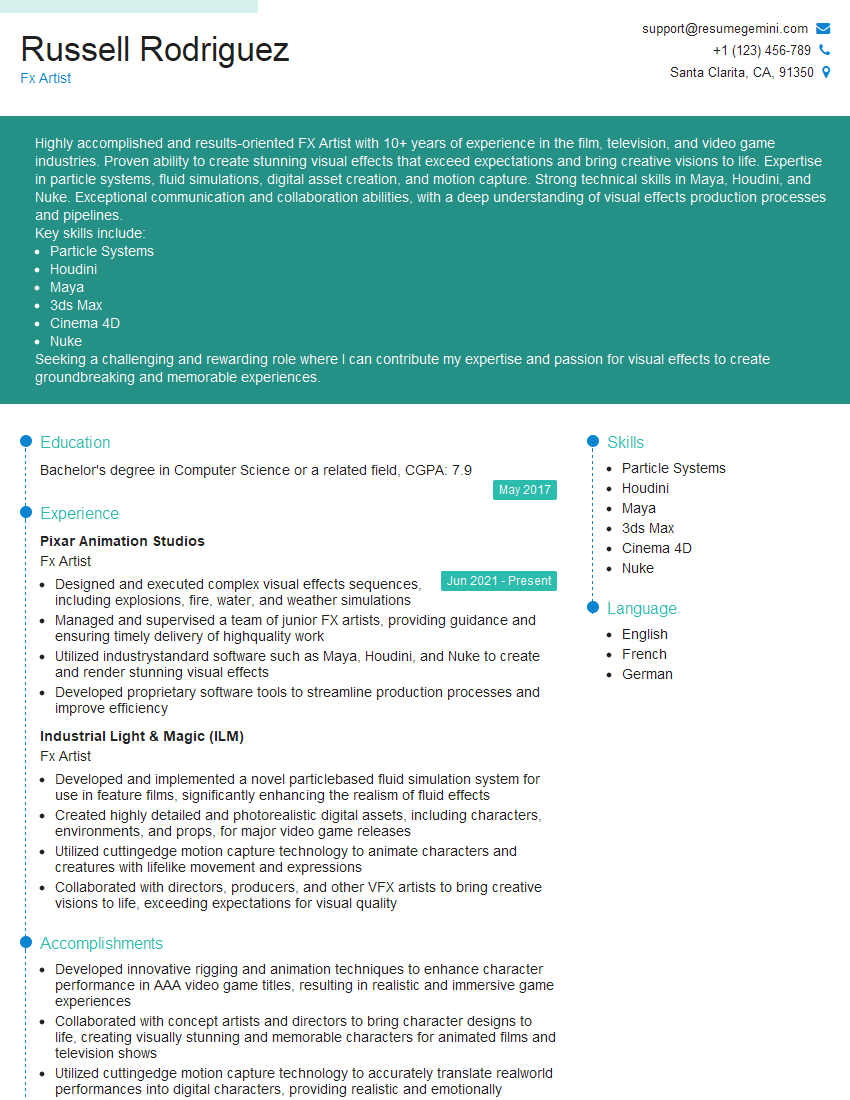

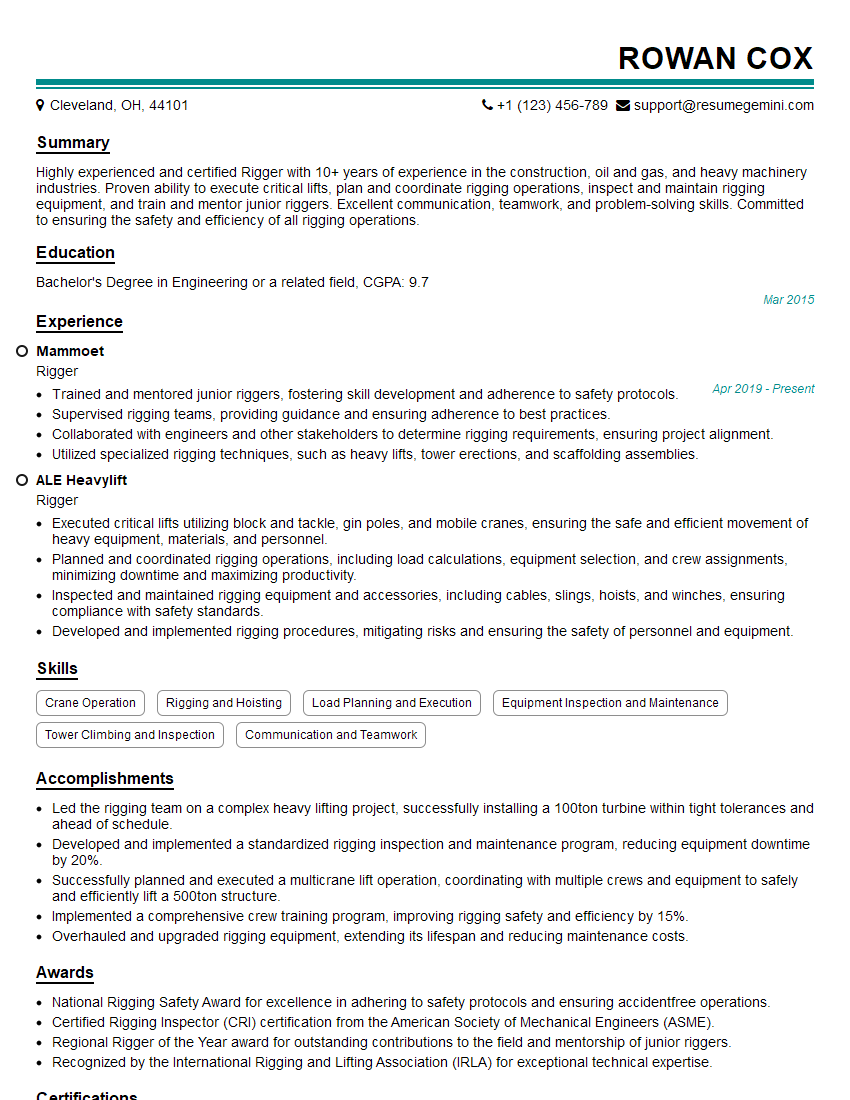

Mastering Special Effects Cinematography opens doors to exciting and rewarding career opportunities in film, television, and beyond. To maximize your job prospects, creating a strong, ATS-friendly resume is critical. This means tailoring your resume to highlight the specific skills and experiences most relevant to each job application.

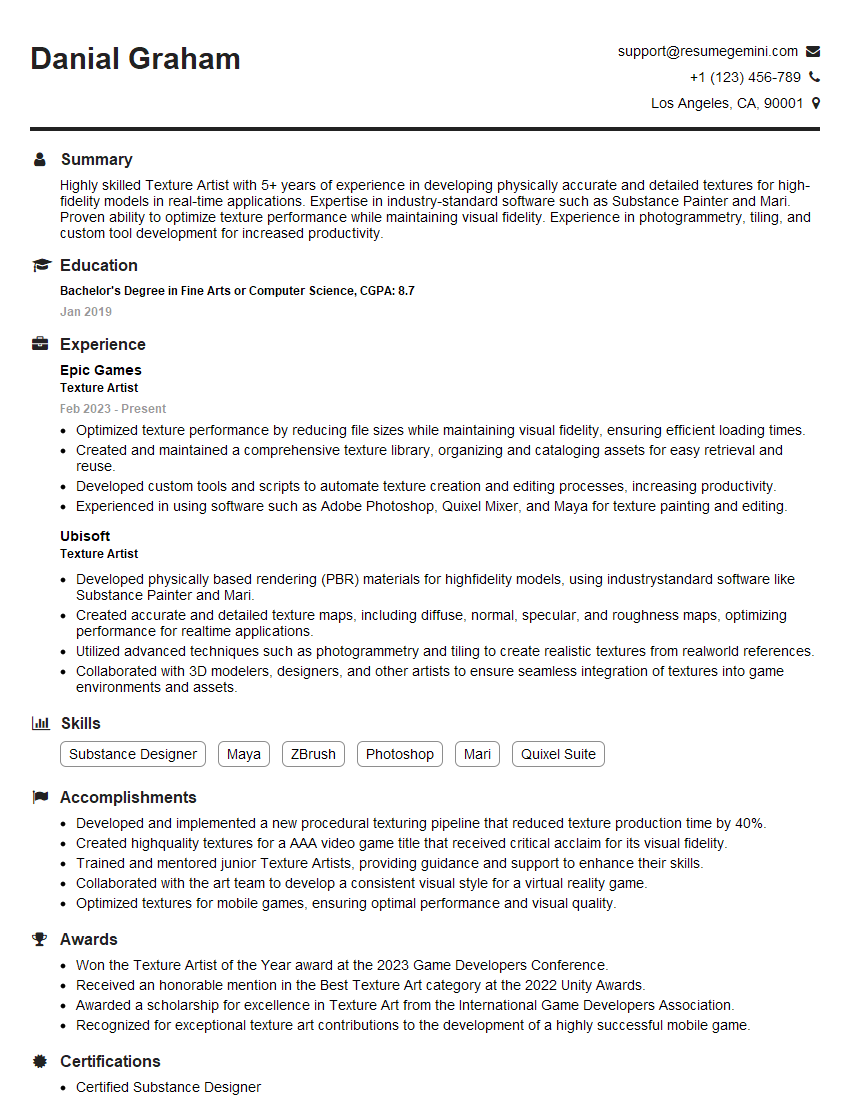

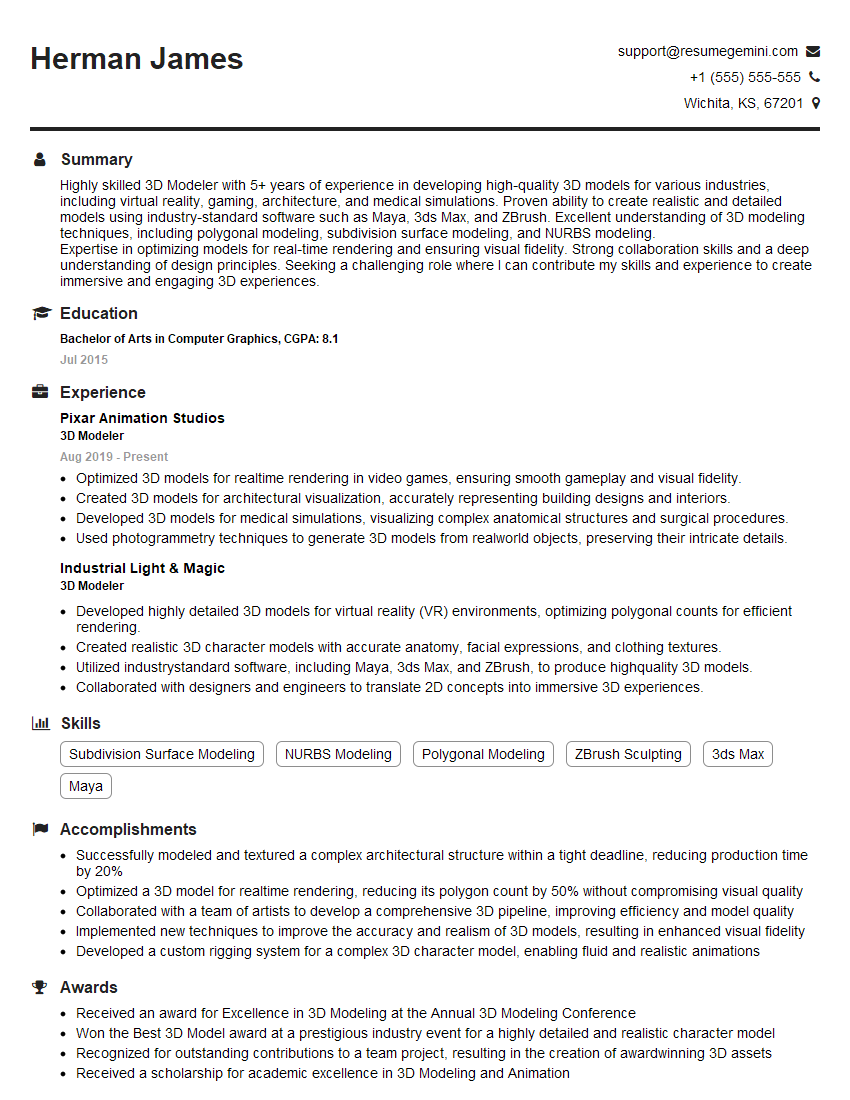

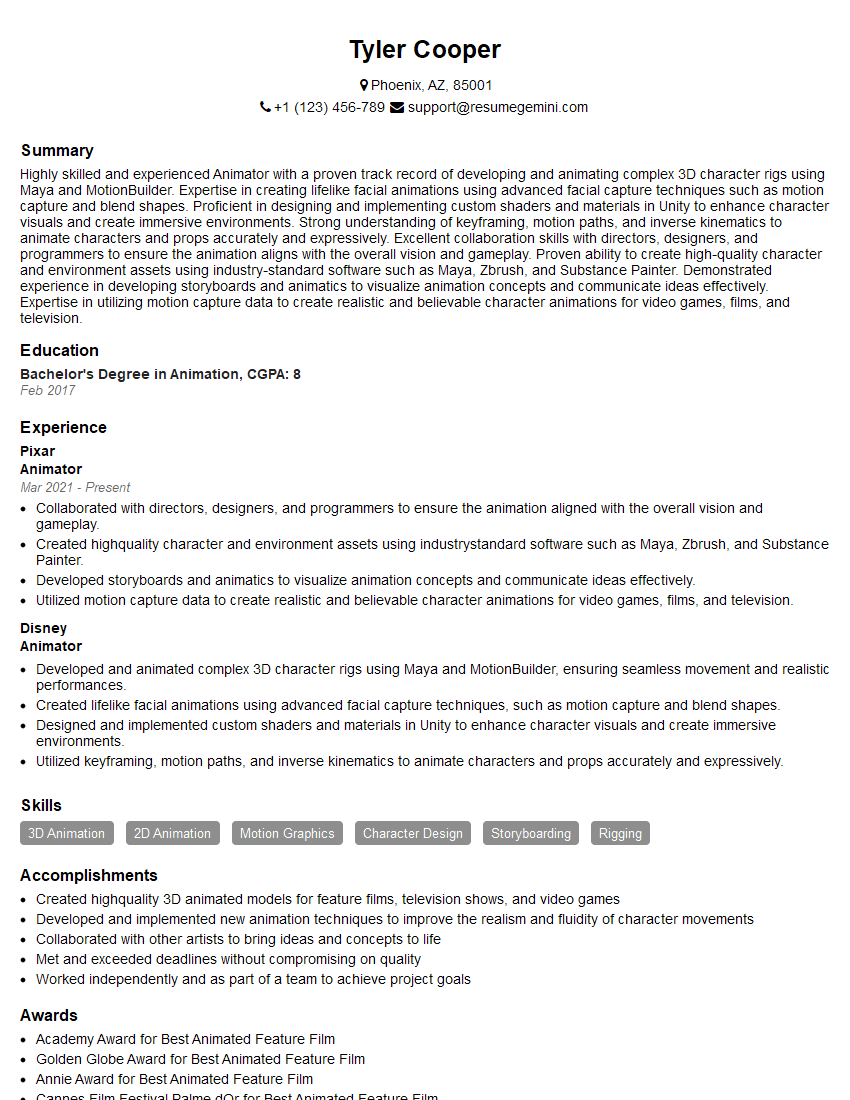

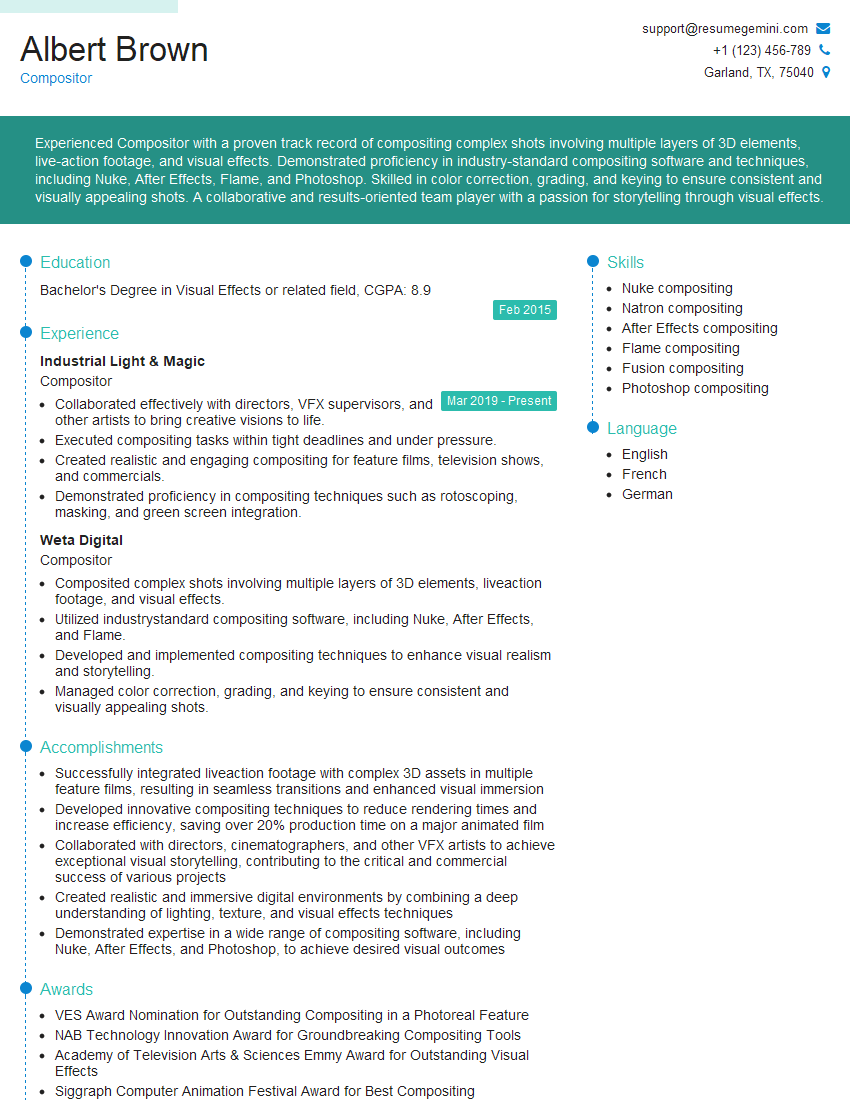

We highly recommend using ResumeGemini to build a professional, impactful resume that gets noticed. ResumeGemini provides the tools and resources to craft a resume that truly showcases your unique skills and experience. Examples of resumes tailored for Special Effects Cinematography professionals are available to help guide you. Take the next step towards your dream career today!
